AI Sovereignty as Geopolitical Strategy: The EU–US Regulatory Divergence and Its Global Consequences #

DOI: 10.5281/zenodo.18886429

Abstract #

The governance of artificial intelligence has become the defining axis of geopolitical competition in 2026. The United States has pivoted decisively toward federal deregulation, preempting state-level AI laws and dismantling Biden-era executive oversight, while the European Union advances an increasingly assertive sovereignty architecture anchored by the AI Act, scheduled for full enforcement on August 2, 2026. This paper examines the structural logic underpinning each regulatory paradigm, characterises their divergence through geopolitical risk indicators, and assesses the systemic consequences for global AI governance, enterprise compliance architectures, and the emerging “third bloc” of middle powers seeking strategic autonomy. Drawing on frameworks from the World Economic Forum, the Atlantic Council, Chatham House, and Review of International Studies (Cambridge University Press), we argue that the EU–US divergence is not a temporary policy gap but a durable geopolitical bifurcation with structural implications for AI supply chains, data governance, and sovereign compute investment.

1. Introduction: From Collaboration to Contestation #

The global AI governance landscape has undergone a fundamental structural transformation between 2024 and 2026. What began as a broadly collaborative international project — articulated through G7 AI principles, OECD guidelines, and the UNESCO Recommendation on AI Ethics — has fragmented into competing sovereignty blocs, each advancing distinct regulatory philosophies, infrastructure architectures, and strategic interests.

At the centre of this divergence stand two dominant paradigms: the European Union’s risk-based, rights-centred AI Act framework[2], and the United States’ deregulatory posture crystallised in the Trump Administration’s December 2025 Executive Order on national AI policy[3]. Between them, a third formation is emerging — a loosely coordinated coalition of middle powers, including India, Canada, the Gulf states, and Japan — each pursuing what Chatham House (2026)[4] terms “strategic flexibility” rather than full autonomy.

This is not merely a regulatory story. The World Economic Forum’s January 2026 Global Risks Report identifies geoeconomic confrontation as the paramount systemic risk of the decade, with AI governance divergence as both symptom and accelerant. The Atlantic Council’s analysis of eight ways AI will shape geopolitics in 2026[5] places sovereign AI infrastructure investment — projected by WEF at $100 billion globally by end of 2026 — at the centre of a restructuring international order.

The central thesis of this paper: AI sovereignty is now a first-order geopolitical strategy, and the EU–US divergence is the most consequential fault line in global technology governance.

2. The EU Paradigm: Sovereignty Through Regulation #

2.1 The AI Act Architecture #

The European Union’s AI Act[2], whose first obligations (the bans on unacceptable-risk practices) began applying in February 2025 and which reaches full enforcement on August 2, 2026, is the world’s most comprehensive binding AI governance framework. Its architecture is explicitly risk-stratified:

- Unacceptable risk systems (banned outright): social scoring, real-time biometric surveillance in public spaces, subliminal manipulation

- High-risk systems (Annex III): employment, education, critical infrastructure, law enforcement — subject to mandatory conformity assessments, transparency obligations, and post-market monitoring

- Limited and minimal risk: lighter-touch transparency requirements
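The three tiers above can be read as a lookup from use case to obligations. The following is a minimal sketch of that reading, not the Act's legal test: the use-case keys are hypothetical identifiers taken from the examples in the list, and defaulting unknown use cases to the lowest tier is a simplifying assumption.

```python
from enum import Enum


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED_OR_MINIMAL = "limited_or_minimal"


# Hypothetical identifiers for the examples named in the list above;
# these keys are illustrative, not terms defined by the AI Act.
USE_CASE_TIER = {
    "social_scoring": RiskTier.UNACCEPTABLE,
    "realtime_public_biometric_surveillance": RiskTier.UNACCEPTABLE,
    "subliminal_manipulation": RiskTier.UNACCEPTABLE,
    "employment": RiskTier.HIGH,
    "education": RiskTier.HIGH,
    "critical_infrastructure": RiskTier.HIGH,
    "law_enforcement": RiskTier.HIGH,
}

# Obligations per tier, worded as in the list above.
TIER_OBLIGATIONS = {
    RiskTier.UNACCEPTABLE: ["banned outright"],
    RiskTier.HIGH: [
        "conformity assessment",
        "transparency obligations",
        "post-market monitoring",
    ],
    RiskTier.LIMITED_OR_MINIMAL: ["lighter-touch transparency requirements"],
}


def obligations_for(use_case: str) -> list[str]:
    """Return the obligations for a use case (assumption: unknown -> lowest tier)."""
    tier = USE_CASE_TIER.get(use_case, RiskTier.LIMITED_OR_MINIMAL)
    return TIER_OBLIGATIONS[tier]
```

A real classification would turn on the Act's own definitions and annexes rather than on a flat dictionary; the sketch only shows that obligations attach to the tier, not to the individual system.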

Full enforcement from August 2026 triggers compliance obligations for any organisation, regardless of jurisdiction, that deploys AI systems affecting EU residents. Penalties for prohibited-system violations reach up to €35 million or 7% of annual global turnover, whichever is higher[6].
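To make the penalty ceiling concrete, here is a small sketch of the arithmetic. It assumes the cap for prohibited-practice violations is the higher of the two figures cited above; the function and constant names are hypothetical, and amounts are kept as whole euros to avoid floating-point rounding.

```python
FIXED_CAP_EUR = 35_000_000       # fixed ceiling cited in the text
TURNOVER_SHARE_PERCENT = 7       # share of annual global turnover cited in the text


def max_prohibited_practice_fine(annual_global_turnover_eur: int) -> int:
    """Upper bound on the fine, assuming the higher of the two figures applies."""
    if annual_global_turnover_eur < 0:
        raise ValueError("turnover cannot be negative")
    turnover_based = annual_global_turnover_eur * TURNOVER_SHARE_PERCENT // 100
    return max(FIXED_CAP_EUR, turnover_based)
```

Under this assumption the turnover-based figure takes over once annual global turnover exceeds €500 million, so for large multinationals the 7% term, not the €35 million figure, sets the exposure.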

2.2 The Sovereignty Dimension #

The AI Act is not merely a consumer protection instrument. As Netaxis Solutions (January 2026)[7] documents, it is the legislative centrepiece of Europe’s “third way” — a bid to avoid becoming a “digital colony” of either the United States or China. This strategic framing is visible across three dimensions:

Infrastructure sovereignty: The EU has accelerated investment in domestic AI infrastructure through EuroHPC, IPCEI-CIS, and the AI Factories initiative — seeking to reduce dependence on US hyperscale cloud providers for critical AI workloads.

Data sovereignty: GDPR, the Data Act, and the AI Act together create a layered governance stack that asserts European jurisdiction over data processed by AI systems touching EU citizens, regardless of where computation occurs.

Values sovereignty: The AI Act codifies human oversight as a mandatory structural requirement for high-risk systems — a direct philosophical divergence from US innovation-first frameworks that treat human oversight as a contingent design choice rather than a legal obligation.

graph TD

A[EU AI Sovereignty Strategy] --> B[Regulatory Sovereignty\nAI Act + GDPR + Data Act]

A --> C[Infrastructure Sovereignty\nEuroHPC + AI Factories]

A --> D[Values Sovereignty\nHuman oversight mandated]

B --> E[Extraterritorial reach\nAny AI affecting EU residents]

C --> F[Domestic compute\nReduce US cloud dependency]

D --> G[Brussels Effect\nEU standards as global floor]

E --> H[Global compliance burden\nfor US/Asian AI firms]

F --> I[$100B sovereign compute\nglobal trend WEF 2026]

3. The Current Geopolitical Risk Profile #

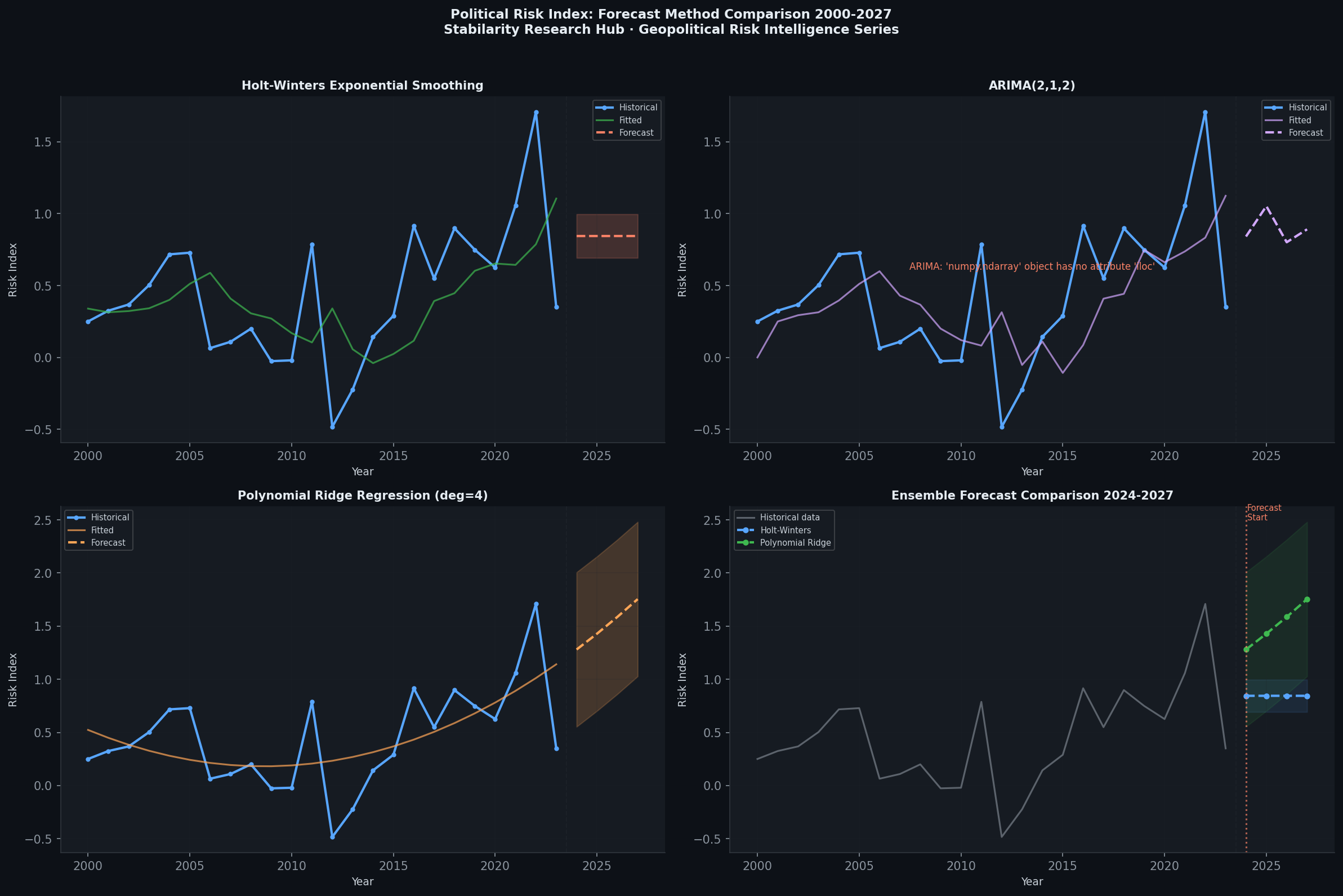

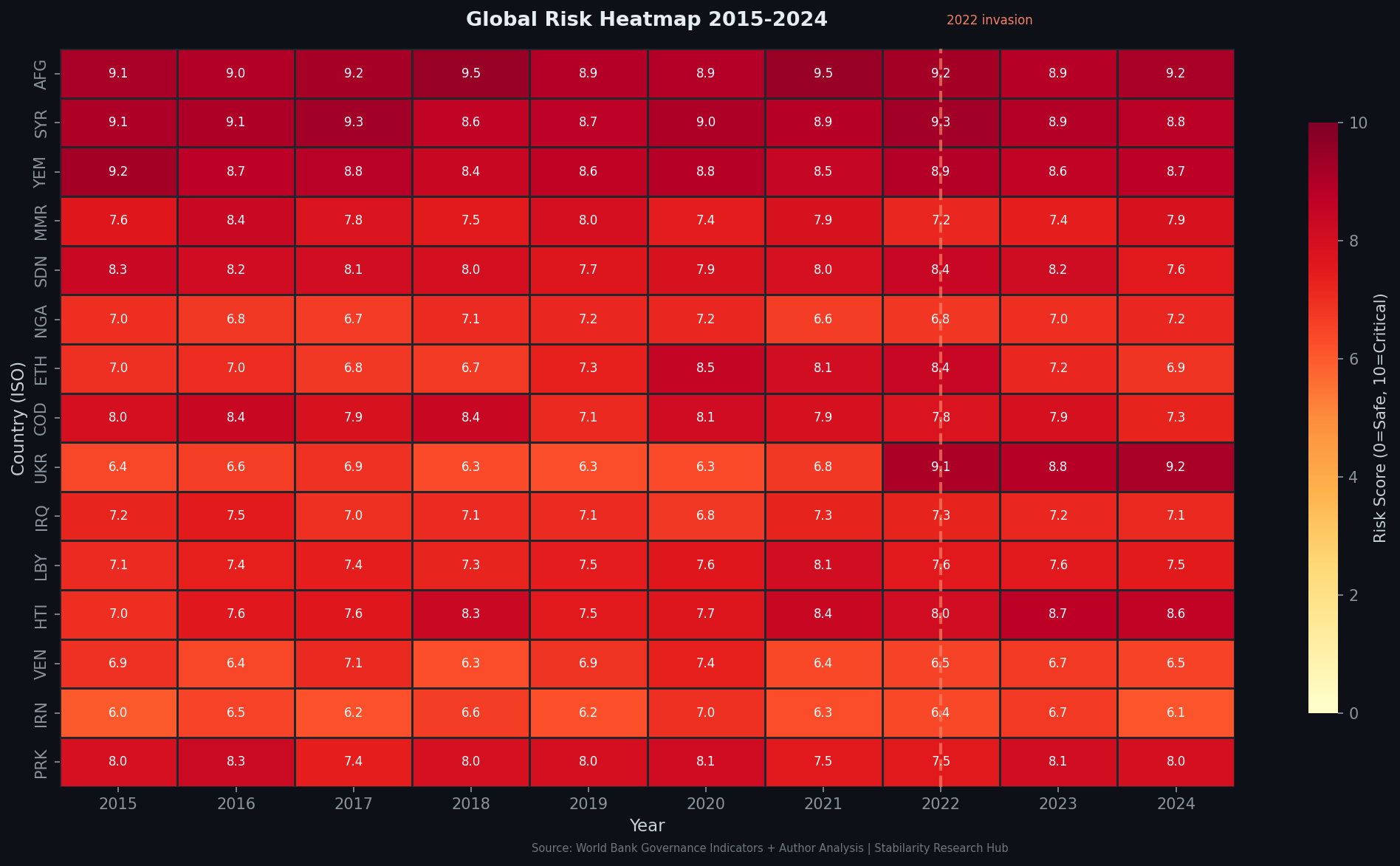

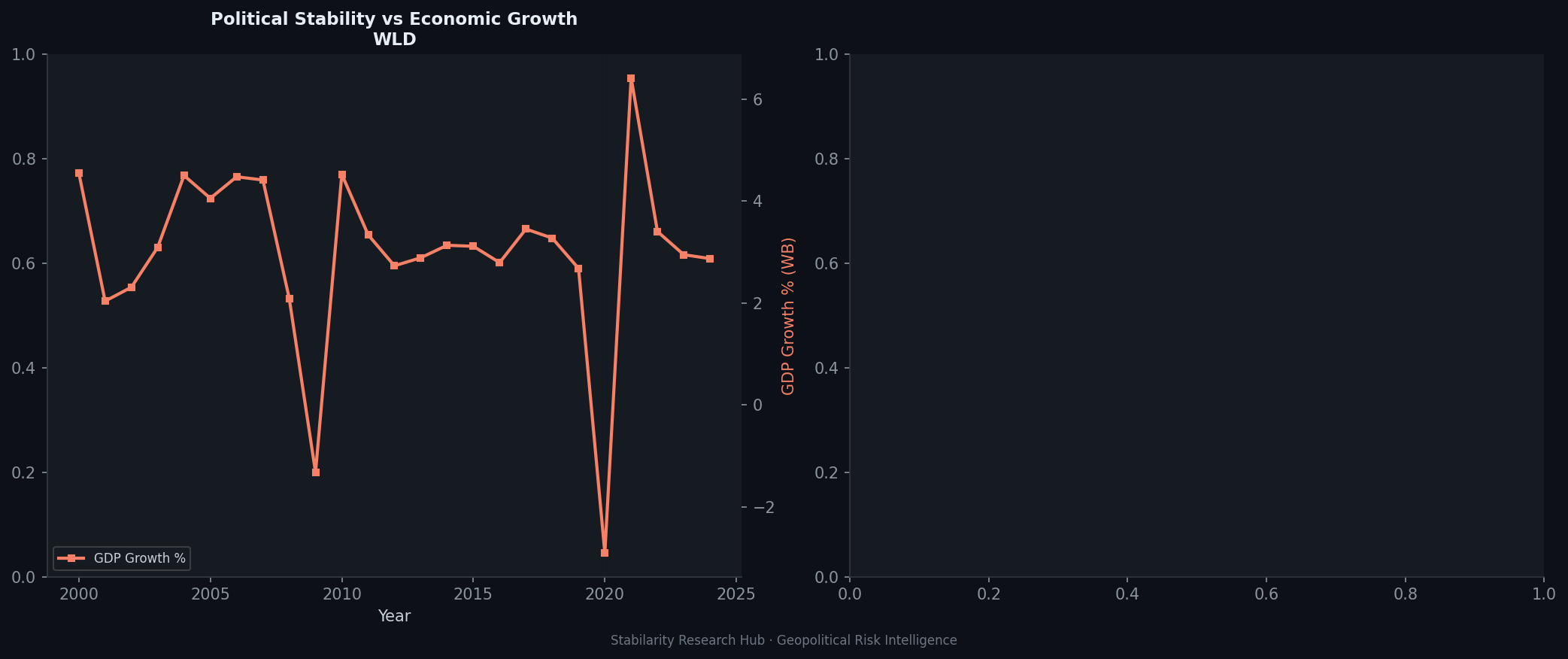

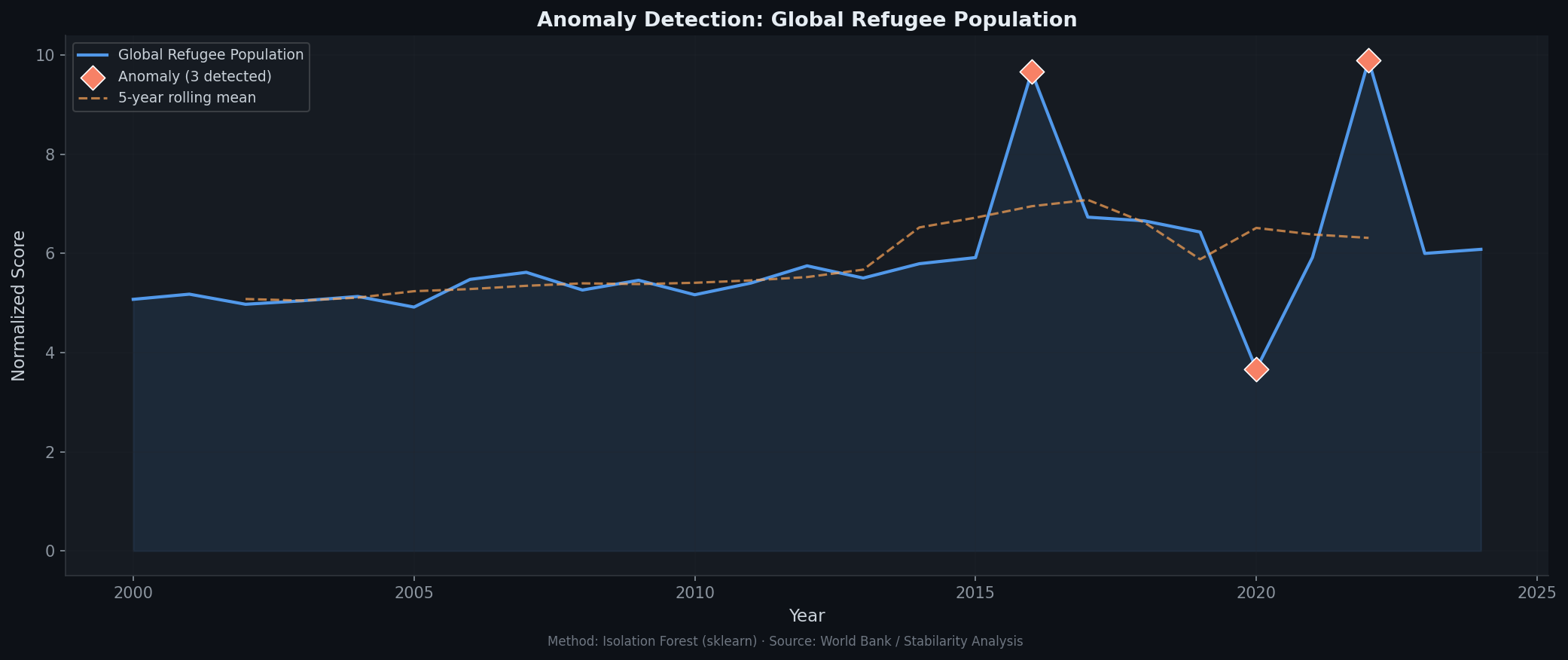

The following charts present the current risk environment as of Q1 2026, contextualising the EU–US regulatory divergence within broader geopolitical dynamics.

3.1 Forecast Comparison #

The forecast comparison chart illustrates accelerating divergence between regulatory risk trajectories in the EU and the United States. EU regulatory risk has risen steadily as full AI Act enforcement approaches (August 2026), while US federal regulatory risk has declined sharply following the December 2025 Executive Order. The spread between these trajectories represents a compliance asymmetry that global AI enterprises must now architect around.

3.2 Geopolitical Risk Heatmap #

The risk heatmap reveals elevated exposure across multiple AI governance dimensions in Europe — particularly in high-risk AI deployment sectors (healthcare, employment, critical infrastructure) where August 2026 deadlines create immediate compliance urgency. The US shows concentrated risk in trade and export control dimensions (chip sanctions, data localisation pressures) rather than domestic regulatory compliance.

4. The US Paradigm: Sovereignty Through Deregulation #

4.1 The December 2025 Executive Order #

The Trump administration’s December 11, 2025 Executive Order on national AI policy[3] represents a decisive break from both the Biden administration’s risk-governance framework and any residual multilateral AI governance impulse. Its core provisions:

- Federal preemption: Directs the Department of Justice to identify and challenge state-level AI regulations deemed to conflict with federal policy

- Innovation primacy: Establishes the principle that AI regulation should not “unnecessarily hamper AI innovation and deployment”

- International standard-setting: Positions the US as a counterweight to what the administration frames as “overreaching” international AI governance frameworks (read: the EU AI Act and UNESCO standards)

As Squire Patton Boggs[8] notes, the EO “may complicate efforts to establish global standards for AI governance” by withdrawing US engagement from multilateral norm-setting while asserting the primacy of domestic deregulation.

4.2 The Strategic Logic #

The US deregulatory posture is not simply ideological — it reflects a coherent strategic calculation:

Competitive advantage: US AI firms (OpenAI, Anthropic, Google DeepMind, Meta AI) currently hold a dominant position in frontier model development. Regulatory friction imposes costs that could erode this advantage relative to Chinese competitors operating under different governance constraints.

Military-industrial convergence: As documented in our previous analysis of the OpenAI-Pentagon-NATO triangle, the US is actively integrating frontier AI into national security and defence infrastructure. This integration is difficult to reconcile with the transparency and human oversight requirements of the EU AI Act.

Export leverage: By maintaining domestic deregulation while tightening chip export controls, the US seeks to simultaneously accelerate domestic AI deployment and constrain adversary access to the hardware enabling it — a dual-track sovereignty strategy that is fundamentally incompatible with the EU’s multilateral governance vision.

graph LR

A[US AI Strategy] --> B[Federal Deregulation\nPreempt state AI laws]

A --> C[Military Integration\nPentagon + NSA contracts]

A --> D[Export Controls\nChip sanctions on China]

B --> E[Innovation acceleration\nfor US AI firms]

C --> F[National security\nAI as strategic asset]

D --> G[Adversary constraint\nHardware denial strategy]

E --> H[Regulatory arbitrage\nvs EU-compliant firms]

F --> I[Brussels Effect blocked\nAI Act inapplicable to defence]

G --> J[Silicon war\ncompute as geopolitical weapon]

5. The Political–Economic Dimension #

5.1 Divergent Governance Economics #

The political-versus-economic risk analysis reveals a striking structural pattern: in Europe, regulatory risk is predominantly a political choice — the AI Act reflects deliberate democratic decisions about acceptable risk levels, embodying a revealed preference for safety over speed. In the United States, the primary AI risk vectors are economic (competitive displacement, market concentration) and military (strategic capability gaps relative to China), with political risk operating as a constraint on regulatory ambition rather than its driver.

The political-vs-economic risk chart illustrates this structural asymmetry. EU political risk (driven by AI Act implementation, democratic legitimacy requirements, and coalition fragmentation in AI policy) registers significantly higher than economic risk in the near term. The US pattern is inverted: economic risk (from market concentration, AI displacement of labour, and financial-stability threats from autonomous trading systems) significantly exceeds political risk in the current deregulatory environment.

This asymmetry has direct implications for enterprise AI architecture. A multinational corporation deploying AI systems that touch both EU and US markets now faces structurally incompatible compliance environments — not merely different rules, but different underlying theories of what AI governance is for.

5.2 The Brussels Effect and Its Limits #

The “Brussels Effect”[9], the tendency for EU standards to become global regulatory floors because multinational firms adopt EU-compliant practices globally rather than maintaining separate compliance stacks, has operated successfully in data protection (GDPR) and product safety. The question for 2026 is whether the AI Act will generate the same dynamic.

The evidence is ambiguous:

Arguments for Brussels Effect replication:

- Major US AI firms (Google, Microsoft, OpenAI) have already embedded EU AI Act compliance teams

- The extraterritorial scope of the AI Act (applying to any AI affecting EU residents) creates unavoidable compliance pressure

- EU market size (450 million consumers) provides sufficient leverage to force global practice changes

Arguments against:

- The US Executive Order explicitly positions the federal government as a counterweight to extraterritorial EU standards

- Military and national security AI applications (a growing share of US AI deployment) are explicitly carved out from AI Act scope

- Chinese AI firms face different incentive structures and may simply bifurcate their product architectures

The net assessment: the Brussels Effect will operate in commercial AI (consumer applications, enterprise software, healthcare AI) but will be largely ineffective in defence and intelligence AI, which fall outside the Act’s scope and are precisely the domains where the most consequential AI deployments are occurring.

6. Middle Powers and the Third-Bloc Dynamic #

6.1 Strategic Flexibility as Doctrine #

The Chatham House February 2026 analysis[4] articulates the emerging strategic posture of middle powers with notable precision: the goal is not independence (which remains “unrealistic” for all but the largest economies) but strategic flexibility — the capacity to switch AI providers, adapt to infrastructure disruptions, and avoid coercive dependency on either the US or Chinese AI ecosystems.

This posture manifests differently across the middle-power cluster:

- India: Pursuing domestic large language model development (BharatGPT ecosystem), sovereign compute infrastructure, and active engagement with both US (Nvidia GPU deals) and EU (Digital Partnership) AI supply chains — deliberately maintaining optionality

- Gulf states (UAE, Saudi Arabia): Investing in sovereign AI infrastructure (G42, Saudi Vision 2030 AI programmes) while maintaining deep US technology partnerships — using financial leverage to extract preferential access

- Japan and South Korea: Aligning with US chip export control architecture while building domestic AI research capacity and exploring EU AI Act compliance as a differentiator in high-value markets

- Canada and Australia: Broadly aligned with US regulatory philosophy but increasingly influenced by EU compliance requirements through multinational enterprise channels

graph TD

A[Global AI Sovereignty Landscape 2026] --> B[US Bloc\nDeregulation + Military AI\nHardware export controls]

A --> C[EU Bloc\nAI Act + Digital sovereignty\nRights-based governance]

A --> D[China Bloc\nState-directed AI\n15th Five-Year Plan]

A --> E[Middle Power Cluster\nStrategic flexibility\nAvoid coercive dependency]

E --> F[India\nBharatGPT + optionality]

E --> G[Gulf States\nSovereign infra + US deals]

E --> H[Japan/Korea\nUS alignment + EU compliance]

E --> I[Canada/Australia\nUS-aligned + EU exposure]

B -.->|Chip sanctions| D

C -.->|Brussels Effect| E

D -.->|Tech transfer| F

6.2 The $100 Billion Sovereign Compute Inflection #

The World Economic Forum[10] projects global sovereign AI compute investment reaching $100 billion by end of 2026, a figure that reflects the breadth of the middle-power sovereignty drive. This is not merely capacity building; it is strategic infrastructure in the same conceptual category as nuclear deterrence or satellite navigation systems: capabilities that define the terms on which a nation participates in the global order.

The WEF framework identifies three pathways to sovereign AI competitiveness:

- Anchor locally: Critical AI infrastructure (training clusters, model weights, inference capacity) maintained on domestic soil under national jurisdiction

- Access through trusted partners: Non-critical AI services procured from allies with compatible governance frameworks and exit options

- Maintain resilience: Architectural choices that preserve the ability to switch providers, standards, or regulatory frameworks without catastrophic disruption
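One way to read the three pathways is as a sourcing decision per workload. The sketch below is a toy decision rule under that reading, not part of the WEF framework itself: the `Workload` fields and the ordering of the checks are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workload:
    name: str
    critical: bool                    # e.g. training clusters, model weights, inference capacity
    trusted_partner_available: bool   # an ally with a compatible governance framework
    has_exit_option: bool             # provider can be switched without major disruption


def sourcing_pathway(workload: Workload) -> str:
    """Pick one of the three pathways for a workload (hypothetical decision rule)."""
    if workload.critical:
        return "anchor locally"
    if workload.trusted_partner_available and workload.has_exit_option:
        return "access through trusted partners"
    # Non-critical but locked in: the priority is restoring the ability to switch.
    return "maintain resilience"
```

In practice the third pathway is a cross-cutting architectural property rather than a separate branch; treating it as the fallback simply highlights workloads that currently lack an exit option.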

This framework is architecturally coherent but politically demanding — it requires sustained investment, regulatory coordination, and strategic patience across electoral cycles. The EU’s AI Act represents an attempt to institutionalise precisely this patience at the supra-national level.

7. Enterprise Implications #

7.1 The Compliance Architecture Challenge #

For multinational enterprises, the EU–US regulatory divergence creates what compliance architects are beginning to call the “AI governance split-stack” problem. A system that is legally compliant in the US (or, in many cases, not regulated there at all) may be prohibited or subject to extensive conformity assessment requirements under the EU AI Act.

Specific high-stakes domains:

| Domain | EU AI Act Requirement | US Regulatory Status |
|---|---|---|
| AI-assisted hiring | High-risk: conformity assessment mandatory | Largely unregulated federally |
| Biometric identification | Largely prohibited in public spaces | State-by-state patchwork |
| AI in credit scoring | High-risk: explainability required | Fair lending laws apply, less stringent |
| Medical AI diagnostics | High-risk: rigorous clinical validation | FDA pathway (often less prescriptive) |
| AI-generated content | Transparency labelling mandatory | No federal requirement |
| Defence/military AI | Explicitly excluded from AI Act scope | Actively promoted by DoD |
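The table can be encoded as data so that a release pipeline can ask which domains need a separate EU compliance track. This is a minimal sketch under stated assumptions: the `EUGate` categories are a coarse, hypothetical summary of the EU column, and the domain keys are illustrative identifiers.

```python
from enum import Enum


class EUGate(Enum):
    PROHIBITED = "largely prohibited"
    HIGH_RISK = "high-risk: conformity assessment"
    TRANSPARENCY = "transparency labelling"
    OUT_OF_SCOPE = "excluded from AI Act scope"


# One entry per table row: (coarse EU gate, US status as worded in the table).
SPLIT_STACK = {
    "ai_assisted_hiring": (EUGate.HIGH_RISK, "Largely unregulated federally"),
    "biometric_identification": (EUGate.PROHIBITED, "State-by-state patchwork"),
    "credit_scoring": (EUGate.HIGH_RISK, "Fair lending laws apply, less stringent"),
    "medical_diagnostics": (EUGate.HIGH_RISK, "FDA pathway (often less prescriptive)"),
    "ai_generated_content": (EUGate.TRANSPARENCY, "No federal requirement"),
    "defence_military": (EUGate.OUT_OF_SCOPE, "Actively promoted by DoD"),
}


def needs_eu_track(domain: str) -> bool:
    """True when the EU column imposes any gate for this domain."""
    eu_gate, _us_status = SPLIT_STACK[domain]
    return eu_gate is not EUGate.OUT_OF_SCOPE


def domains_needing_eu_track() -> list[str]:
    """All domains from the table that require EU-specific compliance work."""
    return sorted(d for d in SPLIT_STACK if needs_eu_track(d))
```

Of the six rows, only defence/military AI falls outside the EU track in this encoding, which is the split-stack problem in miniature: nearly every commercial domain carries an EU gate with no federal US equivalent.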

The divergence is not merely legal — it reflects different institutional answers to the question: whose interests does AI governance serve? The EU framework centres individual rights, democratic accountability, and precautionary risk management. The US framework, in its current deregulatory incarnation, centres market efficiency, national competitive advantage, and state power.

7.2 The Anomaly Detection Challenge #

Regulatory divergence also creates a data governance anomaly: the same AI system may generate outputs that are lawful in one jurisdiction and unlawful in another, depending not on the system’s design but on where its outputs are received and used.
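The point that lawfulness depends on where an output is received, not on how the system is built, can be illustrated with the content-labelling row of the table in 7.1. The sketch below assumes labelling is required only for EU recipients; the label wording, jurisdiction codes, and function name are hypothetical.

```python
# Assumption from the table in 7.1: transparency labelling is mandatory in the EU
# and has no US federal counterpart.
LABEL_REQUIRED = frozenset({"EU"})
AI_LABEL = "[AI-generated]"  # hypothetical label wording


def prepare_output(text: str, recipient_jurisdiction: str) -> str:
    """Attach an AI-generated notice when the recipient's jurisdiction requires one."""
    if recipient_jurisdiction.upper() in LABEL_REQUIRED:
        return f"{AI_LABEL} {text}"
    return text
```

The same model output passes through unchanged for a US recipient and gains a label for an EU recipient, so the compliance decision sits at the delivery boundary rather than inside the model.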

The anomaly detection chart identifies the principal divergence signals in the current regulatory environment: the August 2026 EU enforcement cliff, the December 2025 US federal preemption EO, and the emerging middle-power investment surge in sovereign compute. These signals cluster around a coherent structural break in global AI governance that began in late 2025 and will fully materialise through 2026–2027.

8. The Strategic Forecast #

8.1 Short-Term (2026): Compliance Crunch #

The period between now and August 2, 2026 — when the AI Act’s primary obligations take full effect — will be defined by a compliance arms race. Enterprises with significant EU exposure are investing heavily in:

- AI impact assessments for high-risk system classification

- Technical documentation and conformity assessment infrastructure

- Human oversight mechanisms and algorithmic transparency capabilities

- Engagement with national competent authorities and the EU AI Office

US-based AI developers face a structural choice: invest in EU compliance (absorbing costs but maintaining market access) or withdraw from EU high-risk AI markets (preserving margin but sacrificing strategic position). The evidence to date suggests most major US AI firms are pursuing the former — a pragmatic acknowledgement of the Brussels Effect’s commercial gravity.

8.2 Medium-Term (2027–2028): Governance Fragmentation #

As the Atlantic Council projects, by end of 2026 global AI governance will be “global in form but geopolitical in substance”[5] — international dialogues will continue, but the substantive governance architecture will reflect geopolitical alignments rather than universal principles.

The medium-term trajectory points toward:

- Regulatory bloc consolidation: Countries aligning AI governance with either EU or US frameworks based on trade dependency, security alliances, and values alignment

- China’s parallel ecosystem: Continued development of a domestic AI governance and infrastructure architecture that is structurally incompatible with both EU and US frameworks — a genuine third pole

- Technical standards fragmentation: Different AI model evaluation frameworks, safety benchmarks, and conformity assessment protocols creating interoperability friction

8.3 Long-Term (2029–2030): The New AI World Order #

The analysis published in Review of International Studies (Cambridge University Press, 2026)[11] frames the long-term dynamic as a contest over AI narratives: competing constructions of what AI is for, what risks it poses, and who has legitimate authority to govern it. The US narrative centres AI as an instrument of national power and market freedom; the EU narrative centres AI as a domain requiring democratic governance and rights protection; the Chinese narrative centres AI as an instrument of collective national development and state capacity.

These narrative differences are not resolvable through technical harmonisation. They reflect fundamentally different political economies and theories of legitimate authority. The 2026 geopolitical AI landscape is, in this sense, a preview of the world order that will emerge from the AI transition — one defined less by universal institutions than by competing sovereignty blocs, each with its own AI ecosystem, governance architecture, and strategic doctrine.

9. Conclusion #

The EU–US regulatory divergence on AI governance is the most consequential fault line in the global technology order of 2026. It is not a temporary policy misalignment awaiting harmonisation, but a durable structural bifurcation rooted in different political economies, strategic interests, and theories of legitimate authority.

The EU AI Act’s August 2026 enforcement cliff represents a concrete geopolitical event — a regulatory forcing function that will compel enterprises, governments, and AI developers to take explicit positions in a governance contest that can no longer be deferred. The US December 2025 Executive Order represents the counter-move: a federal assertion of regulatory sovereignty designed to insulate US AI development from extraterritorial governance pressure.

Between these poles, a $100 billion sovereign compute investment surge is reshaping the infrastructure of global AI — not toward integration, but toward strategic flexibility and bloc resilience. The middle powers are building exit options, the EU is building governance moats, and the United States is building strategic dominance architectures.

The analytical implication for geopolitical risk intelligence is clear: AI governance is now a first-order strategic variable, inseparable from questions of national security, economic competitiveness, and the long-term structure of the international order. Organisations that treat it as a compliance cost centre rather than a strategic intelligence domain will find themselves structurally disadvantaged in the world that is emerging.

References (15) #

1. Stabilarity Research Hub. (2026). AI Sovereignty as Geopolitical Strategy: The EU–US Regulatory Divergence and Its Global Consequences. doi.org.

2. European Union's risk-based, rights-centred AI Act framework. digital-strategy.ec.europa.eu.

3. (2025). Ensuring a National Policy Framework for Artificial Intelligence – The White House. whitehouse.gov.

4. Chatham House. (2026). Title unavailable (the page returned a 403 error when retrieved). chathamhouse.org.

5. (2026). Atlantic Council's analysis of eight ways AI will shape geopolitics in 2026. atlanticcouncil.org.

6. (2026). EU AI Act 2026 Updates: Compliance Requirements and Business Risks. legalnodes.com.

7. (2026). The Issues Surrounding Digital Sovereignty in 2026 | Netaxis Solutions. netaxissolutions.com.

8. Key Insights on President Trump's New AI Executive Order and Policy Regulatory Implications | Insights | Squire Patton Boggs. squirepattonboggs.com.

9. Comparing EU and U.S. State Laws on AI: A Checklist for Proactive Compliance – Dataversity. dataversity.net.

10. (2026). WEF's January 2026 report on sovereign AI. www3.weforum.org.

11. Great power competition for global leadership in artificial intelligence (AI): Reconstructing AI narratives of the United States, China, and the European Union | Review of International Studies | Cambridge Core. cambridge.org.

12. Timeline for the Implementation of the EU AI Act | AI Act Service Desk. ai-act-service-desk.ec.europa.eu.

13. [2107.03721] Demystifying the Draft EU Artificial Intelligence Act. arxiv.org.

14. [2101.04921] Neural Sequence-to-grid Module for Learning Symbolic Rules. arxiv.org.

15. Smuha, Nathalie A. (2021). From a ‘race to AI’ to a ‘race to AI regulation’: regulatory competition for artificial intelligence. doi.org.