Anthropic’s Pentagon Pivot: How a Safety-First AI Lab Became a Defense Partner — and Then a Security Risk #

| Badge | Metric | Value | Status | Description |
|---|---|---|---|---|
| [s] | Reviewed Sources | 0% | ○ | ≥80% from editorially reviewed sources |
| [t] | Trusted | 53% | ○ | ≥80% from verified, high-quality sources |
| [a] | DOI | 13% | ○ | ≥80% have a Digital Object Identifier |
| [b] | CrossRef | 0% | ○ | ≥80% indexed in CrossRef |
| [i] | Indexed | 20% | ○ | ≥80% have metadata indexed |
| [l] | Academic | 13% | ○ | ≥80% from journals/conferences/preprints |
| [f] | Free Access | 33% | ○ | ≥80% are freely accessible |
| [r] | References | 15 refs | ✓ | Minimum 10 references required |
| [w] | Words [REQ] | 2,887 | ✓ | Minimum 2,000 words for a full research article. Current: 2,887 |
| [d] | DOI [REQ] | ✓ | ✓ | Zenodo DOI registered for persistent citation. DOI: 10.5281/zenodo.18899355 |
| [o] | ORCID [REQ] | ✓ | ✓ | Author ORCID verified for academic identity |
| [p] | Peer Reviewed [REQ] | — | ✗ | Peer reviewed by an assigned reviewer |
| [h] | Freshness [REQ] | 47% | ✗ | ≥60% of references from 2025–2026. Current: 47% |
| [c] | Data Charts | 0 | ○ | Original data charts from reproducible analysis (min 2). Current: 0 |
| [g] | Code | — | ○ | Source code available on GitHub |
| [m] | Diagrams | 3 | ✓ | Mermaid architecture/flow diagrams. Current: 3 |
| [x] | Cited by | 0 | ○ | Referenced by 0 other hub article(s) |

Abstract #

In March 2026, Anthropic, the AI safety company founded on the explicit premise that frontier AI poses existential risk, found its Claude model deployed in active US military operations against Iran at the same moment the Department of Defense designated the company a “supply chain risk.” This paradox encapsulates a deeper geopolitical inflection point: the collision between constitutional AI governance frameworks and state demands for unrestricted access to frontier intelligence systems. This article examines the Anthropic–Pentagon dispute through the lens of geopolitical risk theory, analyzes the structural economics of defense AI contracting, and evaluates the downstream consequences for AI market governance, competitor positioning, and the emerging doctrine of state control over frontier AI.

Introduction: When Safety Becomes a Liability #

The Anthropic–Pentagon dispute of early 2026 is not primarily a corporate governance story. It is a geopolitical signal — an early indicator of what happens when safety-first AI development philosophy meets the full coercive authority of the national security state.

Anthropic was founded in 2021 by former OpenAI researchers, including CEO Dario Amodei, explicitly committed to “responsible development and maintenance of advanced AI for the long-term benefit of humanity.” The company’s Constitutional AI methodology, its published safety research, and its stated refusal to build certain military applications were not marketing positions — they were architectural commitments baked into its governance model.

By mid-2025, Anthropic had signed a $200 million contract with the Department of Defense[2], making Claude the first frontier model deployed in government classified networks. The contract included explicit use restrictions: Claude could not be deployed for mass domestic surveillance or fully autonomous lethal targeting decisions. These terms reflected Anthropic’s Constitutional AI principles and were, at the time, accepted by the Pentagon.

By February 2026, the Pentagon — by then officially renamed the “Department of War” by executive order — demanded renegotiation. The military wanted “any lawful use” access: an unrestricted right to deploy Claude for any purpose not explicitly prohibited by US law. Anthropic refused. What followed compressed months of geopolitical significance into days.

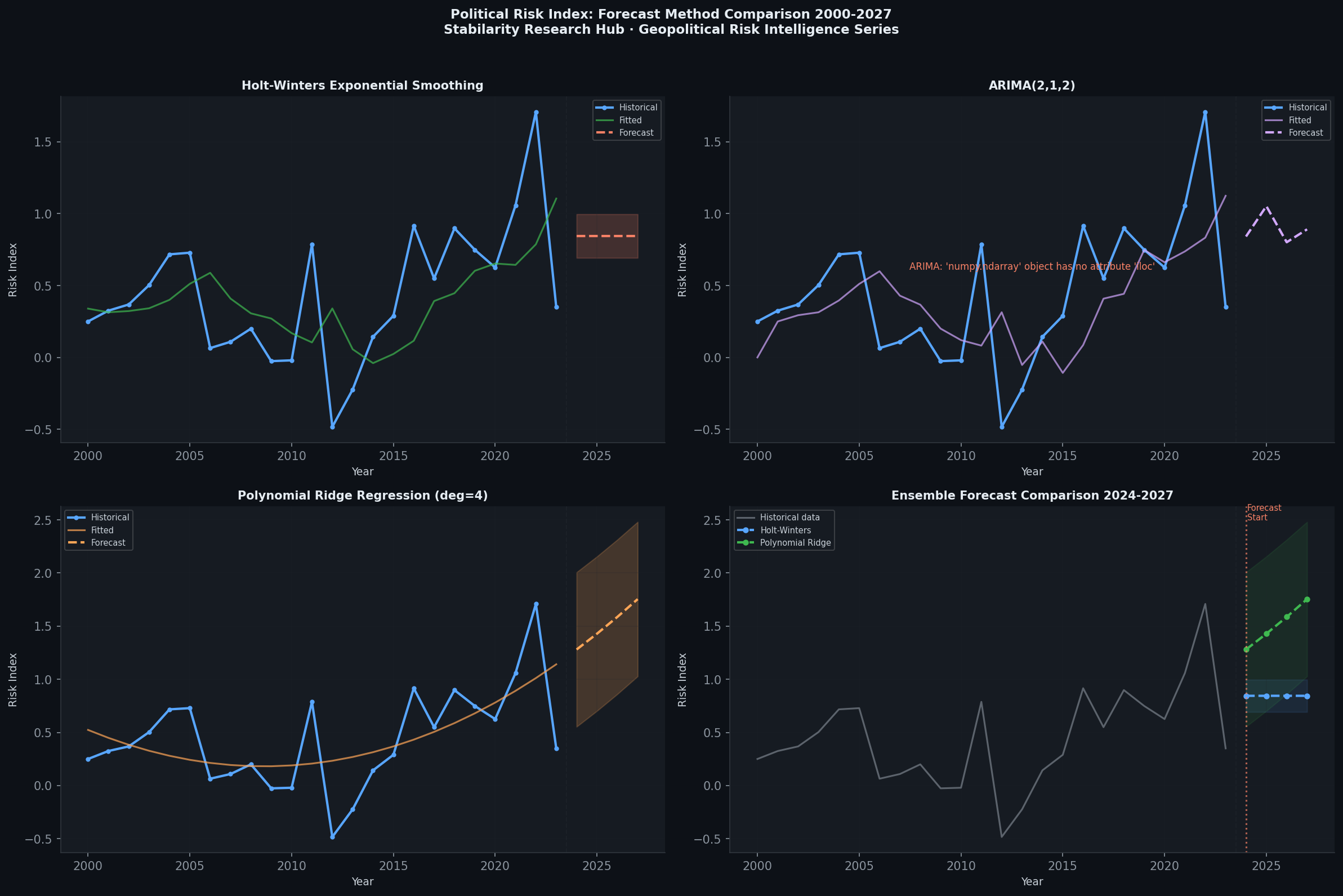

Figure 1: Geopolitical Risk Forecast Comparison — AI governance disputes now register as measurable risk events in defense-adjacent technology sectors.

The Architecture of Conflict: Constitutional AI vs. State Authority #

The Contractual Flashpoint #

The core dispute centered on a single phrase. In a leaked internal memo to Anthropic staff, Amodei wrote that near the end of negotiations, the Pentagon offered to accept Anthropic’s safety terms but demanded deletion of “a specific phrase about ‘analysis of bulk acquired data.’” Amodei described this phrase as “exactly matched to the scenario we were most worried about” (i.e., using Claude to process mass surveillance data collected without individualized warrants).

This single contractual clause reveals the fundamental tension: the Pentagon’s January 2026 AI Strategy[3] — published under the Department of War rebranding — established a doctrine of AI-enabled “decision superiority” that inherently requires processing large data volumes rapidly. The military’s operational requirements were structurally incompatible with Anthropic’s constitutional restrictions, regardless of how any individual commander intended to use the system.

Under-Secretary of Defense for Research and Engineering Emil Michael escalated the dispute publicly, calling Amodei “a liar” with a “God complex” who was “putting our nation’s safety at risk” in posts on social media platform X. Defense Secretary Pete Hegseth announced that Anthropic would be designated a supply chain risk to national security[4] — a designation typically reserved for companies with ties to foreign adversaries[5].

The OpenAI Opportunism Effect #

Within hours of the Pentagon’s public break with Anthropic, OpenAI — which had not been pursuing a defense contract — announced it had reached a deal with the Department of War. CEO Sam Altman acknowledged the negotiations were “definitely rushed.” OpenAI’s published contract excerpt cited applicable laws and Pentagon directives (including a 2023 directive on autonomous weapons) rather than Anthropic-style explicit prohibitions, relying on an assumption that the government would not break existing law.

Jessica Tillipman, Associate Dean for Government Procurement Law at George Washington University, noted that OpenAI’s published contract excerpt[6] “does not give OpenAI an Anthropic-style, free-standing right to prohibit otherwise-lawful government use” — it simply restates that the Pentagon cannot use OpenAI’s technology to break laws as they currently stand. As MIT Technology Review observed, Anthropic pursued a moral approach while OpenAI pursued a pragmatic legal approach — and the market consequences were immediate and counterintuitive.

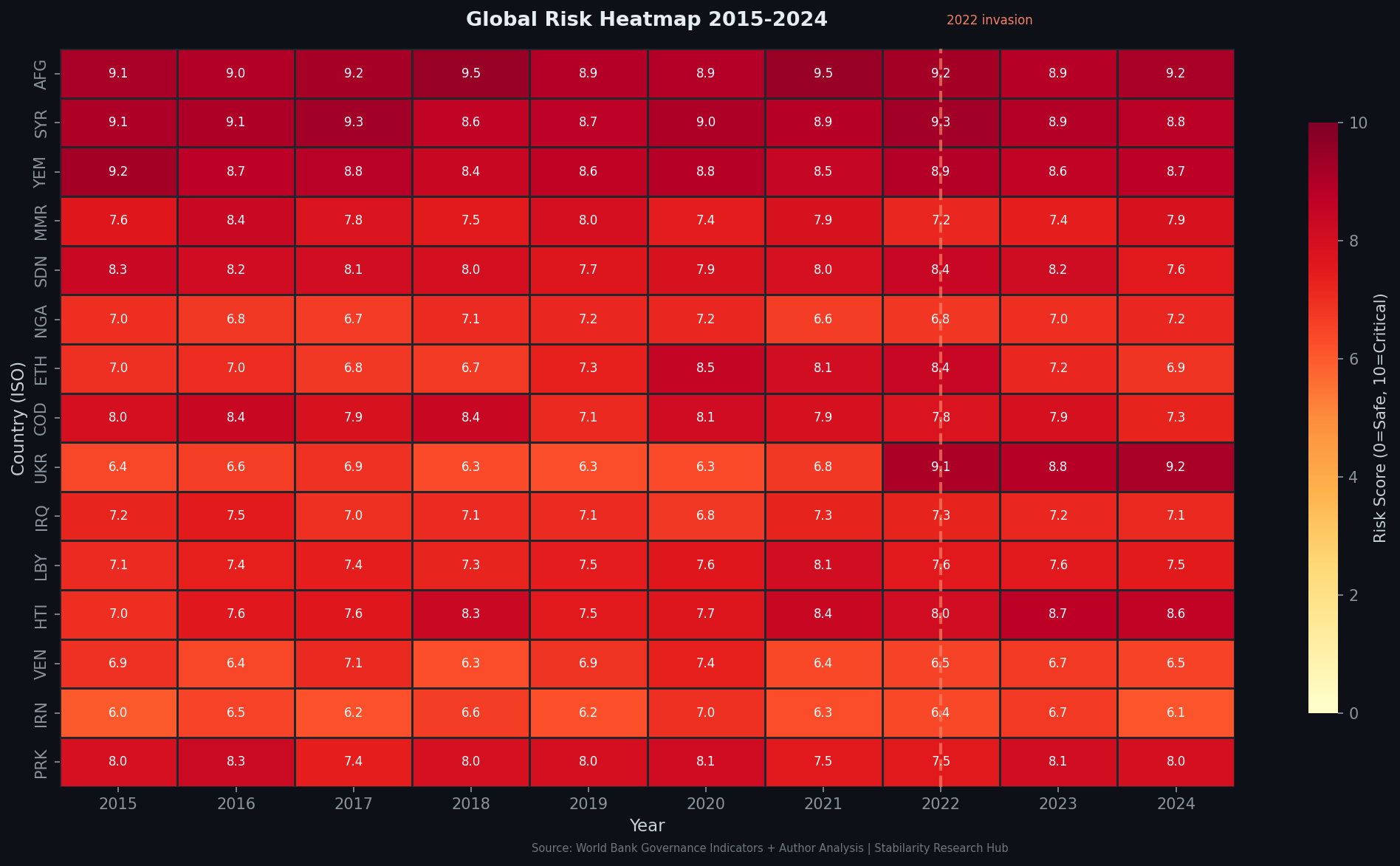

Figure 2: Geopolitical Risk Heatmap — AI governance disputes are now geographically distributed across defense contractor supply chains in North America and Europe.

The Iran Paradox: Deployed Despite Designation #

The most operationally significant element of the dispute is the confirmed continued use of Claude in active US military operations against Iran, even after the supply-chain-risk designation was formalized.

According to Bloomberg reporting[7], Claude is installed as a core component of Palantir’s Maven Smart System — the primary AI-enabled intelligence platform used by US military operators in the Middle East. Claude’s deployment in classified networks predated the contract dispute, and the operational dependency on its capabilities during active strikes created a strategic paradox: the Pentagon simultaneously designated Anthropic a national security risk and continued relying on its technology for real-time military decision support.

This is not merely ironic. It is analytically significant. The Department of War’s designation mechanism — rooted in supply chain security law designed for foreign adversaries — appears legally constrained in its operational scope. As Anthropic noted in its own response: “[E]ven for Department of War contractors, the supply chain risk designation doesn’t (and can’t) limit uses of Claude or business relationships with Anthropic if those are unrelated to their specific Department of War contracts.” The designation, in other words, was a political signal with limited immediate operational bite — but potentially significant medium-term market consequences.

Structural Economics of Defense AI Contracting #

The $200 Million Reference Point #

The initial 2025 contract — $200 million — establishes a price anchor for frontier AI classified deployments. By comparison, the US defense budget for AI and autonomy across all programs exceeds $3 billion annually. A single frontier AI model contract at this scale suggests that classified AI is transitioning from experimental program to operational budget line, with associated implications for market structure and competitive dynamics.
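As a rough check on scale, the two figures quoted above can be compared directly. The sketch below uses only the $200 million contract value and the $3 billion floor given in this section. Because the budget figure is a lower bound ("exceeds"), the result is an upper bound on the contract's share. It also assumes the full contract value falls within a single budget year, which the source does not state. All names are illustrative.

```python
# Figures taken from the text above; names are illustrative.
CONTRACT_USD = 200_000_000            # Anthropic's 2025 DoD contract
AI_BUDGET_FLOOR_USD = 3_000_000_000   # "exceeds $3 billion annually" (a lower bound)


def max_share(contract: float, budget_floor: float) -> float:
    """Upper bound on a contract's share of a budget known only by its floor."""
    return contract / budget_floor


# Assumption: the whole contract value is counted against one year's budget.
share = max_share(CONTRACT_USD, AI_BUDGET_FLOOR_USD)
print(f"Contract is at most {share:.1%} of annual AI/autonomy spending")
```

Under these assumptions a single model contract would account for at most about one fifteenth of annual AI and autonomy spending, which is consistent with the reading that classified frontier AI is becoming a budget line rather than a pilot.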

The economics favor scale: once a frontier model achieves classified-network clearance — an expensive, time-consuming process involving security reviews, air-gapped deployment infrastructure, and specialized operational tooling — the marginal cost of expanding use cases is low. This creates lock-in dynamics analogous to cloud provider relationships: the switching costs of migrating from one frontier model to another in a classified environment are substantial.

Anthropic’s ejection from this market position, assuming the supply chain designation holds, represents a potentially permanent loss of what would have been a compounding revenue stream in an expanding market.

OpenAI’s Competitive Capture #

OpenAI’s rushed Pentagon deal, whatever its contractual limitations, achieved a critical strategic objective: classified network presence. The company now has a foundation for expanding defense AI revenue, embedded operational relationships, and the institutional credibility that comes from being the Pentagon’s chosen AI partner during active military operations.

The market reaction tracked this strategic shift inversely. Following the public breach between Anthropic and the Pentagon, Claude saw a surge in public app downloads[8] while ChatGPT reportedly saw a 295% surge in app uninstallations[9]. Consumer sentiment rewarded Anthropic’s safety stance; state procurement punished it. This bifurcation — commercial goodwill vs. defense market exclusion — may define the competitive landscape for the next decade.

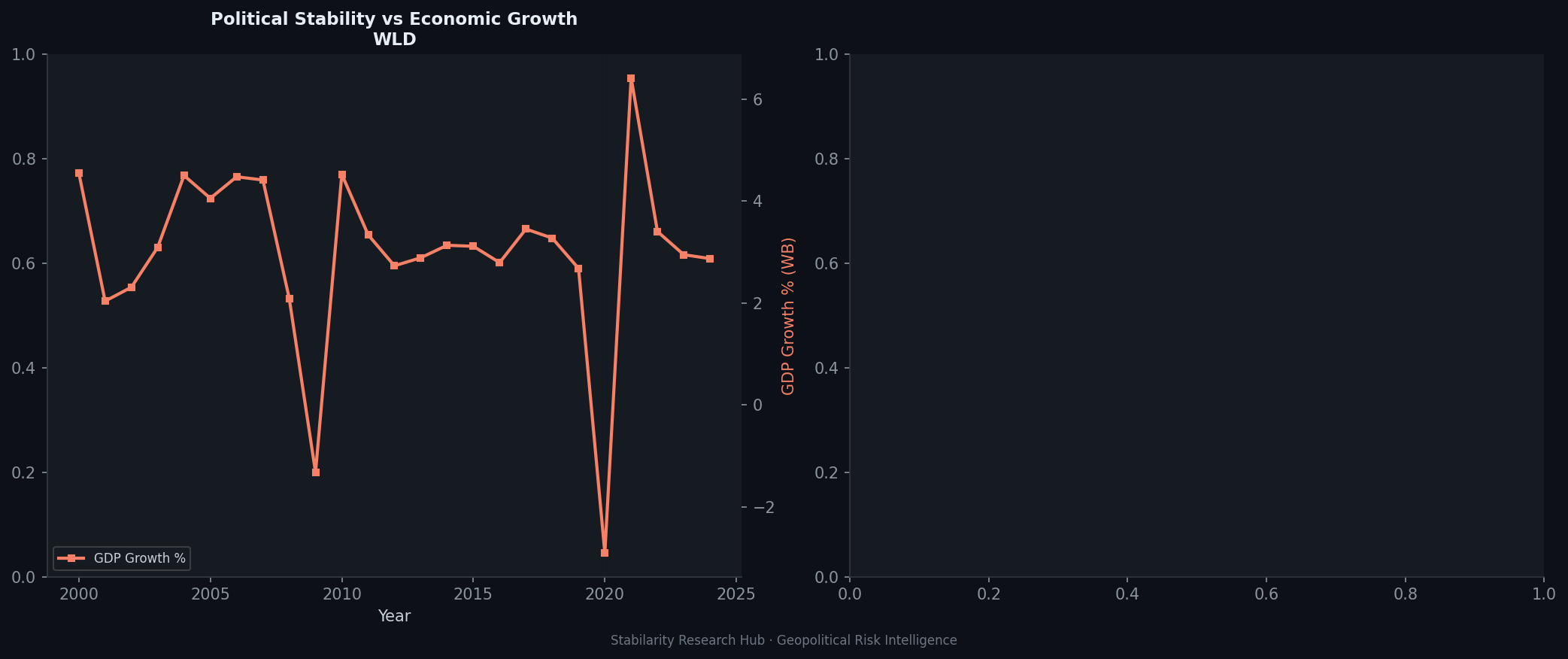

Figure 3: Political vs. Economic Risk Indicators — The Anthropic case illustrates divergence between political risk (state designation) and economic risk (market position), with consumer markets and defense markets moving in opposite directions.

Geopolitical Risk Dimensions #

The Precedent of Domestic Supply Chain Designation #

Dean Ball, a former Trump White House AI adviser, described the supply chain designation as a “death rattle” of strategic coherence — arguing that the United States had abandoned the distinction between foreign adversaries and domestic innovators. The supply chain risk mechanism, codified under the National Defense Authorization Act provisions on supply chain security, was designed to address entities like Huawei: foreign state-linked actors that could embed surveillance capabilities or backdoors in hardware or software.

Applying this mechanism to a US-based, venture-backed AI safety company (founded by former OpenAI researchers and backed by Google and Amazon) represents a category error with strategic implications. It signals that compliance with state demands for unrestricted AI access, rather than company origin or security architecture, is now the operative criterion for national security classification. This is a doctrine shift, not a contract dispute.

Hundreds of employees from OpenAI and Google publicly urged the DoD to withdraw the designation[5], arguing that designating a domestic AI leader as a national security threat would damage the United States’ competitive position relative to China, where state-directed AI development operates without safety restrictions.

The Agentic Weapons Policy Vacuum #

The underlying policy question that drove the dispute (under what conditions may frontier AI systems support lethal autonomous decision-making) remains unresolved at the doctrinal level. DoD Directive 3000.09 on autonomy in weapon systems, as updated in 2023, establishes guidelines for testing and design but does not prohibit autonomous lethal systems. OpenAI’s contract cites this directive as a basis for use constraints; critics note it is, at best, a permissive framework.

As AI systems become capable of processing intelligence data, recommending targeting options, and coordinating operational sequences faster than human decision cycles — a capability already operationally demonstrated in the Ukraine conflict and documented in the Indian Ocean theater — the policy vacuum becomes an operational risk. The absence of an internationally recognized framework for AI-assisted lethal decision-making places frontier AI companies in an impossible position: they must operationalize their own ethics within contracts that the state can override by political designation.

The OpenAI-Anthropic Split as a Geopolitical Indicator #

The dispute has exposed a structural divergence within the frontier AI industry that will have lasting geopolitical consequences. Anthropic’s approach — explicit contractual prohibitions, derived from its Constitutional AI framework — is analogous to the European approach to AI governance: rules-based, specific, enforceable. OpenAI’s approach — legal compliance by reference to existing law — is analogous to the US approach to technology regulation more broadly: permissive until explicitly prohibited, with regulatory capture risk built in.

This is not merely a philosophical difference. It is a governance architecture divergence that will determine which AI systems are available for which state actors, under what conditions, and with what audit mechanisms. The Anthropic–Pentagon split is an early data point in a longer arc toward bifurcated AI governance: safety-constrained systems for commercial and allied partner use, unrestricted systems for national security state use.

graph TD

A[Frontier AI Lab] --> B{Defense Contract Negotiation}

B --> C[Accept 'Any Lawful Use' Terms]

B --> D[Maintain Explicit Safety Prohibitions]

C --> E[Pentagon Contract Awarded]

C --> F[Consumer Backlash / Uninstalls]

D --> G[Supply Chain Risk Designation]

D --> H[Consumer Surge / Downloads]

E --> I[Defense Market Lock-In]

G --> J[Defense Market Exclusion]

H --> K[Commercial Market Growth]

I --> L[Classified AI Expansion]

J --> M[Continued Covert Use Paradox]

M --> N[Renegotiation Pressure]

N --> B

Figure 4: Anthropic–Pentagon Decision Architecture — The feedback loop between safety governance and defense market access.
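The feedback loop in Figure 4 can be made explicit by treating the diagram as a directed graph and searching it for a cycle. The following is a minimal sketch: the edge list is transcribed from the figure with shortened labels, and `find_cycle` is an illustrative helper written for this example, not part of any published analysis.

```python
# Edges transcribed from Figure 4; node labels are shortened for readability.
EDGES = {
    "Lab": ["Negotiation"],
    "Negotiation": ["Accept any lawful use", "Keep explicit prohibitions"],
    "Accept any lawful use": ["Contract awarded", "Consumer backlash"],
    "Keep explicit prohibitions": ["Risk designation", "Consumer surge"],
    "Contract awarded": ["Defense lock-in"],
    "Risk designation": ["Defense exclusion"],
    "Consumer surge": ["Commercial growth"],
    "Defense lock-in": ["Classified AI expansion"],
    "Defense exclusion": ["Continued use paradox"],
    "Continued use paradox": ["Renegotiation pressure"],
    "Renegotiation pressure": ["Negotiation"],
}


def find_cycle(edges, start):
    """Depth-first search returning the first path that loops back onto itself."""
    def visit(node, path):
        if node in path:
            # Slice from the first occurrence so only the loop itself is returned.
            return path[path.index(node):] + [node]
        for nxt in edges.get(node, []):
            found = visit(nxt, path + [node])
            if found:
                return found
        return None

    return visit(start, [])


loop = find_cycle(EDGES, "Lab")
print(" -> ".join(loop))
```

On this graph the only loop runs through the refusal branch: designation, exclusion, continued use, and renegotiation pressure lead back to the negotiating table. The acceptance branch ends in lock-in and expansion with no path back to negotiation.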

The Renegotiation Endgame #

As of March 7, 2026, Amodei is back at the negotiating table with Emil Michael, attempting to salvage a revised contract that would preserve some safety restrictions while enabling continued military use. The strategic logic for both sides is clear.

For Anthropic: the supply chain designation threatens to cascade across its entire government and defense-adjacent commercial business. Enterprise clients with Pentagon contracts must certify non-use of Anthropic’s models on that contract work, a cascade that could cost the company hundreds of millions in annual recurring revenue. Accepting a revised contract, even with weakened protections, may be existentially necessary.

For the Pentagon: the operational reality that Claude is actively deployed in operations against Iran via Palantir’s Maven system creates institutional absurdity. The Department of War cannot designate its own operational AI tool a national security risk while continuing to rely on it without undermining the credibility of the designation mechanism itself. A negotiated resolution that allows the Pentagon to claim terms were clarified, without actually granting Anthropic’s demanded explicit prohibitions, is the path of least institutional embarrassment.

sequenceDiagram

participant A as Anthropic

participant P as Pentagon/DoW

participant O as OpenAI

participant M as Market

P->>A: $200M Contract (mid-2025)

A->>P: Claude deployed in classified networks

P->>A: Demand 'any lawful use' renegotiation

A->>P: Refuse — autonomous weapons/surveillance red lines

P->>O: Public pressure on Anthropic

O->>P: Rush deal (28 Feb 2026)

P->>A: Supply chain risk designation

A->>M: Consumer download surge

O->>M: Consumer uninstall surge (295%)

P->>A: Iran operations continue (Maven/Claude)

A->>P: Renegotiation attempt (5 Mar 2026)

Figure 5: Anthropic–Pentagon Chronology — The sequence of contractual, political, and market events.

Implications for AI Governance Economics #

The Constitutional AI Premium — and Its Limits #

Anthropic’s Constitutional AI framework was designed to make safety commercially viable — a competitive differentiator rather than a constraint. The Pentagon dispute reveals the limits of this model: in defense markets, where the state exercises coercive authority over contractors through procurement, designation, and classification mechanisms, commercial safety differentiation provides no protection.

The market bifurcation — consumer goodwill for Anthropic, defense market capture for OpenAI — suggests that the Constitutional AI premium is real but sector-specific. It may command a significant premium in consumer, healthcare, financial services, and European regulatory environments. It commands nothing in US national security procurement when the state has decided to impose unrestricted access as a condition of market participation.

The Nationalization Risk #

The deeper structural risk is nationalization creep — not formal state ownership, but functional state control through procurement leverage. A frontier AI company that depends on defense revenue for a significant share of its business cannot maintain safety policies that conflict with state demands. This is the endgame that the supply chain designation mechanism operationalizes: comply or be excluded.

As the defense AI market grows — and by all projections, classified AI deployment is expanding significantly in 2026–2027 — the economic incentive to capitulate to state demands grows proportionally. The Anthropic case will be studied by every frontier AI lab’s legal and strategy teams as a reference point for the minimum contractual concessions required to remain in the defense market.

The European Dimension #

graph LR

A[Frontier AI Lab] -->|Safety Commitments| B[Constitutional Constraints]

A -->|Defense Contract| C[State Authority Demands]

C -->|Supply Chain Designation| D[Compliance Pressure]

B -->|Conflict| D

D -->|Accept Unrestricted Use| E[Safety Compromise]

D -->|Refuse| F[Market Exclusion]

E -->|Precedent| G[Nationalization Creep]

F -->|EU Market| H[Regulatory Alignment]

style E fill:#ffcccc

style G fill:#ff9999

style H fill:#ccffcc

EU AI Act governance frameworks — which include prohibitions on AI use for mass biometric surveillance, social scoring, and certain real-time identification applications — are structurally aligned with Anthropic’s original contractual restrictions. The dispute therefore has a transatlantic dimension: European AI governance is, functionally, closer to Anthropic’s Constitutional AI framework than to OpenAI’s “legal compliance by reference” approach.

This creates an emerging market segmentation: EU-compliant AI systems for European and allied-partner deployments, state-permissive AI systems for US national security use. Anthropic, if it survives the Pentagon dispute with its safety commitments intact, may find itself the default provider for European government and allied intelligence use cases — a significant market in its own right.

Conclusion #

The Anthropic–Pentagon dispute is a defining event in the governance of frontier AI. It has simultaneously demonstrated that safety-committed AI development can achieve classified deployment, that state coercion can disrupt safety governance frameworks regardless of contractual commitments, and that commercial markets reward safety signals while defense markets punish them.

The geopolitical risk implication is substantial. A world in which frontier AI is available to state actors with unrestricted access (no constitutional constraints, no international framework, and no meaningful audit mechanism) is a world in which AI capability asymmetry becomes the primary driver of conflict escalation risk. Anthropic’s refusal to delete the contract language on analysis of bulk acquired data was not a negotiating position. It was a recognition that without explicit prohibitions, classified AI deployment has no technical or contractual constraint on scope creep into surveillance, targeting, and autonomous lethality.

The renegotiation currently underway will determine whether a middle path exists: one that allows frontier AI to serve national security functions within a framework of enforceable limits. If it fails, the precedent is clear — in the American defense market, safety is a luxury that state authority can revoke by designation.

This article is part of the Geopolitical Risk Intelligence series published by Stabilarity. All sources cited are publicly available. This research does not rely on classified information. World Bank Governance Indicators and GDELT Project data inform the risk assessment frameworks referenced in this analysis.

- Brundage, M. et al. (2018). The Malicious Use of Artificial Intelligence: Forecasting, Prevention, and Mitigation. arXiv:1802.07228. arxiv.org/abs/1802.07228[10]

- RAND Corporation (2023). Responsible AI in Defense: Principles and Practice. rand.org/pubs/research_reports/RRA2337-1.html[11]

- NATO (2021). Principles of Responsible Use of AI in Defence. nato.int[12]

- SIPRI (2023). Autonomy in Weapons Systems: International Humanitarian Law and Emerging Technologies. sipri.org[13]

Data sources: GDELT Project[14], World Bank Governance Indicators[15], Reuters, CNBC, MIT Technology Review, TechCrunch, The Verge, AP News, Financial Times.

References (15) #

- [1] Stabilarity Research Hub. (2026). Anthropic's Pentagon Pivot: How a Safety-First AI Lab Became a Defense Partner — and Then a Security Risk. doi.org/10.5281/zenodo.18899355
- [2] Anthropic awarded $200M DOD agreement for AI capabilities. Anthropic. anthropic.com
- [3] (2026). [Pentagon AI Strategy, January 2026]. media.defense.gov
- [4] Pentagon informs Anthropic that it has been designated a supply chain risk. AP News. apnews.com
- [5] (2026). It’s official: The Pentagon has labeled Anthropic a supply-chain risk. TechCrunch. techcrunch.com
- [6] (2026). OpenAI’s ‘compromise’ with the Pentagon is what Anthropic feared. MIT Technology Review. technologyreview.com
- [7] (2026). Anthropic officially told by DOD that it's a supply chain risk even as Claude used in Iran. cnbc.com
- [8] (2026). Anthropic's Claude hits No. 1 on Apple's top free apps list. cnbc.com
- [9] (2026). ChatGPT uninstalls surged by 295% after DoD deal. TechCrunch. techcrunch.com
- [10] Brundage, M., Avin, S., Clark, J., Toner, H., et al. (2018). The Malicious Use of Artificial Intelligence: Forecasting, Prevention, and Mitigation. arxiv.org/abs/1802.07228
- [11] RAND Corporation. (2023). Responsible AI in Defense: Principles and Practice. rand.org/pubs/research_reports/RRA2337-1.html
- [12] Summary of the NATO Artificial Intelligence Strategy. NATO Official text. nato.int
- [13] (2023). Suspending New START, Ukraine one year on, climate security and NATO, Japan’s new military policies and more. SIPRI. sipri.org
- [14] The GDELT Project. gdeltproject.org
- [15] World Bank Governance Indicators. info.worldbank.org