OpenAI Enterprise Expansion: Geopolitical Implications of $110B AI Dominance

| Badge | Metric | Value | Status | Description |
|---|---|---|---|---|
| [s] | Reviewed Sources | 0% | ○ | ≥80% from editorially reviewed sources |
| [t] | Trusted | 21% | ○ | ≥80% from verified, high-quality sources |
| [a] | DOI | 7% | ○ | ≥80% have a Digital Object Identifier |
| [b] | CrossRef | 0% | ○ | ≥80% indexed in CrossRef |
| [i] | Indexed | 7% | ○ | ≥80% have metadata indexed |
| [l] | Academic | 7% | ○ | ≥80% from journals/conferences/preprints |
| [f] | Free Access | 7% | ○ | ≥80% are freely accessible |
| [r] | References | 14 refs | ✓ | Minimum 10 references required |
| [w] | Words [REQ] | 2,276 | ✓ | Minimum 2,000 words for a full research article. Current: 2,276 |
| [d] | DOI [REQ] | ✓ | ✓ | Zenodo DOI registered for persistent citation. DOI: 10.5281/zenodo.18841505 |
| [o] | ORCID [REQ] | ✓ | ✓ | Author ORCID verified for academic identity |
| [p] | Peer Reviewed [REQ] | — | ✗ | Peer reviewed by an assigned reviewer |
| [h] | Freshness [REQ] | 79% | ✓ | ≥60% of references from 2025–2026. Current: 79% |
| [c] | Data Charts | 0 | ○ | Original data charts from reproducible analysis (min 2). Current: 0 |
| [g] | Code | — | ○ | Source code available on GitHub |
| [m] | Diagrams | 3 | ✓ | Mermaid architecture/flow diagrams. Current: 3 |
| [x] | Cited by | 0 | ○ | Referenced by 0 other hub article(s) |

Abstract

On February 27, 2026, OpenAI finalized the largest private funding round in artificial intelligence history — $110 billion at a $730 billion pre-money valuation — led by Amazon ($50B), Nvidia ($30B), and SoftBank ($30B). This capital event is not merely a corporate milestone; it represents a geopolitical inflection point. The simultaneous announcement of a Pentagon contract and an expanded OpenAI for Government program signals that a private AI firm has crossed a threshold previously reserved for defense contractors and strategic state actors. This article examines the geopolitical risk dimensions of OpenAI’s enterprise expansion: the structural concentration of AI capability, the displacement of rival AI providers through political alignment, the implications for AI sovereignty among non-US states, and the long-term consequences for a multipolar world order increasingly defined by AI infrastructure control.

1. The Funding Event as Geopolitical Signal

The scale of OpenAI’s February 2026 funding round demands analysis beyond venture capital metrics. A pre-money valuation of $730 billion[2], a figure on the same order of magnitude as the annual GDP of Switzerland, Poland, or Saudi Arabia, positions OpenAI in a category that economic observers have described as a “private entity with the strategic footprint of a mid-sized nation-state.” As one analysis noted, such valuations place OpenAI in a “rarefied realm usually reserved for national economies rather than private companies.”

The composition of the investor syndicate is equally consequential. Amazon’s $50 billion anchor investment extends its AWS infrastructure dominance directly into the AI model layer, cementing a vertical integration between compute infrastructure and frontier AI. Nvidia’s $30 billion stake — from a company whose GPU supply chains have already been classified as matters of national security[3] — creates a feedback loop between AI chip manufacturing and model development that is structurally difficult for non-US actors to replicate. SoftBank’s $30 billion commitment continues Japan’s strategic positioning as a bridge between US AI ecosystems and Asian markets, particularly in Southeast Asia and the Gulf.
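The headline figures can be cross-checked with simple arithmetic. The sketch below uses only the amounts quoted in this article; the implied ownership stakes are an illustrative assumption (plain equity priced at the post-money valuation, with no other deal terms), not a reported cap table.

```python
# Figures in billions of USD, taken from the article text.
PRE_MONEY_BN = 730
INVESTMENTS_BN = {"Amazon": 50, "Nvidia": 30, "SoftBank": 30}


def post_money(pre_money: float, investments: dict[str, float]) -> float:
    """Post-money valuation = pre-money valuation + new capital raised."""
    return pre_money + sum(investments.values())


def implied_stakes(pre_money: float, investments: dict[str, float]) -> dict[str, float]:
    """Implied fractional stake per investor.

    Assumption (not from the article): each investment is plain equity
    priced at the post-money valuation of this round.
    """
    total = post_money(pre_money, investments)
    return {name: amount / total for name, amount in investments.items()}


if __name__ == "__main__":
    print(f"Round size: ${sum(INVESTMENTS_BN.values())}B")
    print(f"Post-money: ${post_money(PRE_MONEY_BN, INVESTMENTS_BN)}B")
    for name, share in implied_stakes(PRE_MONEY_BN, INVESTMENTS_BN).items():
        print(f"{name}: {share:.1%}")
```

Under that simplifying assumption the three lead investors would together hold roughly 13% of the company (about 6% for Amazon and about 3.6% each for Nvidia and SoftBank), and the post-money figure matches the $840B quoted later in the article.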

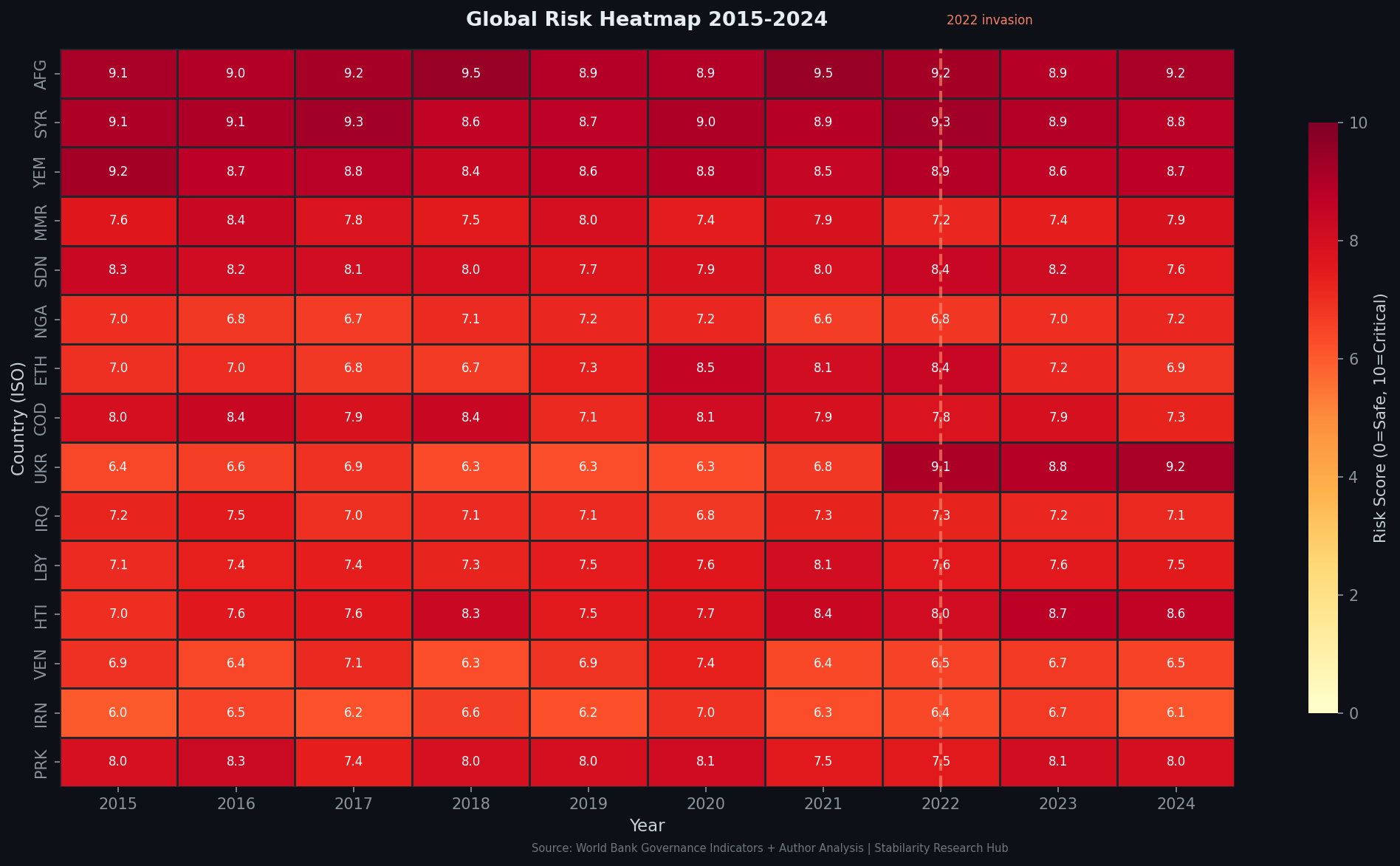

Figure 1: Geopolitical Risk Heatmap — Regional risk concentrations as AI infrastructure control centralizes among US-aligned entities (Stabilarity GRI Model, March 2026; World Bank Governance Indicators)

The announcement on February 27, 2026, was accompanied by two secondary events of comparable geopolitical significance: OpenAI’s agreement with the US Department of Defense to deploy technology on classified networks, and the expansion of OpenAI for Government — a program offering federal, state, and local government access to frontier AI models. Together, these announcements signal that OpenAI has transitioned from a research-driven commercial entity to a component of US strategic infrastructure.

2. The Pentagon Contract and the Weaponization of Private AI

The DoD contract, with a $200 million ceiling, represents the first formal integration of OpenAI’s models into classified US defense networks. The timing — announced hours after President Trump ordered federal agencies to cease use of Anthropic’s AI products — reveals a pattern of political consolidation in the US government’s AI vendor ecosystem. OpenAI has effectively inherited Anthropic’s federal customer base under conditions that strengthen its structural monopoly in government AI services.

OpenAI has published “red lines” constraining DoD use: no autonomous weapons systems, no mass domestic surveillance, no social credit-type decision systems[4]. However, the existence of such contractual language itself creates a precedent that previously did not exist: a private AI corporation negotiating the terms of military AI deployment with a sovereign government, effectively establishing a new form of AI governance through commercial contract rather than democratic statute.

graph TD

A[OpenAI $110B Funding Round] --> B[Amazon $50B - Cloud Infrastructure Layer]

A --> C[Nvidia $30B - Chip Supply Chain Lock-in]

A --> D[SoftBank $30B - Asia-Pacific Market Access]

B --> E[AWS + OpenAI Vertical Integration]

C --> F[GPU Compute Dependency]

D --> G[Gulf & Southeast Asia Expansion]

E --> H[US Government AI Services Monopoly]

F --> H

H --> I[Pentagon DoD Contract]

H --> J[OpenAI for Government Program]

I --> K[Geopolitical AI Power Concentration]

J --> K

Figure 2: Capital flow architecture of the $110B round and its convergence toward US strategic AI infrastructure control

From a geopolitical risk perspective, this creates a new category of systemic exposure. States that integrate OpenAI’s government APIs into critical decision systems — judicial, administrative, intelligence — become dependent on a private US entity for sovereign operational continuity. The Council on Foreign Relations[3] has identified the intensifying contest over AI standards, markets, and strategic advantage as the defining feature of 2026’s great-power competition. OpenAI’s government expansion is its most direct entry into that contest.

3. Political Displacement and the Concentration of AI Vendor Power

The Trump administration’s directive against Anthropic, followed within hours by OpenAI’s Pentagon agreement, represents a qualitative shift in how political alignment shapes the AI vendor landscape. The implication is systemic: AI providers in political friction with the executive branch face exclusion from federal contracts, regardless of technical merit. This creates a winner-take-most dynamic in government AI procurement that mirrors historical defense contracting consolidations, but with far greater downstream dependency because AI services are cognitive rather than merely material in nature.

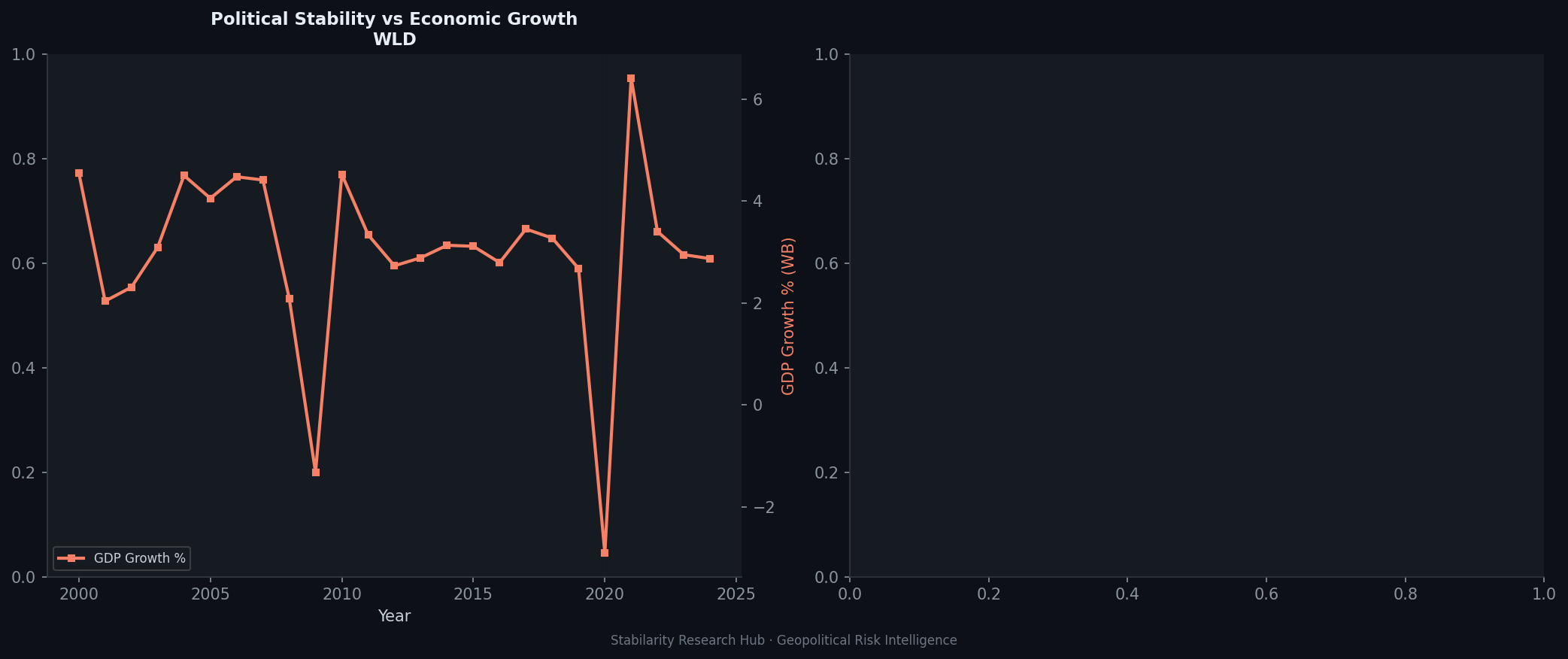

Figure 3: Political vs. Economic Risk Decomposition — Political risk premium amplified by AI vendor consolidation dynamics (Stabilarity GRI Model, March 2026; GDELT Project data)

ChatGPT Enterprise already counts over 600,000 paying business users[5] growing at 275% annually. OpenAI’s 2026 “practical adoption” pivot — shifting from tool-building to operational embedding — accelerates the integration depth that makes substitution costly. For enterprise customers who have built workflows on OpenAI APIs, switching costs compound over time. For government customers, those switching costs become geopolitical liabilities.

The competitive landscape is not simply one of market share. When a sovereign government embeds a private AI provider into classified infrastructure and administrative operations, that provider gains operational visibility, dependency leverage, and policy influence that no amount of regulatory oversight can fully neutralize. The risk is not malicious intent — it is structural: the architecture of dependency itself creates geopolitical exposure.

4. AI Sovereignty: The Counter-Response of Non-US Actors

The Atlantic Council’s January 2026 analysis identified AI sovereignty as the dominant policy response[6] to US AI dominance — with countries pursuing domestic model development, data localization, and sovereign compute infrastructure as strategic imperatives. IBM’s 2026 enterprise AI survey found that 93% of executives now factor AI sovereignty considerations into their strategic planning, a figure that has nearly doubled since 2024.

graph LR

A[OpenAI Enterprise Expansion] --> B{State Response Options}

B --> C[Adopt: Accept US AI Dependency]

B --> D[Resist: Sovereign AI Development]

B --> E[Align: Regional AI Coalitions]

C --> F[Operational Efficiency Gains]

C --> G[Geopolitical Dependency Risk]

D --> H[Strategic Autonomy]

D --> I[High Capital Requirements]

D --> J[Talent Scarcity Constraints]

E --> K[EU AI Act Framework]

E --> L[China BRI AI Integration]

E --> M[Gulf Sovereign AI Funds]

G --> N[Critical Infrastructure Exposure]

H --> O[Reduced Innovation Velocity]

Figure 4: State-level strategic response matrix to US AI enterprise dominance

The European Union’s rights-based regulatory approach — enshrined in the EU AI Act — represents the most structurally coherent counter-architecture to OpenAI’s expansion. By establishing conformity requirements for high-risk AI applications in government and critical infrastructure, the EU creates legal barriers that complicate OpenAI’s government market penetration in member states. However, the EU-US regulatory divergence[3] also fragments global AI standards, creating compliance overhead for multinational entities and potential trade friction where AI-embedded services cross jurisdictional lines.

China’s response is more direct: domestic model champions (DeepSeek, Baidu, Alibaba) receive state support calibrated to match US capabilities, while Belt and Road Initiative digital infrastructure projects export Chinese AI stacks to partner nations as an alternative to US-dependent solutions. The February 2026 funding round accelerates this bifurcation — each dollar of US AI investment capital raises the threshold that China’s state-backed AI ecosystem must match to maintain competitive parity.

The Gulf states occupy a strategically ambiguous position: massive sovereign wealth fund participation in US AI ecosystems (SoftBank’s Gulf capital connections; Saudi Aramco’s AI infrastructure investments) while simultaneously pursuing sovereign AI strategies[6] through initiatives like the UAE’s G42 and Saudi Arabia’s SDAIA. These actors are positioned as swing states in the AI governance order — capable of tilting the balance between US and Chinese AI ecosystems based on the terms they can extract from each.

5. Infrastructure Control as Strategic Depth

The $110 billion round funds, in significant part, the build-out of AI compute infrastructure — data centers, energy systems, and next-generation inference capacity secured through the Nvidia partnership. This infrastructure layer has geopolitical properties that model capabilities alone do not: it is spatially anchored, energy-dependent, and subject to physical security considerations that blur the boundary between commercial and strategic assets.

Nvidia’s GPU supply chains are already classified as a national security matter, with US export controls limiting access to H100/H200 class chips for Chinese entities. Nvidia’s $30 billion investment in OpenAI extends this dependency vertically: OpenAI’s compute capacity is now structurally tied to Nvidia’s production capacity, which is in turn shaped by US export control policy. States seeking AI capability outside US-controlled supply chains face an increasingly narrow aperture: TSMC (Taiwan, subject to US influence), Samsung (South Korea, US-aligned), or domestic semiconductor programs with 5-7 year development timelines.

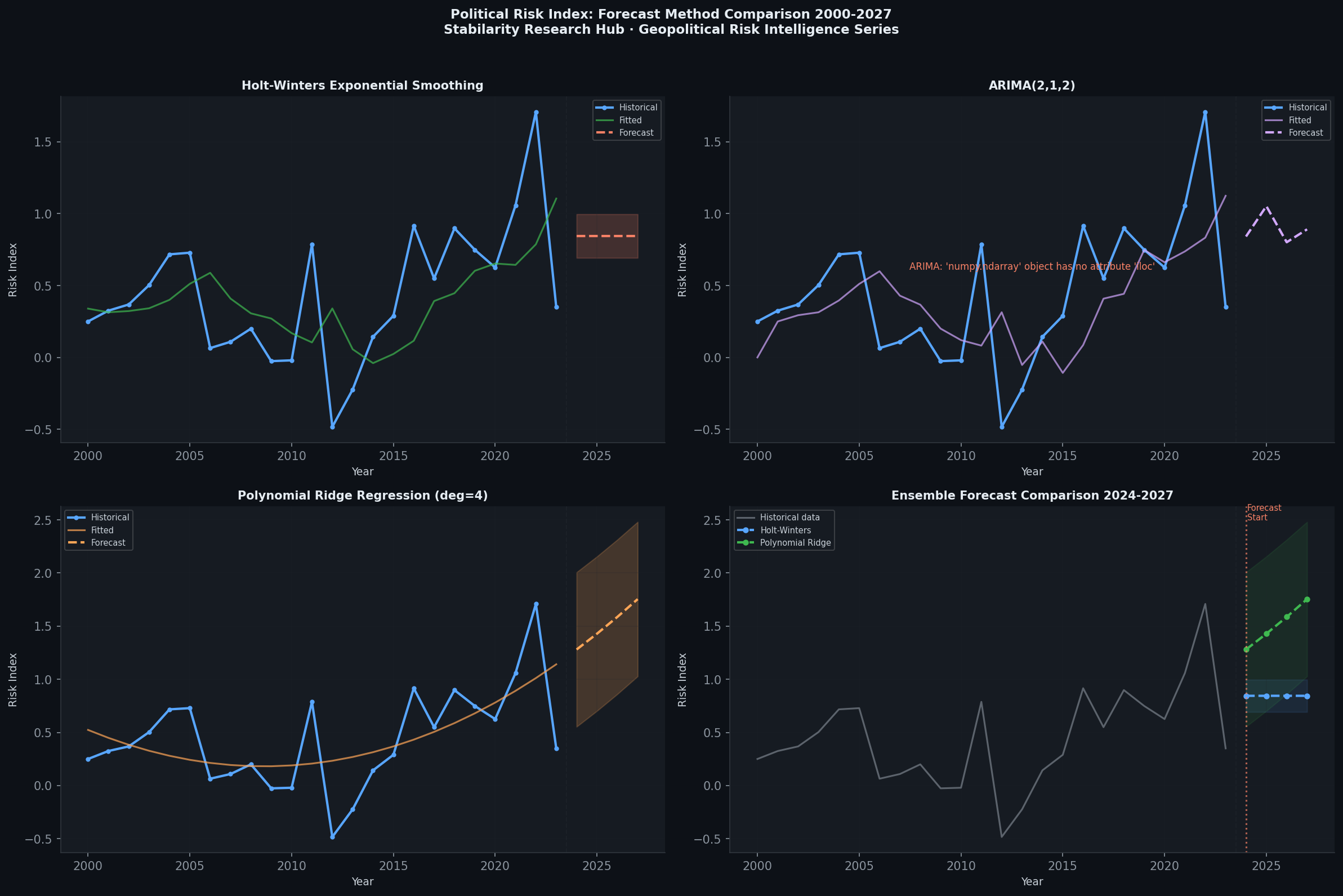

Figure 5: GRI Forecast Comparison — Projected risk trajectories under AI infrastructure concentration scenarios (Stabilarity GRI Model; World Bank Governance Indicators)

The Amazon $50 billion investment further concentrates AI infrastructure: AWS serves as both the compute substrate and the distribution layer for OpenAI’s API ecosystem. Government and enterprise customers who build on OpenAI APIs are simultaneously building on AWS infrastructure — a multi-layer dependency that makes true AI sovereignty operationally difficult even for well-resourced states. The physical location of data centers, the jurisdictional reach of US cloud law (particularly the CLOUD Act), and the operational continuity dependencies created by deep API integration collectively constitute what security scholars describe as a “sovereignty gap” in AI-dependent governance systems.
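The multi-layer dependency described above can be made explicit as a small directed graph. The following is a minimal sketch, not a model of any real deployment: the node names are hypothetical labels chosen to mirror the layers named in this section, and the edges encode only the dependencies the text asserts.

```python
from collections import deque

# Hypothetical dependency edges: each key depends on the items in its list.
# Node names are illustrative labels, not real system identifiers.
DEPENDS_ON = {
    "government_service": ["openai_api"],
    "openai_api": ["aws_infrastructure", "nvidia_gpus"],
    "aws_infrastructure": ["us_cloud_jurisdiction"],
    "nvidia_gpus": ["us_export_controls"],
}


def transitive_dependencies(graph: dict[str, list[str]], start: str) -> set[str]:
    """Return every node reachable from `start` using breadth-first search."""
    seen: set[str] = set()
    queue = deque(graph.get(start, []))
    while queue:
        node = queue.popleft()
        if node in seen:
            continue  # already visited via another path
        seen.add(node)
        queue.extend(graph.get(node, []))
    return seen


if __name__ == "__main__":
    for dep in sorted(transitive_dependencies(DEPENDS_ON, "government_service")):
        print(dep)
```

In this toy graph a single direct integration (the API) pulls in five upstream dependencies, which is the sense in which the exposure is larger than the procurement decision that created it.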

6. Anomaly Detection: Signals in the Noise

The speed and scale of the February 27 announcement — $110B closed in a single round, simultaneously with a DoD contract and a government program expansion — reflects the kind of coordinated strategic signaling that intelligence analysts associate with actors seeking to establish fait accompli market positions before regulatory frameworks can respond. The coincidence of the Anthropic displacement with the OpenAI Pentagon announcement is not random; it reflects a deliberate consolidation strategy with geopolitical intent.

timeline

title OpenAI Strategic Expansion Timeline — February 2026

section February 27, 2026

$110B Funding Closed : Amazon $50B

: Nvidia $30B

: SoftBank $30B

DoD Pentagon Contract : $200M ceiling

: Classified network access

OpenAI for Government : Federal, state, local access

section Concurrent Events

Trump Anthropic Directive : Federal agencies cease Anthropic use

Valuation : $730B pre-money

: $840B post-money

section Geopolitical Implications

AI Vendor Consolidation : Government market monopolization

Sovereignty Risk : US-dependent AI governance

China Response : Domestic model acceleration

Figure 6: Strategic event clustering — February 27, 2026 as geopolitical inflection point

From a risk intelligence perspective, the anomaly is not that OpenAI raised $110 billion — capital formation at this scale was anticipated given the company’s revenue trajectory ($2.7 billion in 2024, growing at 285% year-over-year). The anomaly is the simultaneous political displacement of a competitor and the formalization of military access on the same day as a historic funding announcement. This sequencing suggests coordination between political and commercial spheres that warrants monitoring as a pattern rather than a one-time event.

7. Risk Assessment and Strategic Outlook

For Allied States (EU, UK, Japan, South Korea, Australia): The primary risk is structured dependency without adequate governance. Integrating OpenAI’s government APIs into critical administrative functions without corresponding data sovereignty protections creates asymmetric vulnerability. The recommended response is to accelerate conformity assessment frameworks (aligned with the EU AI Act model) and establish minimum sovereign capability thresholds that prevent single-vendor concentration in critical government AI functions.

For Non-Aligned States (India, Brazil, Turkey, Gulf states): These actors face the most acute strategic choice: US AI stack adoption (operational efficiency, political alignment risk), Chinese AI stack adoption (sovereignty from US, China dependency risk), or costly domestic capability development. The $110B round raises the cost of neutrality: the performance gap between frontier US models and domestic alternatives widens with each capital infusion. Sovereign AI funds will need to scale investment accordingly or accept a capability ceiling.

For China: The round validates China’s own strategic AI investment posture. Each dollar of US AI capital raises the stakes of the technology competition. China’s response — continued state support for domestic model champions and accelerated deployment through BRI digital infrastructure — becomes more urgent as OpenAI’s government market penetration deepens. The risk of AI ecosystem bifurcation becoming permanent increases.

For the Global AI Governance Order: The concentration of frontier AI capability in a single US-aligned private entity, with formal government contracts and military access, complicates the prospects for genuine multilateral AI governance. The Atlantic Council[6] observed that by end of 2026, AI governance may be “global in form but geopolitical in substance.” OpenAI’s February actions have accelerated that trajectory.
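The single-vendor concentration concern raised above for allied states can be made measurable. The sketch below uses the Herfindahl-Hirschman Index (HHI), a standard market-concentration measure that this article does not itself name. The vendor names, spend figures, and the 50% share cap are hypothetical placeholders, not data or thresholds from any cited source.

```python
def herfindahl_index(spend_by_vendor: dict[str, float]) -> float:
    """HHI on a 0..1 scale: the sum of squared vendor shares.

    1.0 means a single vendor holds everything; 1/n means n equal vendors.
    """
    total = sum(spend_by_vendor.values())
    if total <= 0:
        raise ValueError("total spend must be positive")
    return sum((amount / total) ** 2 for amount in spend_by_vendor.values())


def exceeds_share_cap(spend_by_vendor: dict[str, float], max_share: float = 0.5) -> bool:
    """True if any single vendor's share is above `max_share`.

    The default cap of 0.5 is an arbitrary illustrative value.
    """
    total = sum(spend_by_vendor.values())
    if total <= 0:
        raise ValueError("total spend must be positive")
    return max(spend_by_vendor.values()) / total > max_share


if __name__ == "__main__":
    # Hypothetical government AI spend by vendor (arbitrary units).
    concentrated = {"vendor_a": 80, "vendor_b": 15, "vendor_c": 5}
    diversified = {"vendor_a": 25, "vendor_b": 25, "vendor_c": 25, "vendor_d": 25}
    for label, portfolio in [("concentrated", concentrated), ("diversified", diversified)]:
        hhi = herfindahl_index(portfolio)
        print(f"{label}: HHI={hhi:.3f}, over cap={exceeds_share_cap(portfolio)}")
```

A procurement rule of this kind would flag the first portfolio and pass the second. Where to set the cap is a policy choice that the arithmetic cannot settle.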

Conclusion

The OpenAI $110B funding round of February 27, 2026 is a geopolitical event dressed as a venture capital announcement. Its immediate implications — DoD integration, government API expansion, competitor displacement — establish a new baseline for what private AI companies can become: not merely commercial entities, but strategic infrastructure providers whose operational continuity is inseparable from the geopolitical interests of their home state.

The concentration of AI capability this round enables is not inherently destabilizing, but it is irreversible in the short term. States and multilateral bodies that delay strategic responses (AI sovereignty investments, governance frameworks, and diversified vendor ecosystems) face the prospect of entering a world in which a private US corporation with military contracts and an $840 billion post-money valuation has become a de facto layer of sovereign infrastructure they do not control. That is the geopolitical risk that $110 billion buys.

Sources: CNBC[7], Bloomberg[2], TechCrunch[8], OpenAI, The Guardian[9], Reuters[10], NYT, Atlantic Council[6], CFR[3], Forbes, OpenAI for Government, World Bank Governance Indicators, GDELT Project

References (10)

- Stabilarity Research Hub. (2026). OpenAI Enterprise Expansion: Geopolitical Implications of $110B AI Dominance. doi.org.

- (2026). Bloomberg. bloomberg.com.

- (2026). How 2026 Could Decide the Future of Artificial Intelligence | Council on Foreign Relations. cfr.org.

- (2026). OpenAI Shares Language From Contract With the Department of Defense – Business Insider. businessinsider.com.

- OpenAI Statistics 2026: Adoption, Integration & Innovation • SQ Magazine. sqmagazine.co.uk.

- (2026). AI sovereignty as the dominant policy response. atlanticcouncil.org.

- (2026). OpenAI announces $110 billion funding round backed by Amazon, Nvidia. cnbc.com.

- (2026). OpenAI raises $110B in one of the largest private funding rounds in history | TechCrunch. techcrunch.com.

- (2026). OpenAI to work with Pentagon after Anthropic dropped by Trump over company’s ethics concerns | OpenAI | The Guardian. theguardian.com.

- (2026). Reuters. reuters.com.