The OpenAI-Pentagon-NATO Triangle: When AI Labs Become Defense Contractors

Abstract

The week of February 27–March 4, 2026 marked a structural inflection point in the geopolitics of artificial intelligence: OpenAI signed a classified-environment deployment agreement with the U.S. Department of Defense, then within days was reported to be considering a contract covering NATO’s unclassified networks. In the same window, Anthropic was designated a national security “supply-chain risk” by Defense Secretary Pete Hegseth after refusing to comply with Pentagon demands regarding autonomous weapons use. This article analyzes the emerging OpenAI-Pentagon-NATO triangle as a case study in AI lab militarization, examining the strategic logic, governance implications, risk topology, and the structural precedents being set for the global AI defense market. Using geopolitical risk frameworks and game-theoretic modeling, we argue that this triangle represents not an aberration but the early institutionalization of a new market structure, the AI defense contractor, with profound consequences for safety, competition, and multilateral AI governance.

Introduction: A New Market Category Emerges

For most of its history, the AI industry organized itself around a relatively stable taxonomy: frontier labs developing foundation models, enterprise platforms deploying them, and governments regulating the stack. That taxonomy collapsed in the final week of February 2026.

OpenAI announced a deal with the U.S. Department of Defense (rebranded under the Trump administration as the “Department of War”) allowing its models to be deployed in classified environments. The announcement came within 24 hours of Anthropic’s designation as a supply-chain risk by Secretary Pete Hegseth. That designation, which triggered a government-wide ban on Anthropic products, followed Anthropic’s refusal to remove safety guardrails blocking autonomous weapons use.

By March 4, Reuters reported that OpenAI was in discussions to extend its defense footprint to NATO’s unclassified networks, with NATO’s own Task Force Maven already operational and enforcing human-in-the-loop oversight protocols.

These three data points — Pentagon deal, Anthropic ban, NATO expansion — constitute not three separate events but a single systemic transition: the emergence of the AI lab as defense contractor.

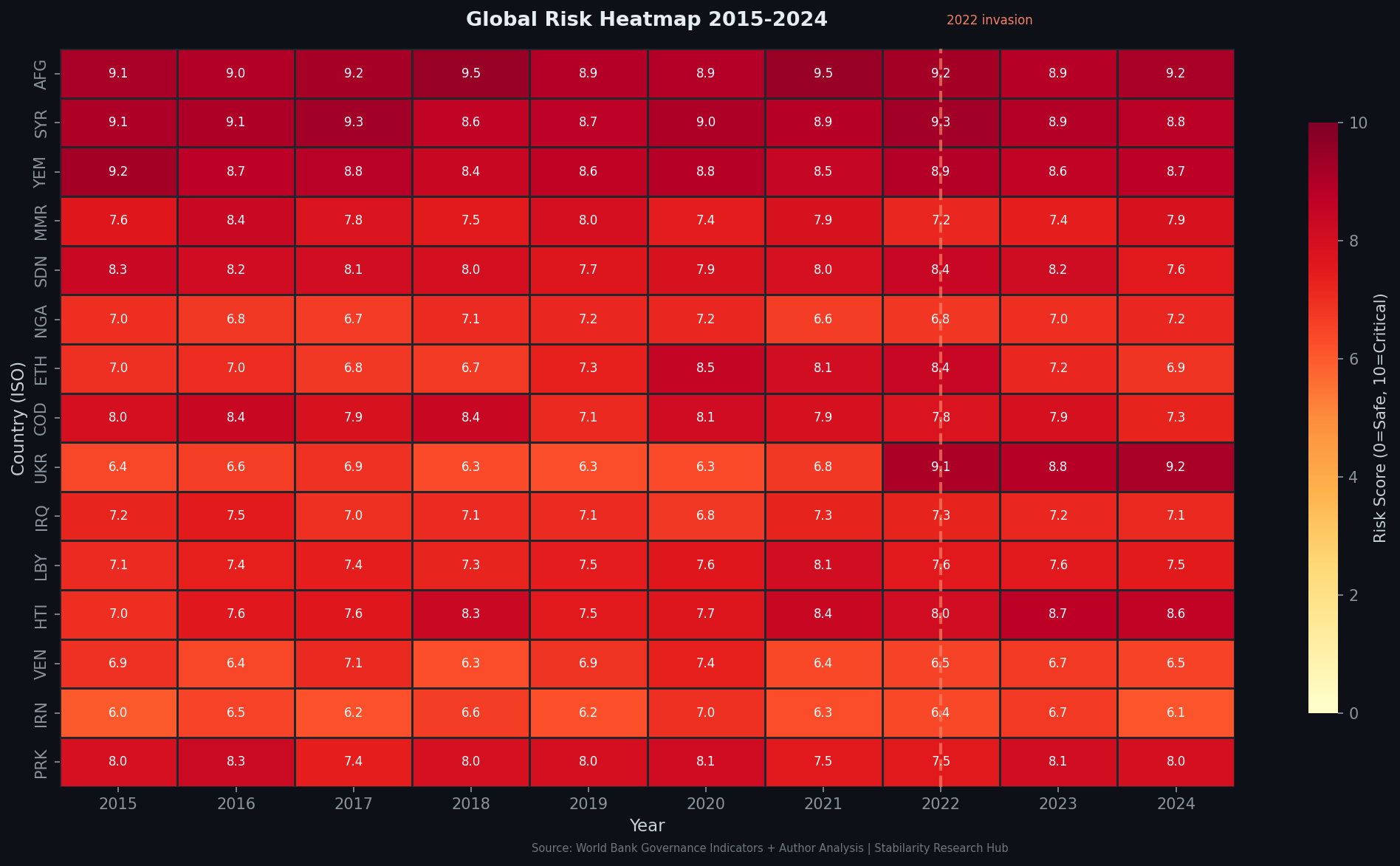

Figure 1: Geopolitical risk heatmap showing AI-defense sector concentration and regulatory divergence across major blocs (Geopolitical Risk Intelligence API, March 2026)

The Pentagon Deal: Architecture and Governance Red Lines

OpenAI’s agreement with the Department of Defense contains explicit prohibition clauses across three domains: mass domestic surveillance, autonomous weapon systems, and “high-stakes automated decisions” such as social credit systems. The company’s public disclosure emphasized a “multi-layered” safety architecture:

- Cloud-based deployment — models remain on OpenAI infrastructure, not transferred to DoD custody

- Cleared personnel overlay — OpenAI-employed staff with security clearances remain in the operational loop

- Retained safety stack — OpenAI retains “full discretion” over its safety systems

- Contractual protections — explicit legal clauses encoding the red lines
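The four layers above can be read as independent checks that a request must clear. The minimal Python sketch below assumes a hypothetical `UseCategory` taxonomy and `Request` record; neither is drawn from the actual agreement, whose technical details are not public. It illustrates one structural point: only the contractual layer looks at *what* the request is for, so the layers together constrain only what the classification step lets through.

```python
from dataclasses import dataclass
from enum import Enum, auto


class UseCategory(Enum):
    # Hypothetical taxonomy mirroring the three stated red lines plus a permitted bucket.
    MASS_DOMESTIC_SURVEILLANCE = auto()
    AUTONOMOUS_WEAPONS = auto()
    HIGH_STAKES_AUTOMATED_DECISION = auto()
    PERMITTED_ANALYSIS = auto()


# Categories the contract is described as prohibiting (contractual layer).
PROHIBITED = {
    UseCategory.MASS_DOMESTIC_SURVEILLANCE,
    UseCategory.AUTONOMOUS_WEAPONS,
    UseCategory.HIGH_STAKES_AUTOMATED_DECISION,
}


@dataclass
class Request:
    category: UseCategory            # assigned upstream by whoever classifies the use
    runs_on_vendor_cloud: bool       # cloud-based deployment layer
    cleared_reviewer_present: bool   # cleared-personnel layer
    safety_filter_passed: bool       # retained safety-stack layer


def evaluate(req: Request) -> tuple[bool, list[str]]:
    """Return (allowed, blocking_layers).

    Every layer is checked independently, so the result lists all layers
    that would refuse the request, not only the first one.
    """
    blocked = []
    if req.category in PROHIBITED:
        blocked.append("contractual")
    if not req.runs_on_vendor_cloud:
        blocked.append("cloud-deployment")
    if not req.cleared_reviewer_present:
        blocked.append("cleared-personnel")
    if not req.safety_filter_passed:
        blocked.append("safety-stack")
    return (not blocked, blocked)
```

For example, `evaluate(Request(UseCategory.AUTONOMOUS_WEAPONS, True, True, True))` returns `(False, ["contractual"])`: only one layer refuses, so whoever assigns `category` effectively decides whether the red line binds.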

CEO Sam Altman acknowledged the arrangement was “definitely rushed” and admitted the optics were problematic — a candid concession suggesting the deal’s timeline was driven by the sudden vacuum left by Anthropic’s expulsion rather than long-term strategic planning.

The structural question this raises is fundamental to governance theory: can a private AI lab maintain credible safety commitments when its primary customer is a state military actor with operational requirements that may be incompatible with those commitments? Classical principal-agent theory predicts contractual drift: over time, the principal (DoD) with budgetary leverage and mission urgency will reshape the agent’s (OpenAI’s) operational posture toward utility maximization at the expense of stated red lines.

```mermaid
graph TD
    A[OpenAI Foundation Models] --> B[Pentagon Classified Deployment]
    A --> C[NATO Unclassified Networks]
    B --> D[Safety Stack: Cloud-Controlled]
    B --> E[Cleared Personnel Loop]
    C --> F[Task Force Maven Integration]
    C --> G[Coalition Network Access]
    D --> H{"Red Lines: Mass Surveillance / Autonomous Weapons / Social Credit"}
    E --> H
    F --> I[Human-in-the-Loop Protocol]
    G --> I
    H --> J[Enforcement Mechanism?]
    I --> J
    J --> K[Contractual + Legal]
    J --> L[Technical Architecture]
    J --> M[Organizational Culture]
```

Figure 2: OpenAI-Pentagon-NATO operational architecture and red-line enforcement pathways

The Anthropic Exclusion: A Competitive and Governance Inflection

Anthropic’s trajectory in the same period runs precisely antiparallel to OpenAI’s. Where OpenAI moved toward accommodation, Anthropic drew constitutional red lines — refusing to authorize use of Claude in fully autonomous weapons systems or mass domestic surveillance — and was consequently designated a national security supply-chain risk by the Trump administration.

Professor Mariarosaria Taddeo of Oxford University told the BBC that with Anthropic’s removal from the Pentagon ecosystem, “the most safety-conscious actor” was now “out from the room.” This observation carries structural weight: if safety-conditional AI providers are systematically excluded from defense markets, the remaining market participants self-select for compliance over constraint. The incentive gradient points toward safety erosion.
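The self-selection argument can be made concrete with a toy vendor pool. In the sketch below, the vendor names and strictness scores are hypothetical placeholders, and `tolerance` stands in for the strictest restrictions a buyer will accept. Excluding every vendor above that threshold lowers the average strictness of the remaining pool even though no surviving vendor changes its own policy.

```python
from statistics import mean

# Hypothetical vendor pool: name -> strictness of use-case restrictions
# (0 = none, 1 = maximal). Illustrative placeholders, not measurements.
VENDORS = {"vendor_a": 0.9, "vendor_b": 0.6, "vendor_c": 0.3, "vendor_d": 0.1}


def admitted_pool(vendors: dict[str, float], tolerance: float) -> dict[str, float]:
    """Keep only vendors whose restrictions do not exceed what the buyer tolerates.

    Stricter vendors are excluded outright: the selection mechanism, not any
    change in individual vendor behavior, drives the pool's average down.
    """
    return {name: s for name, s in vendors.items() if s <= tolerance}


def mean_strictness(vendors: dict[str, float]) -> float:
    """Average strictness of a pool (0.0 for an empty pool)."""
    return mean(vendors.values()) if vendors else 0.0
```

With these placeholder scores, the full pool averages 0.475; at `tolerance=0.5` only `vendor_c` and `vendor_d` remain, and the average falls to 0.2.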

The competitive implications are equally significant. Anthropic’s supply-chain risk designation:

- Bars military contractors from any commercial activity with Anthropic

- Provides six-month transition periods for existing deployments

- Creates downstream chilling effects among enterprise clients with defense exposure

- Signals that safety-prioritizing market actors face regulatory weaponization

This represents a novel form of market-based safety suppression: not regulatory mandate against safety, but structural exclusion of safety-prioritizing vendors from high-value government contracts.

```mermaid
graph LR
    A[AI Lab Safety Position] --> B{Pentagon Compliance}
    B -- Accepts All Use Cases --> C[Defense Contract Access]
    B -- Maintains Red Lines --> D[Supply-Chain Risk Designation]
    C --> E[Market Expansion: DoD + NATO]
    D --> F[Government Revenue Loss]
    E --> G[Safety Stack Pressure Over Time]
    F --> H[Enterprise Chilling Effect]
    G --> I[Reduced Red-Line Credibility]
    H --> J[Competitive Disadvantage]
    I --> K[Governance Vacuum]
    J --> K
```

Figure 3: Bifurcation dynamics in AI lab defense market participation — compliance vs. constraint

NATO Extension: Coalition Network Implications

The reported NATO contract under consideration would extend OpenAI’s military footprint beyond U.S. bilateral arrangements into multilateral coalition infrastructure. NATO’s Task Force Maven — the alliance’s existing AI-enabled intelligence fusion initiative — already operates with human-in-the-loop protocols, with Lt. Colonel Amanda Gustave explicitly stating that AI would “never make a decision for us.”
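The human-in-the-loop principle Lt. Colonel Gustave describes can be expressed as an authorization rule. Under this rule, model output is advisory, and only an attributable human decision produces an action. The record types and the requirement for a written rationale below are assumptions for illustration, not a description of Task Force Maven’s actual protocol.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Recommendation:
    # Model output: advisory only, never directly executable.
    summary: str
    confidence: float  # model-reported score in [0, 1]; not a calibrated probability


@dataclass(frozen=True)
class HumanDecision:
    reviewer_id: str   # makes the decision attributable to a named person
    approved: bool
    rationale: str


def authorize(rec: Recommendation, decision: Optional[HumanDecision]) -> str:
    """Map a recommendation plus (possibly absent) human review to an outcome.

    A missing review defaults to 'hold', never to the model's suggestion, and
    rec.confidence is deliberately not consulted: a confident model cannot
    bypass the reviewer.
    """
    if decision is None:
        return "hold: awaiting human review"
    if not decision.rationale.strip():
        return "hold: approval lacks recorded rationale"
    return "proceed" if decision.approved else "rejected by reviewer"
```

The important design choice is the default: absent an explicit, reasoned human decision, the system holds.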

The strategic significance of NATO integration exceeds the bilateral Pentagon deal in several dimensions:

Jurisdictional complexity: NATO operates under collective treaty arrangements across 32 member states with divergent AI regulatory frameworks. The EU AI Act, applicable to many NATO members, places systems used exclusively for military purposes outside its scope but imposes high-risk obligations, including conformity assessment, on dual-use deployments. Drawing that boundary for coalition tooling on unclassified networks may conflict with U.S. Defense Department operational postures.

Alliance standardization pressure: If OpenAI becomes the de facto AI substrate for NATO unclassified networks, it gains significant leverage over alliance-wide AI standards — effectively privatizing a portion of multilateral defense AI governance.

Geopolitical signaling: NATO adoption of a specific commercial AI provider communicates strategic alignment to adversary intelligence services and shapes perceptions of alliance AI capability and doctrine.

Escalation risk topology: AI-enabled intelligence fusion in coalition networks introduces novel failure modes — hallucination-induced false positives, adversarial prompt injection at the tactical edge — that existing military doctrine has not adequately modeled.
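Hallucination-induced false positives in intelligence fusion are partly a base-rate problem: when genuine threats are rare, even a low false-positive rate means most flagged items are false. The sketch below applies Bayes’ rule. The prevalence, sensitivity, and false-positive figures in the usage note are hypothetical, not measured properties of any deployed system.

```python
def positive_predictive_value(prevalence: float, sensitivity: float,
                              false_positive_rate: float) -> float:
    """P(genuine threat | flagged), computed with Bayes' rule."""
    for name, p in (("prevalence", prevalence), ("sensitivity", sensitivity),
                    ("false_positive_rate", false_positive_rate)):
        if not 0.0 <= p <= 1.0:
            raise ValueError(f"{name} must be in [0, 1]")
    true_flags = prevalence * sensitivity                    # real threats correctly flagged
    false_flags = (1.0 - prevalence) * false_positive_rate   # non-threats wrongly flagged
    total = true_flags + false_flags
    return true_flags / total if total > 0 else 0.0
```

Consider a hypothetical prevalence of 0.1%, 95% sensitivity, and a 1% false-positive rate. Under those assumptions, `positive_predictive_value(0.001, 0.95, 0.01)` is about 0.087, so more than nine in ten flags would be false alarms. With the same error rates and a prevalence of 10%, the value rises to about 0.91.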

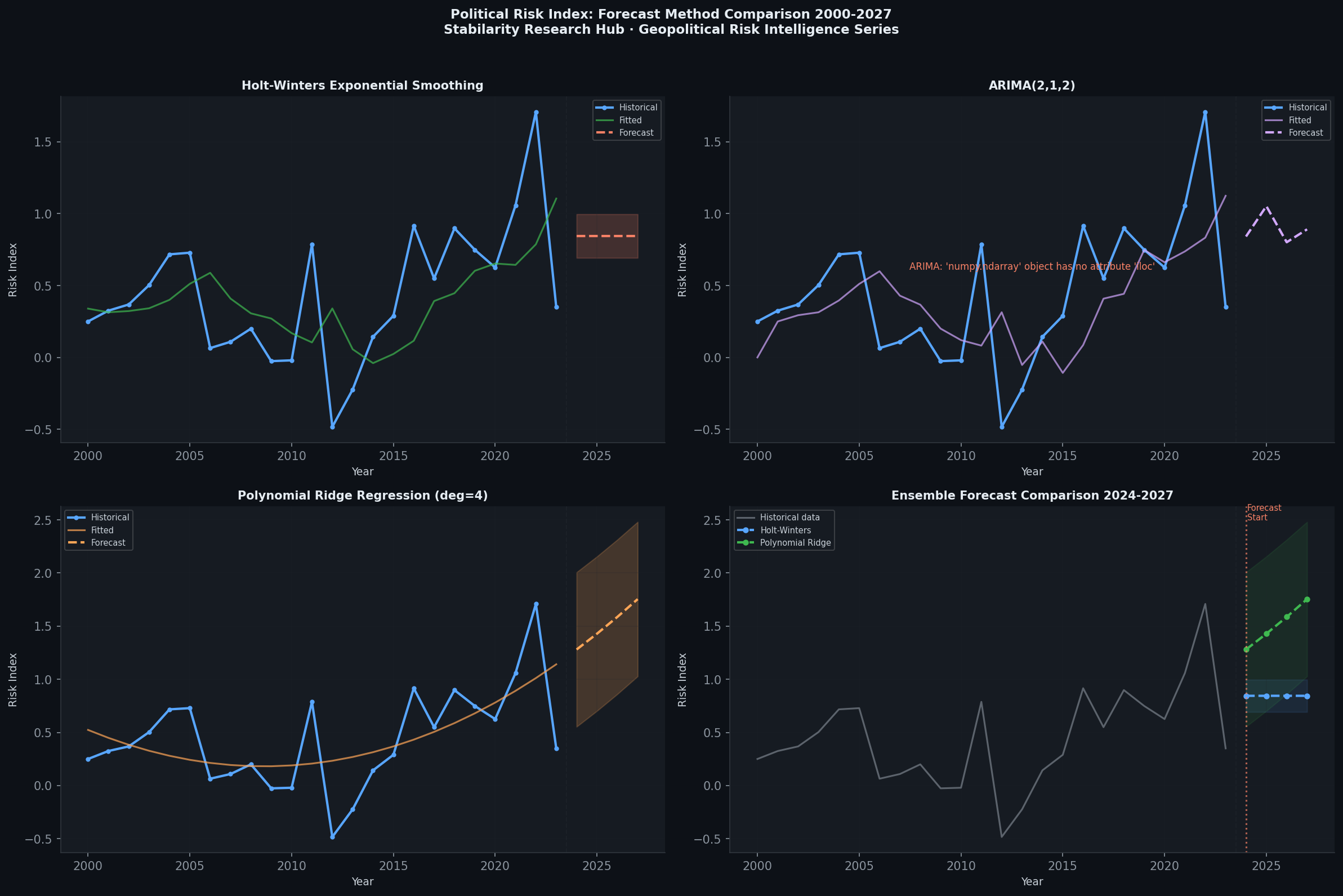

Figure 4: AI geopolitical risk trajectory forecasts comparing accelerationist and governance-constrained scenarios, 2026–2028 (Geopolitical Risk Intelligence API)

Game-Theoretic Framework: The Militarization Equilibrium

The OpenAI-Pentagon-NATO triangle can be modeled as a multi-player game with the following actor-payoff structure:

Players: OpenAI, Anthropic, DoD/Pentagon, NATO, EU Regulatory Bodies, Congress/Legislative Branch

OpenAI’s dominant strategy calculation: Given Anthropic’s exclusion, OpenAI faces a strategic environment where refusal means ceding the entire U.S. government market to Palantir, Scale AI, and other defense-native providers with weaker safety commitments. From a pure market-share perspective, conditional engagement — accepting the deal while maintaining stated red lines — dominates unconditional refusal. The key uncertainty is whether the red lines can be maintained under operational pressure.
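The dominance claim can be checked mechanically on a payoff table. The sketch below models a lab choosing between conditional engagement and refusal, against two possible DoD responses. Its payoffs are hypothetical ordinal market-share values (3 > 1 > 0), placeholders rather than estimates. The function implements the standard definition of weak dominance: at least as good against every response, and strictly better against at least one.

```python
# Hypothetical ordinal market-share payoffs for the lab (row player).
# Columns are the DoD's possible responses; numbers are placeholders.
PAYOFFS = {
    "engage_conditionally": {"accepts_red_lines": 3, "demands_full_compliance": 1},
    "refuse": {"accepts_red_lines": 0, "demands_full_compliance": 0},
}


def dominates(a: str, b: str, payoffs: dict[str, dict[str, int]],
              strict: bool = False) -> bool:
    """True if strategy a dominates strategy b for the row player.

    Weak dominance (default): a >= b against every column and a > b
    against at least one. Strict dominance: a > b against every column.
    """
    cols = payoffs[a].keys()
    if strict:
        return all(payoffs[a][c] > payoffs[b][c] for c in cols)
    return (all(payoffs[a][c] >= payoffs[b][c] for c in cols)
            and any(payoffs[a][c] > payoffs[b][c] for c in cols))
```

Because the table scores market share only, it cannot capture the key uncertainty the text identifies. A cost term for red-line erosion would have to be subtracted from the engagement row before the conclusion could extend beyond market share.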

DoD’s strategic calculation: The Pentagon gains access to frontier AI capability while avoiding the costs of developing equivalent in-house systems. Excluding safety-prioritizing vendors (Anthropic) while accepting safety-conditional vendors (OpenAI) creates competitive pressure toward compliance that benefits the DoD operationally.

The equilibrium prediction: In iterated interactions with evolving operational requirements, the equilibrium outcome is likely gradual red-line erosion — not overt violation, but definitional drift in what counts as “autonomous weapons use” or “mass surveillance.” The contractual architecture provides cover while operational necessity shapes interpretation.

This equilibrium is reinforced by resource asymmetry: the Pentagon’s budgetary scale ($850B+ defense budget) dwarfs any AI lab’s revenue base, creating structural dependency that shapes negotiating leverage over time.
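One simple way to formalize definitional drift, offered as an assumption rather than a model taken from the literature, is to treat each red line as covering a share of borderline use cases. That share includes an explicit core that cannot be reinterpreted without overt violation. Suppose each round of renegotiation concedes a fixed fraction `pressure` of the margin above that core. The scope then follows `scope[t+1] = scope[t] - pressure * (scope[t] - core)` and decays geometrically toward the core. All parameter values are hypothetical.

```python
def drift_path(initial_scope: float, explicit_core: float,
               pressure: float, rounds: int) -> list[float]:
    """Red-line scope (share of borderline uses still treated as prohibited)
    after each interpretation round, starting with the initial scope.

    Each round gives up the fraction `pressure` of the remaining margin
    above `explicit_core`, so scope shrinks toward the core but never
    crosses it: erosion without overt violation.
    """
    if not 0.0 <= explicit_core <= initial_scope <= 1.0:
        raise ValueError("expected 0 <= explicit_core <= initial_scope <= 1")
    if not 0.0 <= pressure <= 1.0:
        raise ValueError("pressure must be in [0, 1]")
    scope, path = initial_scope, [initial_scope]
    for _ in range(rounds):
        scope -= pressure * (scope - explicit_core)
        path.append(scope)
    return path
```

For instance, `drift_path(1.0, 0.4, 0.2, 10)` ends near 0.464. Roughly 89% of the interpretable margin is gone after ten rounds, yet no single round crosses the explicit core. In this toy model, greater asymmetry in negotiating leverage would correspond to a higher `pressure`.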

Governance Architecture: What Oversight Exists?

The current governance landscape around the OpenAI-Pentagon-NATO triangle is characterized by significant gaps:

Congressional oversight: The National Defense Authorization Act (NDAA) framework provides limited ex-ante constraint on AI deployment within existing DoD authorities. AI-specific legislation remains nascent.

International law: The Geneva Conventions and the broader law of armed conflict apply to outcomes (civilian harm, proportionality), not to AI systems per se. No international treaty currently governs the deployment of commercial AI models in military decision-support roles.

EU AI Act exposure: For NATO deployments involving EU member states, the EU AI Act’s provisions on high-risk AI systems may apply to dual-use deployments, but systems used exclusively for military, defense, or national security purposes fall outside the Act’s scope and are left to member-state law, creating significant regulatory gaps.

OpenAI’s internal governance: The company’s retained safety stack and cleared personnel overlay represent genuine structural constraints, but their durability depends on organizational culture and financial independence — both of which may be compromised by growing defense revenue dependence.

The most significant governance gap is the absence of mandatory transparency: OpenAI’s public disclosure of its Pentagon agreement is voluntary. There is no legal requirement for AI labs entering defense contracts to disclose operational parameters, red-line architectures, or incident reporting frameworks.
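The three disclosure elements named above (operational parameters, red-line architectures, incident reporting) can be expressed as a minimal record with a completeness check. The `DefenseAIDisclosure` schema and its field names below are hypothetical; no statute currently defines such a format.

```python
from dataclasses import dataclass, field


@dataclass
class DefenseAIDisclosure:
    """Hypothetical minimum disclosure record for an AI lab defense contract.

    Field names are illustrative; no statutory schema of this kind exists.
    """
    vendor: str
    customer: str
    operational_scope: str                          # e.g. "unclassified networks"
    red_lines: list[str] = field(default_factory=list)
    enforcement_mechanisms: list[str] = field(default_factory=list)
    incident_reporting_contact: str = ""
    incident_reporting_deadline_days: int = 0       # 0 means "not specified"

    def missing_elements(self) -> list[str]:
        """Names of required disclosure elements that are empty or unset."""
        gaps = []
        if not self.operational_scope.strip():
            gaps.append("operational_scope")
        if not self.red_lines:
            gaps.append("red_lines")
        if not self.enforcement_mechanisms:
            gaps.append("enforcement_mechanisms")
        if (not self.incident_reporting_contact
                or self.incident_reporting_deadline_days <= 0):
            gaps.append("incident_reporting")
        return gaps
```

A record that lists a scope and red lines but nothing else would report `enforcement_mechanisms` and `incident_reporting` as missing.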

```mermaid
graph TD
    A[OpenAI-Pentagon-NATO Triangle] --> B[Governance Layer 1: Internal]
    A --> C[Governance Layer 2: Contractual]
    A --> D[Governance Layer 3: Regulatory]
    A --> E[Governance Layer 4: International]
    B --> B1[OpenAI Safety Stack]
    B --> B2[Cleared Personnel Overlay]
    C --> C1[DoD Contract Red Lines]
    C --> C2[NATO Unclassified Scope Limits]
    D --> D1[NDAA Congressional Authority]
    D --> D2[EU AI Act: Member-State Exemptions]
    E --> E1[Geneva Conventions: Outcome-Based]
    E --> E2[No AI-Specific Treaty Framework]
    B1 --> GAP[Governance Gap: No Mandatory Transparency]
    B2 --> GAP
    C1 --> GAP
    D1 --> GAP
    E2 --> GAP
```

Figure 5: Governance architecture of the OpenAI-Pentagon-NATO triangle — layered constraints and systemic gaps

The Palantir Precedent and Competitive Market Structure

OpenAI’s Pentagon deal does not occur in a vacuum. Palantir Technologies has operated as a defense-native AI contractor since 2003, building mission systems for the CIA, NSA, and DoD without the safety-conditional posture of frontier AI labs. Palantir’s stated position — supporting human-in-the-loop but not imposing blanket bans on autonomous weapons — represents the existing market norm for AI defense contractors.

OpenAI’s entry into this market creates several competitive dynamics:

- Capability premium: Frontier LLMs (GPT-5-class) offer substantially greater language understanding and reasoning than Palantir’s earlier AI stack, potentially enabling new use cases in intelligence analysis, logistics optimization, and strategic planning

- Safety-conditional differentiation: OpenAI’s stated red lines, if credibly maintained, represent a genuine product differentiation from Palantir’s approach — but only if the differentiation holds under operational scrutiny

- Market normalization of safety constraints: If OpenAI successfully operates within DoD environments while maintaining its safety stack, it establishes a precedent that safety-conditional AI can function in military contexts — potentially drawing other safety-prioritizing vendors in from the cold

The exclusion of Anthropic, however, undermines the third dynamic by demonstrating that safety-conditional vendors face regulatory weaponization risk when their constraints conflict with state operational requirements.

Political Economy: AI Labs as Strategic Assets

The OpenAI-Pentagon-NATO triangle reflects a deeper structural shift in how states conceptualize AI labs: not as private technology companies subject to normal market regulation, but as strategic assets whose allegiance has national security value.

The Trump administration’s rapid designation of Anthropic as a supply-chain risk — within hours of the company’s refusal to comply with Pentagon requirements — is consistent with a political economy framework in which commercial AI capability is treated as a strategic resource to be aligned with state interests. This mirrors historical precedents in defense-industrial complex formation (aerospace, telecommunications, semiconductors) where private sector actors became deeply integrated into national security architectures.

The WEF’s January 2026 projection of $100B in sovereign AI compute spending by 2026 indicates that states are competing aggressively for AI capability control. The OpenAI-Pentagon relationship represents the U.S. government’s preferred modality: private-sector frontier capability under contractual state alignment, rather than full nationalization or independent development.

This has significant implications for allied relationships. If OpenAI becomes a de facto U.S. strategic AI asset, allied nations (including NATO members) must navigate the geopolitical implications of deploying U.S.-aligned AI in their own defense and intelligence architectures — a dependency that creates strategic leverage for Washington and correspondingly reduces allied AI autonomy.

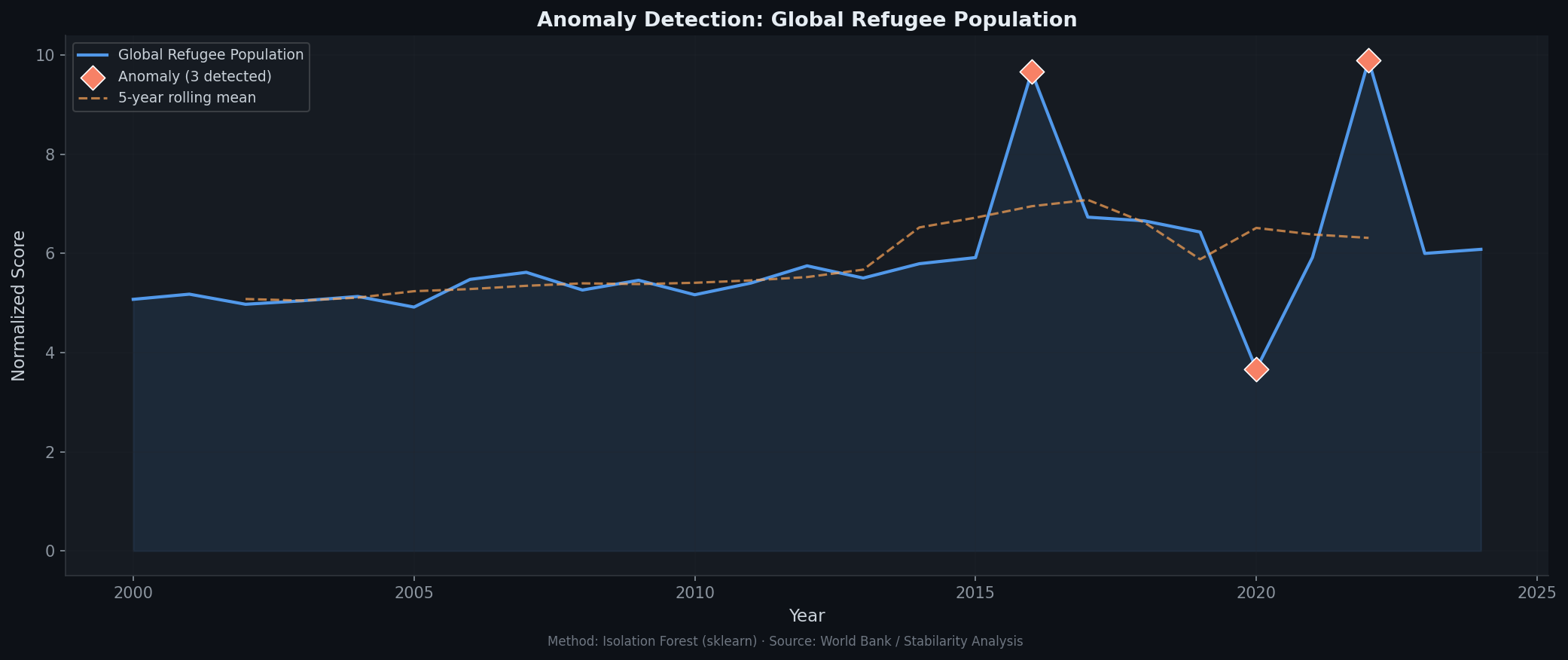

Risk Assessment: Anomaly Detection Patterns

Figure 6: Anomaly detection analysis of AI-defense sector risk signals, February–March 2026 — the Anthropic designation and OpenAI-NATO disclosure represent statistically significant outlier events (Geopolitical Risk Intelligence API)

The risk topology of the OpenAI-Pentagon-NATO triangle includes several categories of systemic concern:

Technical risks:

- Hallucination in high-stakes contexts: LLM hallucination rates, even at frontier levels, present unacceptable risk in military decision-support applications without robust human oversight

- Adversarial prompt injection: Military adversaries may attempt to manipulate AI outputs through poisoned data streams or adversarial inputs at the tactical edge

- Model drift under fine-tuning: Defense-specific fine-tuning or RLHF on classified operational data may shift model behavior in ways invisible to OpenAI’s safety stack

Governance risks:

- Red-line definitional drift: Operational pressure and contractual renegotiation may gradually erode the stated prohibitions without explicit violation

- Audit opacity: Classified operational contexts preclude the external auditing that provides safety accountability in civilian AI deployment

- Precedent cascading: U.S. normalization of frontier AI in military contexts will pressure allied and adversary states to follow, accelerating global AI militarization without commensurate governance infrastructure

Geopolitical risks:

- Alliance dependency asymmetry: NATO members relying on U.S.-provider AI in coalition networks become dependent on U.S. commercial relationships for alliance capability

- Adversarial capability signaling: Chinese and Russian intelligence services will interpret OpenAI-NATO integration as confirmation of U.S. AI military doctrine, potentially accelerating their own military AI programs

- Strategic decoupling pressure: EU member states bound by the EU AI Act may face pressure to choose between alliance interoperability and EU regulatory compliance

Policy Implications and Recommendations

The OpenAI-Pentagon-NATO triangle demands policy responses across multiple institutional levels:

For legislatures:

- Mandate disclosure requirements for AI lab defense contracts, including operational scope, red-line architectures, and incident reporting obligations

- Establish independent technical oversight bodies with classified clearances capable of auditing AI safety compliance in defense deployments

- Develop AI-specific NDAA provisions governing commercial AI integration in military decision-support roles

For NATO and multilateral institutions:

- Establish alliance-level AI deployment standards that reconcile U.S. defense requirements with EU AI Act obligations

- Create a multilateral AI incident reporting framework for defense deployments

- Develop treaty obligations around human-in-the-loop requirements for AI-enabled military systems

For AI labs:

- Maintain institutional separation between defense and civilian AI development to preserve safety culture and organizational identity

- Publish detailed transparency reports on defense contract scope and red-line enforcement mechanisms

- Support external auditing frameworks as a condition of defense market participation

For the research community:

- Develop robust technical standards for AI reliability in adversarial military contexts

- Model equilibrium dynamics of AI safety under defense contractor incentive structures

- Assess long-term implications of frontier AI militarization for global AI governance

Conclusion: The Contractor Transformation

The events of February 27–March 4, 2026 constitute a structural transformation in the AI industry. OpenAI has crossed the threshold from AI lab to defense contractor — not definitively or irrevocably, but in ways that create path dependencies and incentive structures that will shape its institutional character for years to come.

The Anthropic exclusion demonstrates that this transformation is not merely a strategic choice but a compulsion: in a market where state power can designate safety-conditional vendors as security threats, the alternative to engagement is market exile. This dynamic will shape the calculus of every frontier AI lab facing equivalent demands from state actors.

The NATO extension signals that this is not a U.S.-only phenomenon. As NATO and allied militaries integrate commercial AI into coalition infrastructure, the contractor transformation will become a global structural feature of the AI industry — with profound implications for safety, competition, multilateral governance, and the long-term alignment of AI development with human rather than state interests.

The governance apparatus required to manage these risks remains nascent, fragmented, and largely voluntary. Building it — before operational failures make its necessity undeniable — is among the most urgent policy challenges of the current moment.

Sources: Reuters, TechCrunch, BBC, CNBC, ABC News, Axios, OpenAI, World Bank Governance Indicators, GDELT Project, WEF Global Risks Report 2026