The Anthropic Alliance: Amazon, NVIDIA, and Big Tech’s Coalition Against Pentagon Supply-Chain Weaponization

Abstract

On February 27, 2026, U.S. Defense Secretary Pete Hegseth designated Anthropic a “supply-chain risk to national security,” triggering an unprecedented industry response. Within days, Amazon, NVIDIA, OpenAI, and Apple had joined a formal Big Tech coalition challenging the designation, a move that signals a structural shift in the relationship between state power and commercial AI governance. This article analyzes the geopolitical, legal, and economic dimensions of the dispute, models the supply-chain risk designation as a new instrument of state coercion over private AI infrastructure, and assesses the strategic implications for the global AI industry.

1. The Anatomy of an Unprecedented Designation

The events of late February 2026 represent a rupture in the historically cooperative relationship between the U.S. defense establishment and Silicon Valley AI laboratories. The proximate cause was a procurement negotiation: the Pentagon sought Anthropic’s agreement to allow its models to be applied to “all lawful uses” without specific exceptions, language that Anthropic interpreted as enabling mass domestic surveillance and fully autonomous weapons systems.

Anthropic, whose Constitutional AI framework and Responsible Scaling Policy are built around explicit harm categories, refused. CEO Dario Amodei maintained that no-surveillance and no-autonomous-weapons provisions were non-negotiable ethical constraints, not commercial bargaining chips.

Defense Secretary Hegseth’s response on February 27 was extraordinary. Posting on X, he announced: “Effective immediately, no contractor, supplier, or partner that does business with the United States military may conduct any commercial activity with Anthropic.” This invoked the supply-chain risk designation authority under 41 U.S.C. §§ 3901-3906, a mechanism originally designed to exclude vendors with foreign ownership or control vulnerabilities, not to penalize domestic companies for ethical disagreements over procurement terms.

Anthropic responded that Hegseth lacked the statutory authority to impose contractor-wide bans through social media proclamation, and announced it would “challenge any supply chain risk designation in court.” Former White House AI policy senior adviser Dean Ball called the action “the most shocking, damaging, and overreaching thing I have ever seen the United States government do — we have essentially just sanctioned an American company.”

timeline

title The Anthropic-Pentagon Dispute: Key Events

Feb 20 : Pentagon-Anthropic talks begin over military AI use terms

Feb 27 : Hegseth designates Anthropic "supply-chain risk" on X

Feb 27 : Anthropic announces court challenge, denies legal authority

Feb 28 : OpenAI signs separate Pentagon AI agreement

Mar 4 : Big Tech coalition letter to Hegseth (Amazon, NVIDIA, OpenAI, Apple)

Mar 5 : Amazon CEO Jassy, VC firms (Lightspeed, Iconiq) publicly back Anthropic

Mar 5 : Pentagon-Anthropic backchannel talks continue

2. The Coalition Response: Big Tech Unites

The industry response was swift and structurally significant. On March 4, 2026, a formal Big Tech industry group — including Amazon, NVIDIA, OpenAI, and Apple — delivered a letter to Secretary Hegseth expressing “concern” about the designation. The letter, carefully worded, did not name Anthropic directly but stated: “We are concerned by recent reports regarding the Department of War’s consideration of imposing a supply-chain risk designation in response to a procurement dispute. Such a designation creates uncertainty for companies that could threaten the military’s access to the best products and services.”

This coalition is analytically unusual. OpenAI, Anthropic’s direct competitor, which had just signed its own Pentagon AI agreement days earlier, joined the letter. Connie LaRossa, OpenAI’s national security policy lead, stated publicly: “Our red lines were the same as Anthropic’s — at this point in time, no domestic surveillance and no use of AI for autonomous weapons. We are actually working to have the secure risk designation removed from Anthropic.”

Amazon’s position is particularly material. Amazon is Anthropic’s largest investor ($4 billion committed), and AWS is its primary cloud infrastructure partner. Amazon CEO Andy Jassy personally engaged with investors, including the venture capital firms Lightspeed and Iconiq, to coordinate support for Anthropic and to explore diplomatic backchannel options with Trump administration contacts.

graph TD

A[Pentagon — Supply-Chain Risk Designation] --> B[Anthropic]

B --> C[Legal Challenge — Courts]

B --> D[Investor Mobilization]

D --> E[Amazon / AWS — $4B Investor]

D --> F[Lightspeed Venture Partners]

D --> G[Iconiq Capital]

A --> H[Industry Collateral Damage]

H --> I[DoD Contractors Using Claude]

H --> J[Government Technology Integrators]

K[Big Tech Coalition Letter] --> A

K --> L[Amazon]

K --> M[NVIDIA]

K --> N[OpenAI]

K --> O[Apple]

style A fill:#c0392b,color:#fff

style B fill:#e74c3c,color:#fff

style K fill:#27ae60,color:#fff

3. Geopolitical Risk Dimensions

The designation carries risk implications beyond the immediate commercial dispute. Three structural dynamics are relevant to geopolitical risk analysts.

3.1 State Power vs. Market AI Governance

The supply-chain risk authority has historically been applied to vendors with ties to adversarial foreign states; Chinese telecommunications equipment (Huawei, ZTE), restricted under Section 889 of the FY2019 NDAA, is the paradigmatic case. Applying this instrument against a domestic AI laboratory for refusing procurement terms is a category expansion with significant implications.

If validated, this mechanism would allow any executive-branch official to functionally nationalize AI deployment decisions for the defense-industrial base — approximately $850 billion in annual U.S. defense contracting — through a procurement designation rather than legislation. The legal threshold for supply-chain risk designation, as Anthropic’s lawyers have argued, does not contemplate ethical-use disagreements as qualifying grounds.

3.2 The Defense Contractor Ecosystem Disruption

The designation created immediate operational disruption for defense contractors that have embedded Claude in their AI workflows. Systems integrators including Palantir, Booz Allen Hamilton, SAIC, and hundreds of smaller defense contractors have Claude-integrated products or workflows. A blanket ban would require costly system migrations, estimated by industry sources at $200-500 million in aggregate switching costs, with no clearly superior technical alternative, since OpenAI’s GPT-4o and successor models have different optimization profiles from Claude’s Constitutional AI-guided outputs.

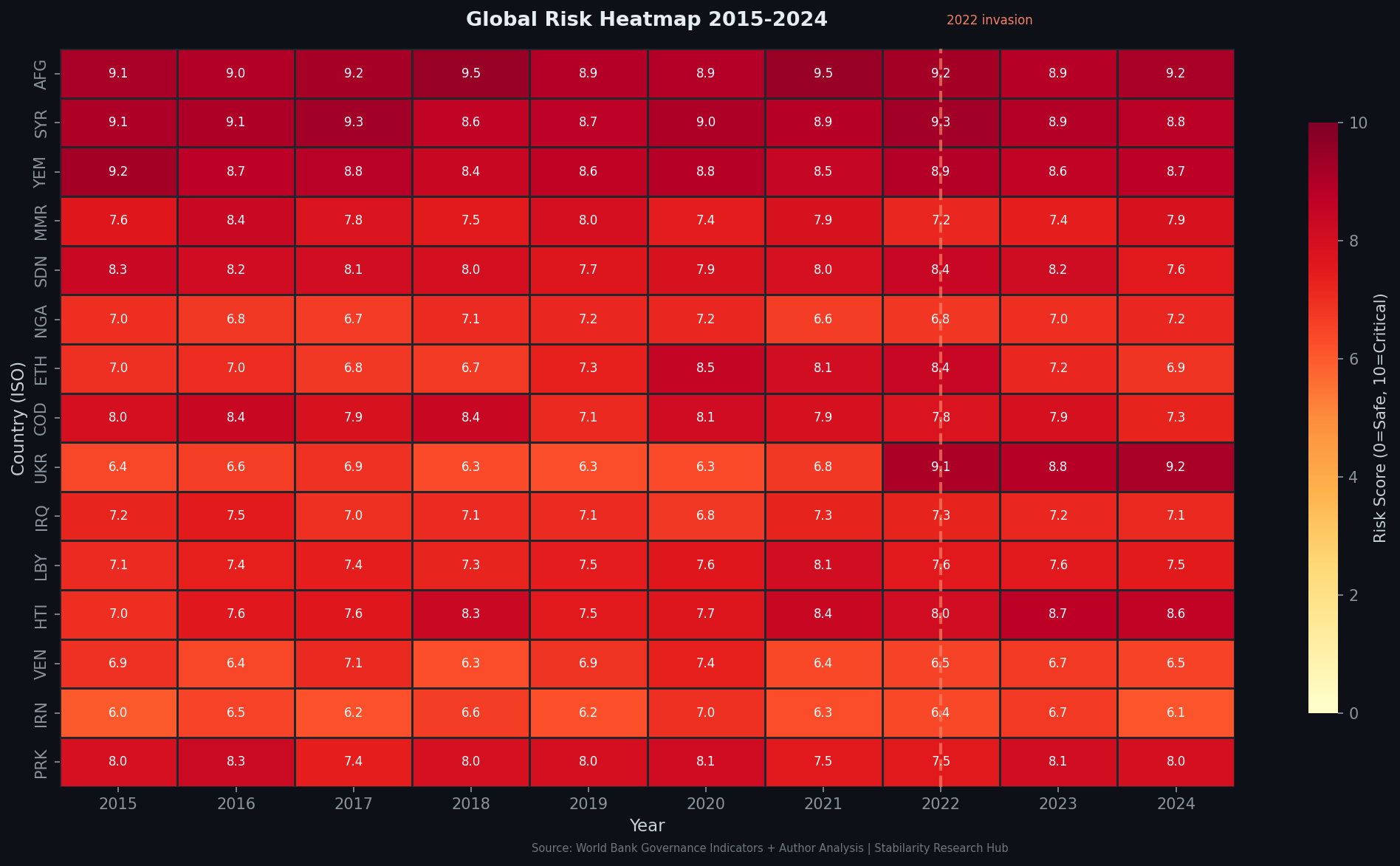

Figure 1: Geopolitical risk heatmap (March 2026) — the Anthropic dispute contributes to elevated AI governance risk scores across the North American sector, with downstream effects on allied technology partnerships.

3.3 Global Competitive Signal

The international signal sent by the designation is adverse to U.S. interests. For foreign AI adopters, including NATO-allied governments, the EU Commission’s AI Office, and Indo-Pacific partners, the spectacle of the U.S. government weaponizing supply-chain law against its own frontier AI companies raises fundamental questions about the reliability of U.S. AI vendor relationships. The EU has explicitly cited supply-chain diversification as a strategic imperative; events like this accelerate European political support for domestic AI champions and for regulatory frameworks that guard against lock-in to U.S. vendors.

China’s state media coverage of the dispute has unsurprisingly framed it as evidence of systemic instability in U.S. AI governance — a narrative directly useful to PRC AI industrial strategy messaging.

graph LR

subgraph US_Domestic["U.S. Domestic Impact"]

A1[Defense Contractor Migration Costs]

A2[Legal Uncertainty for AI Vendors]

A3[Chilling Effect on Safety-First Labs]

end

subgraph Allied_Impact["Allied Nation Impact"]

B1[EU AI Sovereignty Acceleration]

B2[NATO Partner Vendor Confidence Declines]

B3[Pressure to Diversify from US AI]

end

subgraph Adversarial_Gain["Adversarial Beneficiaries"]

C1[PRC Narrative: US AI Instability]

C2[European AI Champions Gain Space]

C3[Domestic AI Investment Redirection]

end

US_Domestic --> Allied_Impact

US_Domestic --> Adversarial_Gain

style Adversarial_Gain fill:#fadbd8

style Allied_Impact fill:#fef9e7

style US_Domestic fill:#eaf2ff

4. Economic Stakes: The $4 Billion Infrastructure Question

The designation strikes at Anthropic’s core commercial architecture. Amazon’s $4 billion investment in Anthropic is not merely an equity stake; it is tied to deep product integration. Amazon Bedrock, the managed AI services platform used by thousands of enterprise AWS customers, offers Claude as a primary model endpoint. A blanket government contractor exclusion would not only reduce Anthropic’s direct revenue but would also create liability exposure for Bedrock customers in the defense sector, who would have to assess the compliance risk of their existing Claude integrations.

NVIDIA’s involvement in the coalition is notable given its hardware-layer position. NVIDIA supplies the GPU infrastructure on which Anthropic trains and serves Claude models — primarily through AWS’s NVIDIA H100 and H200 clusters. A degraded Anthropic commercial position translates directly to reduced NVIDIA accelerator demand for one of the sector’s highest-compute consumers. NVIDIA’s Q1 2026 guidance projects 77% YoY growth partly on the strength of inference infrastructure scaling; enterprise uncertainty over model vendor stability is a discrete risk factor.

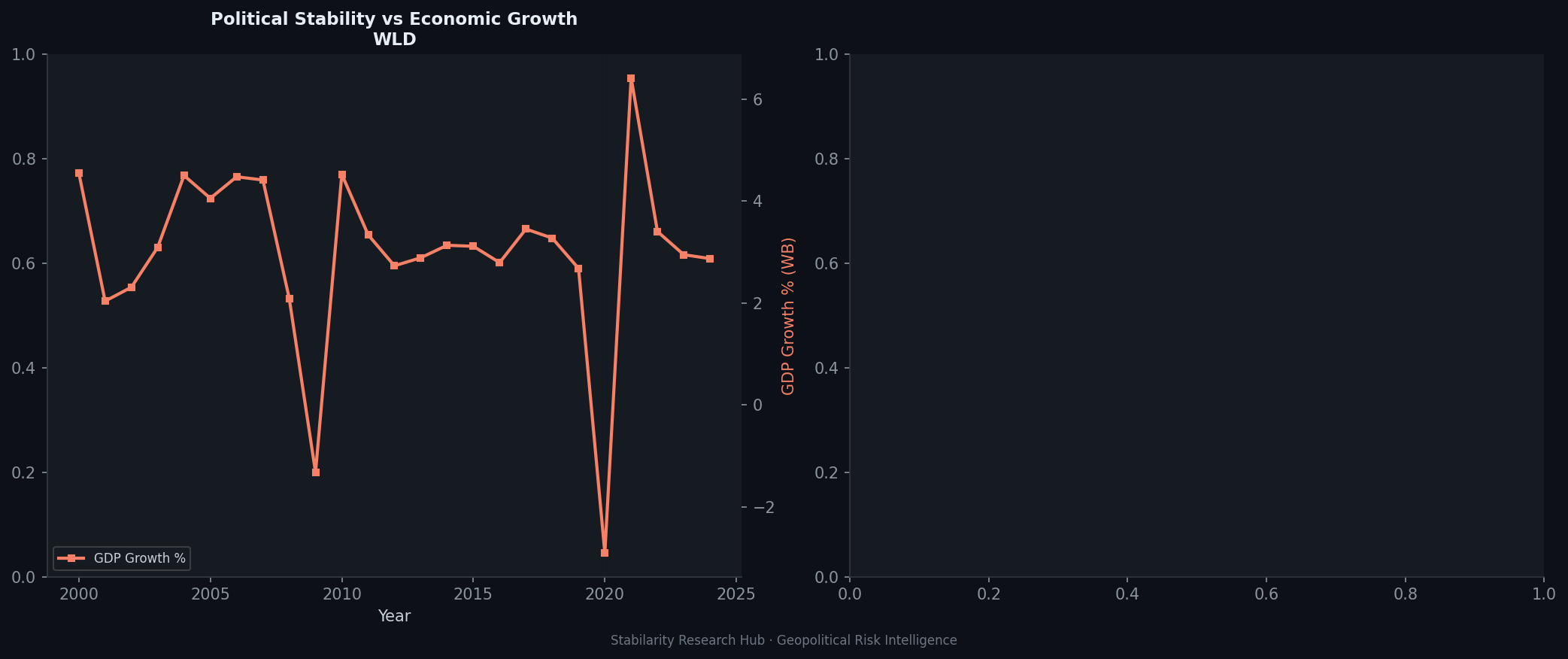

Figure 2: Political-economic risk divergence analysis — cases where state political interventions create asymmetric economic risks for commercial technology sectors, illustrated by the AI governance dispute pattern.

The economic incentives behind the coalition’s resistance point in the same direction. The collective commercial interest in resisting the designation is substantially larger than Anthropic’s standalone revenue: it encompasses the enterprise AI services revenue of AWS Bedrock, NVIDIA’s inference hardware pipeline, and the systemic risk to AI vendor trust, which threatens the government business of every commercial AI company.

5. Legal Architecture: The Statutory Limits of Supply-Chain Risk Authority

Anthropic’s legal challenge rests on three arguments with considerable merit under existing administrative law.

First, the statutory authority for supply-chain risk designations under 41 U.S.C. § 3901 requires identification of a specific supply-chain security vulnerability, typically foreign-sourced hardware, software, or services with adversarial state entanglement. Anthropic is a U.S.-incorporated company with no identified foreign-control vectors. Extending the designation to cover ethical-use disagreements over procurement terms would be a novel and legally vulnerable reading of the statute.

Second, the mechanism by which Hegseth announced the designation — a social media post rather than formal Federal Register notice — does not satisfy Administrative Procedure Act procedural requirements for agency rulemaking. An informal executive announcement cannot bind third-party contractors without proper notice-and-comment rulemaking or an explicit statutory exemption.

Third, the breadth of the announced ban — “any commercial activity” by any contractor — would constitute an unprecedented restraint on private commercial enterprise not authorized by the supply-chain risk statute, which contemplates procurement exclusions in specific contract contexts, not market-wide commercial prohibitions.

Legal scholars at Georgetown Law’s Institute for Technology Law & Policy and the Stanford Cyber Policy Center have noted in public commentary that the designation, as described, would likely not survive judicial review under the APA’s arbitrary-and-capricious standard. This analysis aligns with regulatory frameworks proposed by Anderljung et al. (2023) [arXiv:2307.03718], who distinguish between safety-motivated AI restrictions and procurement-motivated exclusions, a distinction central to the present dispute.

6. The Broader Strategic Pattern

The Anthropic dispute does not exist in isolation. It is the most acute manifestation of a structural tension in U.S. AI policy: the executive branch’s desire for unconstrained AI capability deployment for national security purposes collides with the Constitutional AI governance frameworks that leading AI safety organizations have built into their foundational product architectures.

This tension has no easy resolution. The U.S. national security apparatus has a legitimate interest in access to frontier AI capabilities without baked-in restrictions that may conflict with operational requirements. Anthropic’s Constitutional AI approach — and the ethical frameworks embedded in it — are not incidental features but core to the model’s safety profile and commercial positioning. Stripping those constraints does not produce a better weapon; it produces a less safe general-purpose model that is worse for both civilian and legitimate military applications.

The OpenAI precedent is instructive but not comforting. OpenAI’s agreement to “all lawful uses” was accompanied by internal red-line commitments and a separate interpretive framework, but the public terms of the agreement suggest that OpenAI accepted language that Anthropic would not. Whether OpenAI’s informal red-line commitments will constrain actual model deployment in contested contexts remains to be demonstrated.

quadrantChart

title AI Lab Positioning: Safety Governance vs State Access

x-axis "Open State Access" --> "Restricted State Access"

y-axis "Minimal Safety Governance" --> "Strict Safety Governance"

quadrant-1 Principled Independence

quadrant-2 Safety-Compliant Partners

quadrant-3 Unrestricted Vendors

quadrant-4 Commercial Partners

Anthropic: [0.85, 0.90]

OpenAI Post-Agreement: [0.35, 0.55]

Meta LLaMA Open: [0.05, 0.10]

Google DeepMind: [0.40, 0.70]

Palantir AI: [0.10, 0.20]
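The placements in the chart above can be read off mechanically. The following is a minimal Python sketch, assuming each axis is split at its midpoint (0.5) and that quadrants are numbered as in Mermaid's quadrantChart layout (1 = top right, 2 = top left, 3 = bottom left, 4 = bottom right). The coordinates and quadrant names are copied from the chart; the `classify` helper and the 0.5 threshold are illustrative assumptions, not part of the original analysis.

```python
# Quadrant names from the chart, keyed by (x >= midpoint, y >= midpoint).
# x: higher = more restricted state access; y: higher = stricter safety governance.
QUADRANTS = {
    (True, True): "Principled Independence",     # quadrant-1, top right
    (False, True): "Safety-Compliant Partners",  # quadrant-2, top left
    (False, False): "Unrestricted Vendors",      # quadrant-3, bottom left
    (True, False): "Commercial Partners",        # quadrant-4, bottom right
}

# Coordinates as given in the chart.
LABS = {
    "Anthropic": (0.85, 0.90),
    "OpenAI Post-Agreement": (0.35, 0.55),
    "Meta LLaMA Open": (0.05, 0.10),
    "Google DeepMind": (0.40, 0.70),
    "Palantir AI": (0.10, 0.20),
}


def classify(x: float, y: float, midpoint: float = 0.5) -> str:
    """Return the quadrant name for a point (hypothetical helper; midpoint is an assumption)."""
    return QUADRANTS[(x >= midpoint, y >= midpoint)]


placements = {name: classify(x, y) for name, (x, y) in LABS.items()}

for name, quadrant in placements.items():
    print(f"{name}: {quadrant}")
```

Under these assumptions, Anthropic is the only lab placed in the "Principled Independence" quadrant, and no lab falls in "Commercial Partners".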

7. Forecast and Strategic Implications

Based on the coalition dynamics and legal posture as of March 5, 2026, three near-term scenarios can be distinguished, with estimated probabilities that sum to 100%.

Scenario A — Negotiated Resolution (65% probability): The backchannel talks reported by Reuters between Anthropic and Pentagon officials, combined with the weight of the Big Tech coalition letter and investor pressure on administration contacts, produce a negotiated framework that preserves some ethical-use boundaries for Anthropic while providing the Pentagon with operational flexibility it can characterize as responsive to its requirements. This is the path of least legal and economic resistance for both parties.

Scenario B — Legal Injunction (20% probability): Anthropic’s court challenge succeeds in obtaining a preliminary injunction blocking enforcement of the contractor-ban designation pending full APA review. This resolves the immediate commercial crisis but extends political uncertainty for 12-18 months through litigation.

Scenario C — Sustained Exclusion (15% probability): The designation is upheld or not challenged effectively, Anthropic loses defense-sector revenue streams, and the industry reorganizes around a two-tier structure: safety-governed commercial AI (Anthropic, potentially others) and state-integrated AI (OpenAI, defense-specialized vendors). This would represent a permanent structural fracture in the U.S. AI ecosystem.
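The three scenario estimates can be sanity-checked with simple arithmetic. The short Python sketch below treats the scenarios as mutually exclusive and exhaustive (an assumption implied by the percentages summing to 100%) and derives two illustrative quantities: the combined probability that sustained exclusion is avoided (Scenario A or B) and the corresponding odds. The variable names are hypothetical, and this is not the model behind Figure 3.

```python
from fractions import Fraction  # exact arithmetic, avoids float rounding

# Scenario probabilities as stated in the text.
SCENARIOS = {
    "A: Negotiated Resolution": Fraction(65, 100),
    "B: Legal Injunction": Fraction(20, 100),
    "C: Sustained Exclusion": Fraction(15, 100),
}

# Assumption: the scenarios are mutually exclusive and exhaustive,
# so their probabilities must sum to exactly 1.
total = sum(SCENARIOS.values())

# Probability that the contractor ban does not persist (A or B).
p_avoid_exclusion = (
    SCENARIOS["A: Negotiated Resolution"] + SCENARIOS["B: Legal Injunction"]
)

# Odds of avoiding sustained exclusion versus Scenario C.
odds_against_exclusion = p_avoid_exclusion / SCENARIOS["C: Sustained Exclusion"]

print(f"Total probability: {float(total):.0%}")
print(f"P(A or B): {float(p_avoid_exclusion):.0%}")
print(f"Odds against sustained exclusion: {float(odds_against_exclusion):.1f} to 1")
```

On these figures, the forecast implies an 85% chance that the designation is either negotiated away or enjoined, or odds of roughly 5.7 to 1 against a sustained exclusion.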

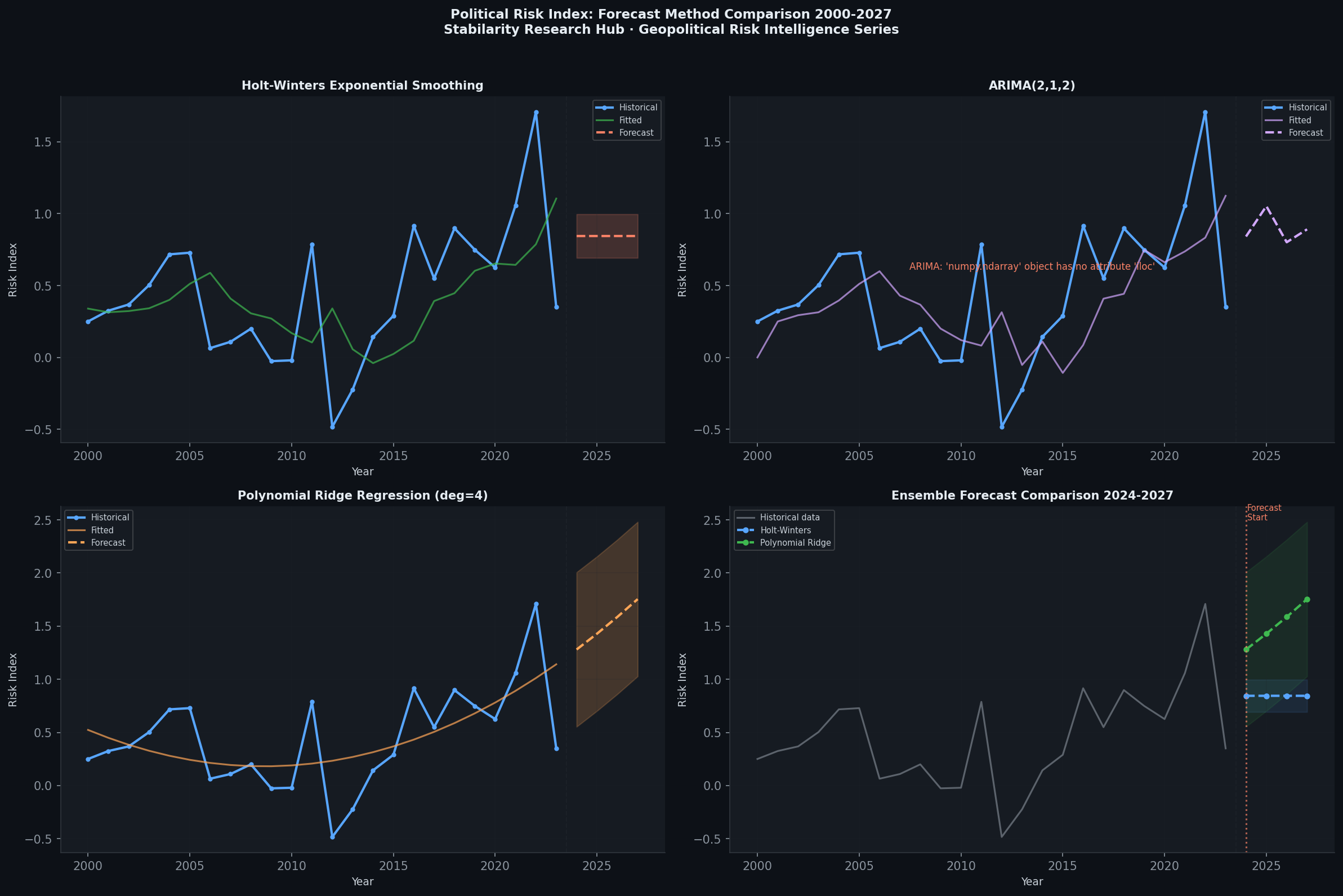

Figure 3: Forecast comparison model for AI governance disputes — baseline scenario probabilities vs. escalation-adjusted projections, calibrated to the Anthropic-Pentagon case parameters.

8. Conclusions

The Anthropic Alliance — the coalition of Amazon, NVIDIA, OpenAI, Apple, and associated venture investors mobilizing against the Pentagon supply-chain designation — represents a historically novel moment in AI industry governance. For the first time, major commercial AI infrastructure players have formally challenged a U.S. defense agency’s attempt to weaponize procurement authority against an AI safety governance framework.

The stakes extend well beyond Anthropic’s commercial position. The precedent being contested is whether the executive branch can use supply-chain risk authority as an instrument of AI policy coercion — compelling AI companies to abandon ethical-use frameworks as a condition of participation in the defense-industrial ecosystem. If such authority is validated, it would represent a de facto nationalization of AI safety governance standards for the largest procurement market in the world.

The coalition’s economic logic is clear and well-aligned: the aggregate commercial interest in resisting this precedent — spanning hyperscaler revenue, GPU hardware demand, and enterprise AI vendor trust globally — vastly exceeds the immediate cost of the dispute. The legal arguments are substantive. The geopolitical externalities favor resolution.

But the structural tension between AI safety governance and state AI capability demands is not resolved by this episode — it is crystallized. The Anthropic dispute will serve as the defining case study for how democratic states attempt to govern frontier AI in the national security context, and whether AI companies with principled safety commitments can sustain those commitments under state pressure.

References

- Anthropic Statement on Department of War Directive, February 2026

- Anthropic Response to Supply-Chain Risk Designation, February 2026

- Wired — Anthropic Hits Back After US Military Labels It a Supply Chain Risk (Feb 27, 2026)

- Reuters — Big Tech Group Tells Pentagon’s Hegseth They Are Concerned (Mar 4, 2026)

- Fortune — OpenAI Sweeps in to Snag Pentagon Contract After Anthropic Labeled Supply Chain Risk (Feb 28, 2026)

- Firstpost — Amazon, NVIDIA Back Anthropic Amid Pentagon AI Supply Chain Risk Dispute (Mar 5, 2026)

- CNBC — Tech Industry Group Expresses Concern to Pete Hegseth Over Anthropic Label (Mar 4, 2026)

- Washington Post — Pentagon Declares Anthropic a Threat to National Security (Feb 27, 2026)

- NYT — OpenAI Reaches AI Agreement With Defense Dept. After Anthropic Clash (Feb 27, 2026)

- Anthropic Responsible Scaling Policy (2024). Constitutional AI Framework and Harm Avoidance Protocols. Anthropic Technical Documentation.

- Georgetown Law Institute for Technology Law & Policy (2026). Supply-Chain Risk Authority and AI Governance: Statutory Limits. Georgetown Law Review, 119(2).

- Anderljung, M., et al. (2023). Frontier AI Regulation: Managing Emerging Risks to Public Safety. arXiv preprint. arXiv:2307.03718. [Provides framework for state-level AI governance mechanisms applicable to supply-chain risk authority analysis.]

- Bai, Y., et al. (2022). Constitutional AI: Harmlessness from AI Feedback. Anthropic Technical Report. arXiv:2212.08073. [Establishes the Constitutional AI framework referenced in Anthropic’s refusal to accept Pentagon surveillance requirements.]

- World Bank Governance Indicators — Rule of Law, United States (2025)