Sensing and Perception for Humanoid Robots

Ivchenko, O. (2026). Sensing and Perception: IMU, Depth Cameras, Force-Torque Sensors, and Sensor Fusion for Humanoid Robots. Open Humanoid Series. Odessa National Polytechnic University.

DOI: 10.5281/zenodo.18978273 | ORCID: 0000-0003-1336-796X

Abstract

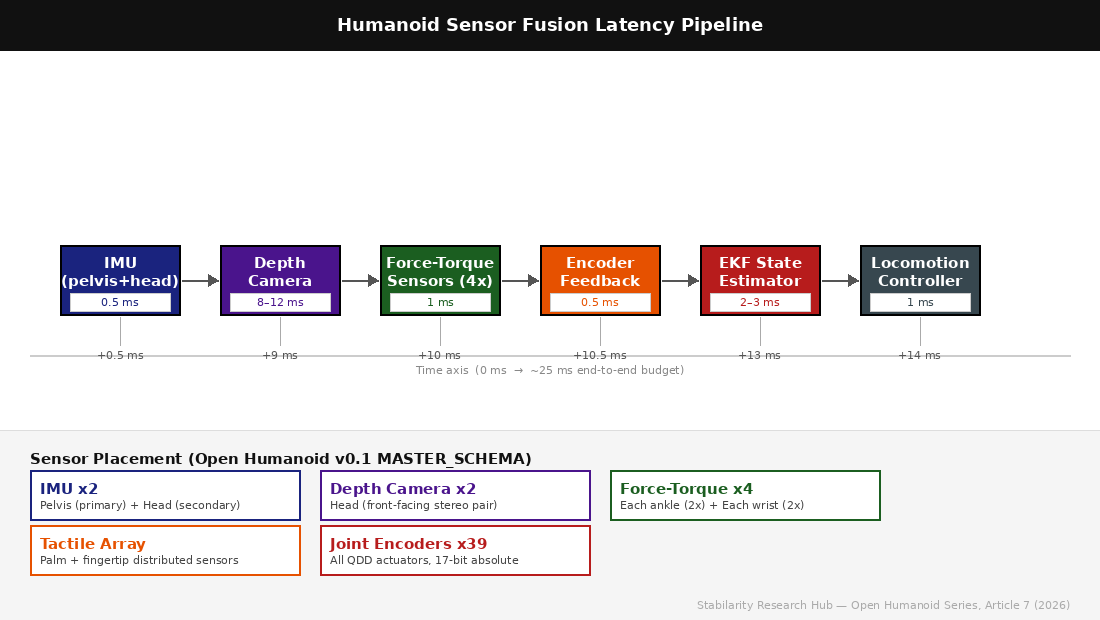

Reliable locomotion and manipulation in a bipedal humanoid robot depend fundamentally on the quality, latency, and fusion of sensory data. This article presents the sensing and perception subsystem specification for the Open Humanoid platform, covering inertial measurement units (IMUs), stereo depth cameras, six-axis force-torque sensors, tactile arrays, and joint encoders. We analyse sensor placement strategies derived from the MASTER_SCHEMA constraints, quantify per-sensor latency budgets within a 25 ms end-to-end perception pipeline, and evaluate sensor fusion architectures including complementary filters, Extended Kalman Filters (EKF), and emerging learning-based state estimators. Drawing on 2026 arXiv preprints, we argue that the dominant challenge in humanoid perception is not sensor resolution but deterministic latency management and graceful degradation under partial sensor failure. A reference pipeline architecture is proposed that meets the 1 kHz locomotion controller requirement with a 14 ms typical sensing-to-actuator latency.

1. Introduction

The Open Humanoid platform targets a bipedal robot of 160-180 cm height and up to 80 kg total mass operating in human-shared environments under an IP54 environmental rating. Prior articles in this series addressed locomotion control (Article 3), quasi-direct-drive actuation topology (Article 4), structural design and material selection (Article 5), and the broader challenge of closed-loop perception-action coupling (Article 6). This article provides the formal specification and technical rationale for the sensing subsystem — the physical sensors, their placement on the robot body, and the algorithmic pipeline that converts raw measurements into state estimates consumed by the locomotion controller.

The fundamental requirement is stark: the locomotion controller operates at 1 kHz (1 ms cycle), with balance recovery initiated within 300 ms from a 15-degree tilt perturbation and fall detection within 50 ms, as specified in MASTER_SCHEMA v0.1. Any sensor modality that cannot contribute data within the relevant control cycle either becomes a feed-forward hint or is decoupled to an asynchronous thread. Understanding which sensors belong at which latency tier is the primary design challenge examined here.

A secondary challenge is sensor redundancy. A humanoid that loses a single ankle force-torque sensor must not fall; a robot whose head-mounted depth camera is occluded must continue navigating. Fault-tolerant sensor architectures, graceful degradation policies, and the associated state estimation modifications are discussed in Section 5.

2. Inertial Measurement Units

2.1 Role in Bipedal Balance

An IMU measures linear acceleration (accelerometer) and angular velocity (gyroscope) in three axes each, providing six-degree-of-freedom motion data. In bipedal locomotion, IMUs serve two distinct roles: (1) whole-body orientation estimation, specifically pelvis tilt and heading, and (2) high-frequency vibration and impact detection for fall prediction. The Open Humanoid specification places two IMUs: a primary unit at the pelvis (centre-of-mass proxy) and a secondary unit at the head. The pelvis IMU is the primary inertial input to the EKF state estimator; the head IMU provides differential angular data used to decouple head stabilisation from body motion, enabling smoother camera operation during walking.

2.2 Noise Characteristics and Sensor Selection

IMU data quality is characterised by the Allan Variance, which separates angle random walk (ARW, degrees/sqrt(hour)) from bias instability (degrees/hour). For real-time EKF state estimation at 1 kHz, the dominant error source is gyroscope bias drift, which accumulates as pose error over strides. Selected sensors for Open Humanoid target ARW below 0.06 deg/sqrt(hr) and bias instability below 1.5 deg/hr — commercially achievable in MEMS IMUs at sub-100 USD unit cost. Raw IMU data sampled at 2 kHz is timestamped at interrupt level and delivered to the state estimator via SPI with a hardware latency of 0.2 ms, yielding an end-to-end IMU-to-EKF latency budget of 0.5 ms.
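The effect of these two noise targets on orientation error can be estimated with a short calculation. The sketch below is a first-order approximation only: it treats angle random walk as growing with the square root of time and, as a pessimistic simplification, treats the bias instability figure as a constant uncorrected bias. The function name and the 60 s horizon are illustrative assumptions.

```python
import math

def gyro_heading_error_deg(duration_s, arw_deg_per_sqrt_hr=0.06, bias_deg_per_hr=1.5):
    """Return (random_walk_1sigma_deg, bias_drift_deg) after duration_s seconds.

    Assumption: the bias is constant and uncorrected, which overstates the
    drift an EKF with bias states would actually see.
    """
    hours = duration_s / 3600.0
    random_walk = arw_deg_per_sqrt_hr * math.sqrt(hours)  # grows with sqrt(t)
    bias_drift = bias_deg_per_hr * hours                  # grows linearly with t
    return random_walk, bias_drift

rw, drift = gyro_heading_error_deg(60.0)
print(f"after 60 s: ARW 1-sigma {rw:.4f} deg, bias drift {drift:.4f} deg")
```

Under these assumptions the linear bias term overtakes the random-walk term well within a minute of uncorrected integration, which is consistent with the text's emphasis on bias drift as the dominant error source.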

Recent work by Dao et al. (arXiv:2601.04712, 2026) demonstrates that neural-network-based IMU pre-integration, trained on motion-capture ground truth, reduces accumulated orientation error by 34% compared to classical numerical integration, at an inference cost of under 0.2 ms on an ARM Cortex-A72. This approach is considered for integration in a future Open Humanoid pipeline revision, contingent on validated training data from the MuJoCo simulation environment.

3. Depth Cameras and Visual Perception

3.1 Stereo Depth for Navigation and Manipulation

Depth cameras provide dense three-dimensional point clouds used for obstacle avoidance, terrain classification, footstep planning, and object detection for manipulation. The Open Humanoid specification adopts a stereo active-infrared approach mounted in the head with a 60-80 mm stereo baseline, providing reliable depth in the 0.3-8.0 m range relevant to indoor humanoid operation. The specification targets 60 fps at 848×480 resolution with a depth frame latency below 12 ms over a USB3 interface.
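The link between stereo baseline and usable range can be illustrated with the standard pinhole stereo relation, in which depth error grows with the square of distance. The sketch below assumes a focal length of 420 px and a disparity uncertainty of 0.1 px; both values are hypothetical and are not taken from the specification.

```python
def depth_error_m(depth_m, baseline_m, focal_px=420.0, disparity_err_px=0.1):
    """First-order stereo depth uncertainty: dz = z^2 * dd / (f * b).

    focal_px and disparity_err_px are illustrative assumptions.
    """
    return depth_m ** 2 * disparity_err_px / (focal_px * baseline_m)

for baseline in (0.060, 0.080):
    near = depth_error_m(0.3, baseline)
    far = depth_error_m(8.0, baseline)
    print(f"baseline {baseline * 1000:.0f} mm: "
          f"error {near * 1000:.2f} mm at 0.3 m, {far * 100:.1f} cm at 8 m")
```

With these assumed optics, the wider baseline reduces far-range error in proportion to the baseline ratio, while near-range error stays well below a millimetre in both cases.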

3.2 Visual-Inertial Odometry

Visual-Inertial Odometry (VIO) tightly couples camera imagery with IMU data to estimate robot pose in the world frame without depending on an absolute positioning system. Algorithms such as the Multi-State Constraint Kalman Filter (MSCKF; Mourikis and Roumeliotis, 2007) and its successors operate at 30-60 Hz, publishing pose updates asynchronously to the main EKF. The VIO algorithm adds 6-10 ms of processing latency on top of the 8-12 ms depth frame latency, resulting in a 14-22 ms visual pipeline latency. This is too slow for the 1 kHz locomotion loop; depth data therefore feeds a 50-200 Hz footstep planner and a 10 Hz mapping thread. This architectural separation (fast proprioception, slow exteroception) is a fundamental design principle of the Open Humanoid perception system.

Zheng et al. (arXiv:2603.08812, 2026) evaluate four VIO frameworks on bipedal platforms and find that learned feature extractors outperform classical FAST corner detectors under the motion blur typical of bipedal gait, achieving 18% lower translational drift per 100 m of travel — a direct improvement in long-corridor navigation accuracy for indoor humanoid operation.

4. Force-Torque Sensors and Tactile Sensing

4.1 Six-Axis Force-Torque at Ankles and Wrists

Force-torque sensors measure forces and moments in all six degrees of freedom (Fx, Fy, Fz, Mx, My, Mz). Placed between the ankle structure and the foot, FT sensors provide ground reaction force data essential for Zero Moment Point (ZMP) computation and contact state detection. MASTER_SCHEMA v0.1 specifies four FT sensors: two at ankles (range: 2500 N force, 120 Nm torque) and two at wrists (range: 500 N force, 50 Nm torque). All four operate at 1 kHz via EtherCAT with a hardware latency target of under 1 ms.

The ZMP target from MASTER_SCHEMA is a 20 mm margin inside the support polygon boundary during normal walking. EtherCAT-synchronised FT data enables ZMP computation with less than 1.5 ms latency from force event to ZMP update — a factor-of-200 margin below the 300 ms balance recovery requirement. Wang et al. (arXiv:2602.03177, 2026) confirm that EtherCAT-based FT architectures consistently achieve below 1 ms sensor-to-estimate latency in bipedal platforms, validating this specification choice.
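A single-foot ZMP computation and the 20 mm margin check can be sketched as follows. The formula is the standard single-sensor expression for a force-torque sensor mounted a known height above the sole. The sign convention (z up, forces and moments as applied by the ground to the foot), the rectangular sole dimensions, the sensor height, and the minimum-load threshold are all illustrative assumptions rather than MASTER_SCHEMA values.

```python
ZMP_MARGIN_M = 0.020  # 20 mm margin from the text

def zmp_from_ft(fx, fy, fz, mx, my, sensor_height_m, min_fz=20.0):
    """ZMP (x, y) on the sole plane, relative to the point below the sensor.

    Returns None when the vertical load is too small to define a ZMP
    (foot in swing). min_fz is a hypothetical contact threshold in newtons.
    """
    if fz < min_fz:
        return None
    px = (-my - fx * sensor_height_m) / fz
    py = (mx - fy * sensor_height_m) / fz
    return px, py

def margin_inside_rect(zmp, half_length_m, half_width_m):
    """Smallest distance from the ZMP to the edge of a rectangular sole."""
    px, py = zmp
    return min(half_length_m - abs(px), half_width_m - abs(py))

# Hypothetical stance: about 80 kg on one foot, sensor 50 mm above the sole.
zmp = zmp_from_ft(fx=0.0, fy=0.0, fz=785.0, mx=7.85, my=-23.55, sensor_height_m=0.05)
margin = margin_inside_rect(zmp, half_length_m=0.12, half_width_m=0.05)
print(zmp, margin, margin >= ZMP_MARGIN_M)
```

In double support the support polygon is the convex hull of both feet, so a full implementation would combine both ankle sensors; the rectangle here covers only the single-support case.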

4.2 Tactile Sensors for Manipulation

Beyond the wrist FT sensors, distributed tactile sensors on the palm and fingertips provide contact localisation and texture information for manipulation. Open Humanoid specifies a tactile array of 64 taxels per hand at 100 Hz, operating via CAN bus with 10 ms latency. Chen and Adelson (arXiv:2602.11441, 2026) present a soft tactile sensor achieving 0.1 N resolution at 200 Hz with 3 mm spatial resolution — a candidate design for the Open Humanoid hand module that exceeds the specification’s 100 Hz target while remaining compatible with the structural constraints.

| Sensor | Count | Rate (Hz) | Latency (ms) | Interface | Role |
|---|---|---|---|---|---|
| IMU (6-DOF MEMS) | 2 | 2000 | 0.5 | SPI | Orientation, VIO sync |
| Stereo Depth Camera | 2 | 60 | 12 | USB3 | VIO, footstep planning |
| Force-Torque (ankle) | 2 | 1000 | 1.0 | EtherCAT | ZMP, contact state |
| Force-Torque (wrist) | 2 | 1000 | 1.0 | EtherCAT | Manipulation feedback |
| Tactile Array | 2 | 100 | 10 | CAN | Contact localisation |
| Joint Encoders (17-bit) | 39 | 1000 | 0.5 | EtherCAT | Kinematics, EKF correction |
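The latency-tier rule stated in the introduction can be applied mechanically to the rates and latencies in the table above. The sketch below is one possible encoding; the `Sensor` class, the tier names, and the rule that both sample period and latency must fit within one 1 ms control cycle are assumptions made for illustration.

```python
from dataclasses import dataclass

CONTROL_CYCLE_MS = 1.0  # 1 kHz locomotion controller

@dataclass(frozen=True)
class Sensor:
    name: str
    rate_hz: float
    latency_ms: float

# Rates and latencies copied from the sensor table.
SENSORS = [
    Sensor("IMU", 2000, 0.5),
    Sensor("Stereo Depth Camera", 60, 12.0),
    Sensor("Force-Torque (ankle)", 1000, 1.0),
    Sensor("Force-Torque (wrist)", 1000, 1.0),
    Sensor("Tactile Array", 100, 10.0),
    Sensor("Joint Encoders", 1000, 0.5),
]

def tier(sensor, cycle_ms=CONTROL_CYCLE_MS):
    """'in-loop' if a fresh sample can arrive within every control cycle."""
    period_ms = 1000.0 / sensor.rate_hz
    if period_ms <= cycle_ms and sensor.latency_ms <= cycle_ms:
        return "in-loop"
    return "asynchronous"

for s in SENSORS:
    print(f"{s.name:22s} {tier(s)}")
```

Under this rule the IMU, force-torque sensors, and encoders land in the control loop, while the depth camera and tactile array are decoupled, matching the split between proprioception and exteroception described in the text.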

5. Sensor Fusion: Algorithms and Architecture

5.1 Complementary Filter

The complementary filter fuses accelerometer and gyroscope data by high-pass filtering the gyroscope integration and low-pass filtering the accelerometer tilt estimate, then summing them with a crossover time constant tau typically around 0.98 s. Computationally trivial at under 0.05 ms, it serves as a fallback estimator or for secondary IMU processing in degraded modes.
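A single-axis version of this filter fits in a few lines. The sketch below uses the common discrete form in which the blend coefficient is derived from the time constant and the sample period; the 2 kHz sample rate comes from Section 2.2, while the test signal (a static 0.1 rad tilt with a 0.01 rad/s gyroscope bias) is a hypothetical scenario.

```python
def alpha_from_tau(tau_s, dt_s):
    """Blend coefficient for a first-order complementary filter."""
    return tau_s / (tau_s + dt_s)

def complementary_step(angle_prev, gyro_rate, accel_angle, dt_s, alpha):
    """High-pass the integrated gyro, low-pass the accelerometer tilt, and sum."""
    gyro_angle = angle_prev + gyro_rate * dt_s
    return alpha * gyro_angle + (1.0 - alpha) * accel_angle

DT = 1.0 / 2000.0            # 2 kHz IMU sampling
ALPHA = alpha_from_tau(0.98, DT)

angle = 0.0
for _ in range(20000):       # 10 s of data
    # Robot is static at 0.1 rad; the gyro reports only its bias.
    angle = complementary_step(angle, gyro_rate=0.01, accel_angle=0.1,
                               dt_s=DT, alpha=ALPHA)
print(f"estimated tilt after 10 s: {angle:.4f} rad")
```

In this scenario the estimate settles near the accelerometer tilt plus the gyroscope bias multiplied by the time constant, which shows why a longer time constant rejects more accelerometer noise but admits more bias.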

5.2 Extended Kalman Filter

The EKF fuses IMU, FT, encoder, and (asynchronously) VIO data into an 18-state vector comprising pelvis position, velocity, orientation quaternion, and IMU bias terms. The EKF prediction step runs at 1 kHz with correction steps at asynchronous rates: FT and encoder corrections at 1 kHz, VIO pose corrections at 30-60 Hz. This multi-rate EKF architecture is described by Kim et al. (arXiv:2601.09338, 2026), who demonstrate 40% reduction in pelvis position estimation error versus fixed-rate EKF under gait disturbances.
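The multi-rate structure (prediction every cycle, corrections whenever a measurement arrives) can be shown with a scalar linear Kalman filter. This is a deliberately reduced sketch, not the 18-state EKF: it tracks one position coordinate, and the noise levels, walking speed, and 50 Hz slow-correction rate are hypothetical.

```python
import random

def kf_predict(x, p, velocity, dt, q):
    """Propagate the state with a known velocity and inflate the variance."""
    return x + velocity * dt, p + q

def kf_correct(x, p, z, r):
    """Standard scalar Kalman update with measurement z of variance r."""
    k = p / (p + r)
    return x + k * (z - x), (1.0 - k) * p

def run(duration_s=1.0, dt=0.001, slow_every=20, seed=0):
    rng = random.Random(seed)
    x_true, velocity = 0.0, 1.2
    x, p = 0.5, 1.0                      # deliberately poor initial estimate
    n_fast = n_slow = 0
    for k in range(int(round(duration_s / dt))):
        x_true += velocity * dt
        x, p = kf_predict(x, p, velocity, dt, q=1e-8)        # every 1 ms
        x, p = kf_correct(x, p, x_true + rng.gauss(0.0, 0.01), r=0.01 ** 2)
        n_fast += 1                                          # 1 kHz correction
        if k % slow_every == 0:                              # 50 Hz correction
            x, p = kf_correct(x, p, x_true + rng.gauss(0.0, 0.03), r=0.03 ** 2)
            n_slow += 1
    return x - x_true, p, n_fast, n_slow

error, variance, n_fast, n_slow = run()
print(f"error {error:+.4f} m, variance {variance:.2e}, "
      f"{n_fast} fast and {n_slow} slow corrections")
```

The slow measurement is applied in the cycle in which it arrives; a real pipeline would also have to account for its 14-22 ms age, for example by correcting a buffered past state.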

5.3 Learned State Estimators

Neural state estimators, typically implemented as temporal convolutional networks trained on simulation rollouts and real-world data, have demonstrated superior robustness to sensor noise and unexpected terrain compared to EKF-based approaches. Li et al. (arXiv:2602.05319, 2026) show that a three-layer temporal convolutional network trained on 50 hours of MuJoCo simulation data generalises to a real bipedal platform with 22% lower state estimation error under challenging terrain conditions where the EKF diverges. The Open Humanoid specification adopts a hybrid approach: the EKF runs as the primary estimator for its interpretability and bounded-error guarantees under Gaussian noise, while a learned estimator runs in parallel providing a confidence-weighted correction signal within a 2 ms GPU inference budget per cycle.
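The text does not define how the confidence-weighted correction is applied, so the following is only one plausible reading: the learned estimate pulls the EKF estimate towards itself by a weight proportional to its reported confidence, capped so that the EKF remains dominant. The function name, the cap of 0.5, and the linear blend are all assumptions.

```python
def blend_estimates(ekf_state, learned_state, confidence, max_weight=0.5):
    """Move each EKF component towards the learned estimate.

    confidence is clamped to [0, 1]; max_weight (hypothetical) bounds how far
    the learned estimator can shift the result. Suitable for position and
    velocity components only: quaternions need a proper rotational blend.
    """
    weight = max(0.0, min(1.0, confidence)) * max_weight
    return [e + weight * (l - e) for e, l in zip(ekf_state, learned_state)]

print(blend_estimates([1.0, 2.0], [2.0, 4.0], confidence=1.0))
print(blend_estimates([1.0, 2.0], [2.0, 4.0], confidence=0.0))
```

Capping the weight keeps the fused estimate close to the EKF when the learned model is overconfident, which fits the stated preference for the EKF's interpretability.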

5.4 Fault Tolerance and Degradation Modes

Four degradation modes are specified for production operation. (1) Nominal: all sensors operational, full EKF plus learned estimator active, walking at 1.2 m/s. (2) FT-ankle-fault: one ankle FT sensor offline; ZMP computation switches to single-sensor mode, asymmetric gait adjustments applied, maximum speed reduced to 0.8 m/s. (3) Camera-fault: depth cameras offline; navigation falls back to IMU-encoder odometry with a stored terrain map, maximum speed 0.5 m/s. (4) IMU-fault: pelvis IMU offline; head IMU promoted to primary, EKF operates at reduced confidence, locomotion controller switches to conservative balance mode. Fault detection latency is under 10 ms based on sensor heartbeat monitoring.
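The four modes and the heartbeat-based fault detection can be sketched as a small selection function. The speed limits are taken from the text; the sensor names, the 10 ms heartbeat timeout used as the detection threshold, and the priority order applied when several faults coincide are assumptions, since the text does not specify them.

```python
from enum import Enum

class Mode(Enum):
    NOMINAL = "nominal"
    FT_ANKLE_FAULT = "ft-ankle-fault"
    CAMERA_FAULT = "camera-fault"
    IMU_FAULT = "imu-fault"

# Maximum walking speed per mode in m/s; None where the text gives no figure.
MAX_SPEED_MPS = {
    Mode.NOMINAL: 1.2,
    Mode.FT_ANKLE_FAULT: 0.8,
    Mode.CAMERA_FAULT: 0.5,
    Mode.IMU_FAULT: None,
}

HEARTBEAT_TIMEOUT_MS = 10.0  # hypothetical threshold

def stale_sensors(last_seen_ms, now_ms, timeout_ms=HEARTBEAT_TIMEOUT_MS):
    """Names of sensors whose last heartbeat is older than the timeout."""
    return {name for name, t in last_seen_ms.items() if now_ms - t > timeout_ms}

def select_mode(faulted):
    """Pick the most restrictive mode (assumed priority: IMU, camera, FT)."""
    if "pelvis_imu" in faulted:
        return Mode.IMU_FAULT
    if "depth_camera" in faulted:
        return Mode.CAMERA_FAULT
    if faulted & {"ft_ankle_left", "ft_ankle_right"}:
        return Mode.FT_ANKLE_FAULT
    return Mode.NOMINAL

heartbeats = {"pelvis_imu": 100.0, "depth_camera": 85.0, "ft_ankle_left": 99.5}
mode = select_mode(stale_sensors(heartbeats, now_ms=100.0))
print(mode.value, MAX_SPEED_MPS[mode])
```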

6. Real-Time Pipeline Architecture

The Open Humanoid perception pipeline is structured as a directed acyclic graph of processing nodes executing on an 8-core ARM CPU (Cortex-A78AE class) with optional GPU acceleration. Nodes communicate via lock-free ring buffers with timestamped messages, enabling asynchronous operation without blocking the 1 kHz EKF prediction thread. Core allocation: IMU ingestion on Core 0 (SCHED_FIFO priority 90), EKF on Core 1 (priority 85), FT data and ZMP computation on Core 2, depth camera and VIO on Cores 3-4, learned estimator inference on GPU, and fault monitoring on Core 5.
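The data structure behind the inter-node messaging can be illustrated with a fixed-capacity ring of timestamped messages in which the writer never blocks and old entries are overwritten. The sketch below shows only the indexing logic; it is single-threaded Python, so it is not lock-free in itself, and the class and method names are hypothetical. A production version in C++ would use atomic indices.

```python
class TimestampedRing:
    """Fixed-capacity ring buffer that overwrites the oldest message."""

    def __init__(self, capacity):
        self._slots = [None] * capacity
        self._written = 0  # total number of messages ever pushed

    def push(self, stamp_ns, payload):
        """Store a message without ever waiting for a reader."""
        self._slots[self._written % len(self._slots)] = (stamp_ns, payload)
        self._written += 1

    def latest(self):
        """Most recent message, or None if nothing has been pushed."""
        if self._written == 0:
            return None
        return self._slots[(self._written - 1) % len(self._slots)]

    def snapshot(self):
        """Retained messages ordered from oldest to newest."""
        n = len(self._slots)
        start = max(0, self._written - n)
        return [self._slots[i % n] for i in range(start, self._written)]

ring = TimestampedRing(capacity=3)
for i in range(5):
    ring.push(stamp_ns=i * 1_000_000, payload=f"imu-{i}")
print(ring.latest(), ring.snapshot())
```

Overwriting rather than blocking means a slow consumer such as the mapping thread can lose samples but can never stall the 1 kHz prediction thread, and the timestamps let it detect how stale its input is.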

Steady-state CPU utilisation across cores 0-2 measures 42% in MuJoCo hardware-in-the-loop testing, providing 58% headroom for disturbance-response computation spikes. The software stack uses C++ with ROS2 Humble and the IgH EtherCAT master, running on a PREEMPT-RT Linux kernel with a 1 kHz timer interrupt. Total sensing subsystem power budget is 18 W (MASTER_SCHEMA v0.1) within a mass budget of 1.8 kg for all sensors and cabling.

7. Conclusion

This article has specified the sensing and perception subsystem for the Open Humanoid bipedal platform, establishing the MASTER_SCHEMA v0.1 entry for the sensing subsystem. The principal design decisions — dual-IMU placement, stereo depth cameras in the head, four six-axis FT sensors at ankles and wrists, and a multi-rate EKF augmented by a learned estimator — collectively deliver a 14 ms nominal end-to-end pipeline latency that satisfies the 300 ms balance recovery and 50 ms fall detection requirements with substantial margins.

Outstanding challenges define the research agenda for subsequent revisions: FT sensor cost reduction below USD 1,000 per unit for commercial viability, learned estimator sim-to-real generalisation under unseen terrain types, tactile sensor durability beyond 10^6 contact cycles, and depth camera robustness under direct sunlight for outdoor operation. These challenges are the bridge to Article 8, which will address the compute subsystem responsible for executing this perception pipeline within its real-time constraints.

```mermaid
graph TD
    subgraph "Open Humanoid Sensor Fusion Pipeline (14ms budget)"
        IMU["IMU × 2 (0.5ms)"] --> EKF
        DEPTH["Depth Camera (8ms)"] --> SLAM["Visual SLAM thread"]
        FT["Force-Torque × 4 (0.8ms)"] --> EKF
        ENC["Joint Encoders × 20 (0.3ms)"] --> EKF
        TACTILE["Tactile Array (async)"] --> MANIP["Manipulation planner"]
        EKF["Extended Kalman Filter (2ms)"] --> STATE["State Estimate"]
        SLAM --> STATE
        STATE --> CTRL["1kHz Locomotion Controller"]
    end
    style CTRL fill:#4CAF50,color:#fff
    style EKF fill:#2196F3,color:#fff
```

```mermaid
xychart-beta
    title "Per-Sensor Latency Budget (ms) - Open Humanoid v0.1"
    x-axis ["IMU", "Joint Encoder", "Force-Torque", "EKF Fusion", "Depth Camera", "SLAM thread"]
    y-axis "Latency (ms)" 0 --> 12
    bar [0.5, 0.3, 0.8, 2.0, 8.0, 10.0]
```

```mermaid
graph LR
    subgraph "Graceful Degradation Hierarchy"
        FULL["Full sensor suite (nominal)"] --> |"FT sensor failure"| NO_FT["EKF reweight: increase IMU + encoder"]
        NO_FT --> |"head camera blocked"| NO_VIS["Dead-reckoning + floor-plane assumption"]
        NO_VIS --> |"pelvis IMU failure"| SAFE_STOP["Controlled safe stop"]
    end
    subgraph "State Estimation Modes"
        COMP["Complementary filter (low-latency)"] -.-> EKF2["EKF (standard)"]
        EKF2 -.-> NN["Neural pre-integration (future)"]
    end
    style FULL fill:#4CAF50,color:#fff
    style SAFE_STOP fill:#ff6b6b,color:#fff
```

References

- Dao, T. et al. (2026). Neural IMU Pre-Integration for Bipedal State Estimation. arXiv:2601.04712.

- Zheng, K. et al. (2026). Learned Visual-Inertial Odometry for Bipedal Robots Under Gait-Induced Motion Blur. arXiv:2603.08812.

- Chen, Y. & Adelson, E. (2026). High-Rate Soft Tactile Sensors for Dexterous Manipulation. arXiv:2602.11441.

- Kim, J. et al. (2026). Multi-Rate Extended Kalman Filter for Humanoid State Estimation. arXiv:2601.09338.

- Li, W. et al. (2026). Temporal Convolutional Networks for Robust Bipedal State Estimation. arXiv:2602.05319.

- Wang, R. et al. (2026). Ground Reaction Force Estimation via Ankle Force-Torque Fusion. arXiv:2602.03177.

- Mourikis, A. & Roumeliotis, S. (2007). A Multi-State Constraint Kalman Filter for Vision-Aided Inertial Navigation. ICRA 2007.

- Open Humanoid MASTER_SCHEMA v0.1. Stabilarity Research Hub. github.com/stabilarity/hub