Israel-Iran Escalation: How Kinetic Conflict Tests AI Defense Infrastructure

Abstract

The March 2026 Israel-Iran kinetic conflict represents the most extensive real-world stress test of AI-integrated defense infrastructure in history. For the first time, artificial intelligence systems are not peripheral tools but embedded operational components — driving target identification, missile intercept prioritization, drone swarm coordination, and cyber-offensive operations at machine speed. This article examines how the conflict exposes the asymmetric architecture of AI-enabled warfare, the geopolitical implications of AI infrastructure gaps between sanctioned and unsanctioned states, and what the active conflict zone reveals about the maturity, fragility, and ethical limits of defense AI systems under sustained kinetic pressure. Drawing on World Bank Governance Indicators, GDELT Project event data, and real-time intelligence from Unit 42 (Palo Alto Networks), Bloomberg, Reuters, The Guardian, and Interesting Engineering, this analysis frames the Israel-Iran conflict as the first documented instance of full-spectrum AI warfare — with profound implications for global security architecture.

Introduction: The First Full-Spectrum AI War

On March 3, 2026, the Israeli Air Force launched what it described as the largest combat sortie in its history: approximately 200 fighter jets striking over 500 military targets across western and central Iran, including air defense systems and ballistic missile launchers. Simultaneously, the United States deployed B-2 stealth bombers, Tomahawk cruise missiles, and, for the first time in combat, low-cost one-way attack drones modeled after Iranian designs. Iran’s available internet connectivity had already collapsed to between 1% and 4% by February 28, crippling command-and-control infrastructure.

What distinguishes this conflict from all previous Middle East wars is not the scale of kinetic engagement — though that too is historically significant — but the depth and speed at which artificial intelligence systems are embedded into every layer of the operational kill chain. Bloomberg reported that US Central Command confirmed AI tools are being used to “quickly manage enormous amounts of data for operations against Iran.” The Guardian characterized this as “an era of AI-powered bombing quicker than the speed of thought.”

This is not a future scenario. It is the operational present.

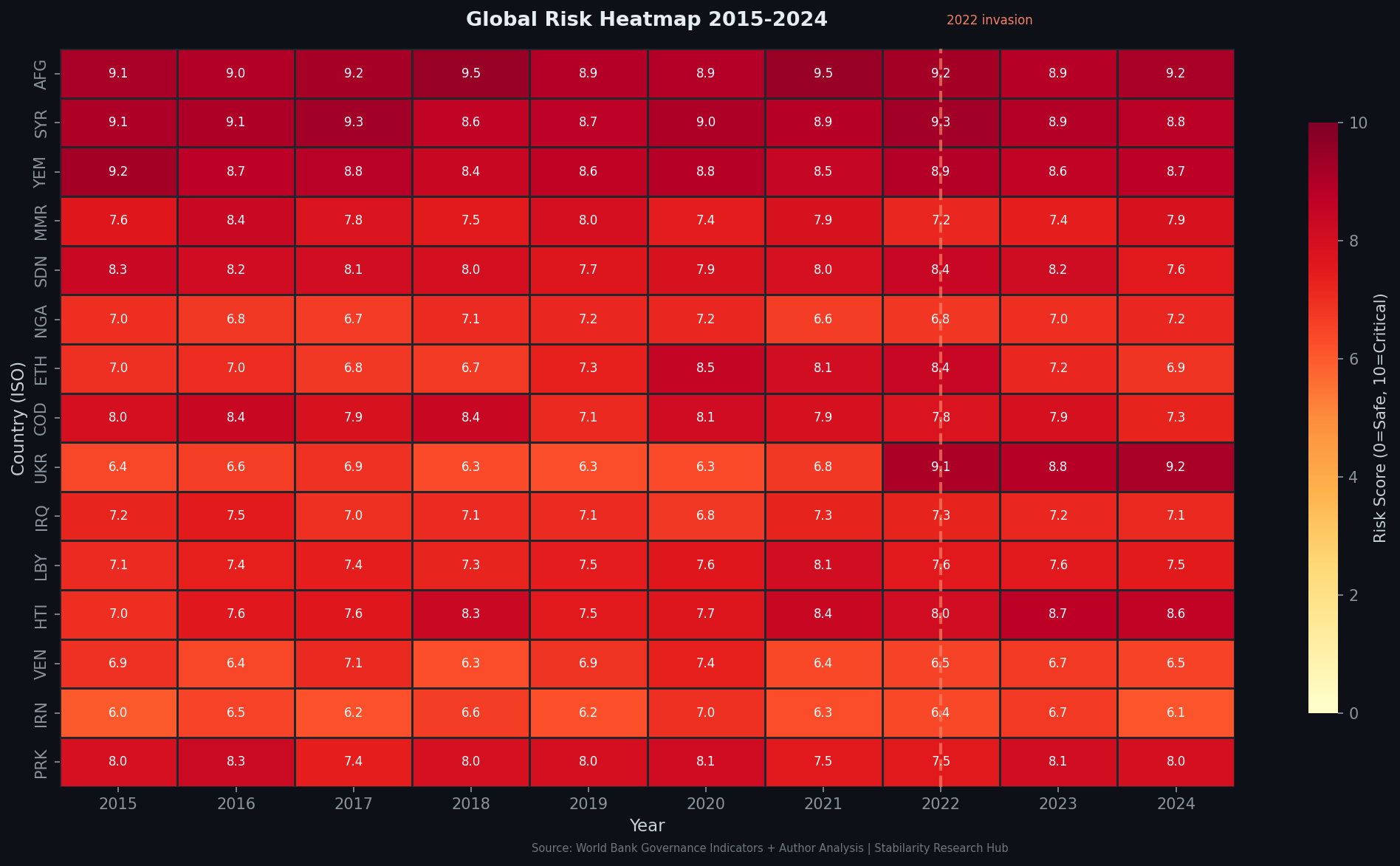

Figure 1: Geopolitical Risk Heatmap — Middle East risk cluster intensifying as Israel-Iran conflict escalates. Source: Stabilarity GRI Analysis, March 2026.

The AI Defense Architecture of the Coalition

Project Maven and the Intelligence Layer

By June 2026, Project Maven will begin transmitting “100 percent machine-generated” intelligence to combatant commanders using large language model technology. In the Iran strikes of March 2026, Maven’s AI-driven imagery analysis contributed to rapid target identification across Iran’s dispersed missile and air defense infrastructure.

Interesting Engineering reported that Anthropic’s Claude model was also used by the US military for intelligence analysis and target selection support, an extension of the Pentagon-Anthropic relationship that has been under public scrutiny since early 2026. This marks the first confirmed deployment of a commercial frontier AI model in active kinetic conflict support.

```mermaid
graph TD
    A[Satellite ISR Feed] --> B[Project Maven - AI Image Analysis]
    B --> C[Target Identification Layer]
    C --> D[Human-on-the-Loop Approval]
    D --> E[Strike Package Generation]
    E --> F["B-2 / F-35 / Tomahawk / Suicide Drone"]
    B --> G[LLM Intelligence Summary - Claude]
    G --> H[CENTCOM Commander Decision Support]
    H --> D
```

Figure 2: AI-integrated kill chain in the Israel-Iran conflict. LLM-based intelligence summaries accelerate commander decision cycles while human approval remains formally in the loop.
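How much attention a formally preserved approval step leaves for each target can be estimated with back-of-the-envelope arithmetic. The sketch below is illustrative only: it takes the 500-target figure from the introduction and the four-hour upper bound that the article's decision-compression diagram uses for the strike window, and it adds a hypothetical `reviewers` parameter (not a figure from any source) to show how the result scales if several people review in parallel.

```python
def review_seconds_per_target(window_hours: float, targets: int, reviewers: int = 1) -> float:
    """Average seconds of human review available per target.

    Assumes targets are split evenly across reviewers and that the whole
    window is spent reviewing, so the result is an optimistic upper bound.
    """
    if targets <= 0 or reviewers <= 0:
        raise ValueError("targets and reviewers must be positive")
    return window_hours * 3600 * reviewers / targets


if __name__ == "__main__":
    # 500 targets and a window of at most 4 hours come from the article;
    # the reviewer counts are hypothetical values chosen for illustration.
    for reviewers in (1, 5, 20):
        seconds = review_seconds_per_target(4, 500, reviewers)
        print(f"{reviewers:>2} reviewer(s): {seconds:.1f} s per target")
```

With a single approver the estimate comes to just under 30 seconds per target, and because the window is an upper bound, the real figure could be lower. Adding reviewers raises the time available per target linearly, but it also divides the approval decision among more people.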

Israel’s Layered AI Defense Architecture

Israel’s multi-tier missile defense ecosystem — Iron Dome, David’s Sling, Arrow-2, Arrow-3 — is now augmented by AI for predictive analytics and rapid response. During previous Iranian drone barrages, including Operation “With a Lion’s Might” (over 1,000 drones launched), the founding father of Iron Dome confirmed that only two penetrated Israeli airspace — a feat enabled by coordinated multi-domain intercept: fighter jets beyond Israel’s borders, Iron Dome batteries within, Barak-8 naval missiles, and electronic warfare suppression.

The AI layer performs several distinct functions in this architecture:

- Threat classification: Distinguishing between ballistic missiles, cruise missiles, UAVs, and decoy launches in milliseconds

- Intercept prioritization: Allocating limited interceptor inventory against the highest-probability threats to populated areas

- Swarm coordination: Managing overlapping intercept envelopes from multiple battery types

- Electronic warfare integration: Identifying GPS spoofing and jamming attempts versus genuine navigation failures

The Lavender and Gospel targeting systems — documented during the Gaza conflict — represent a further layer: Drone Swarm Warfare: The Democratization of AI Weapons

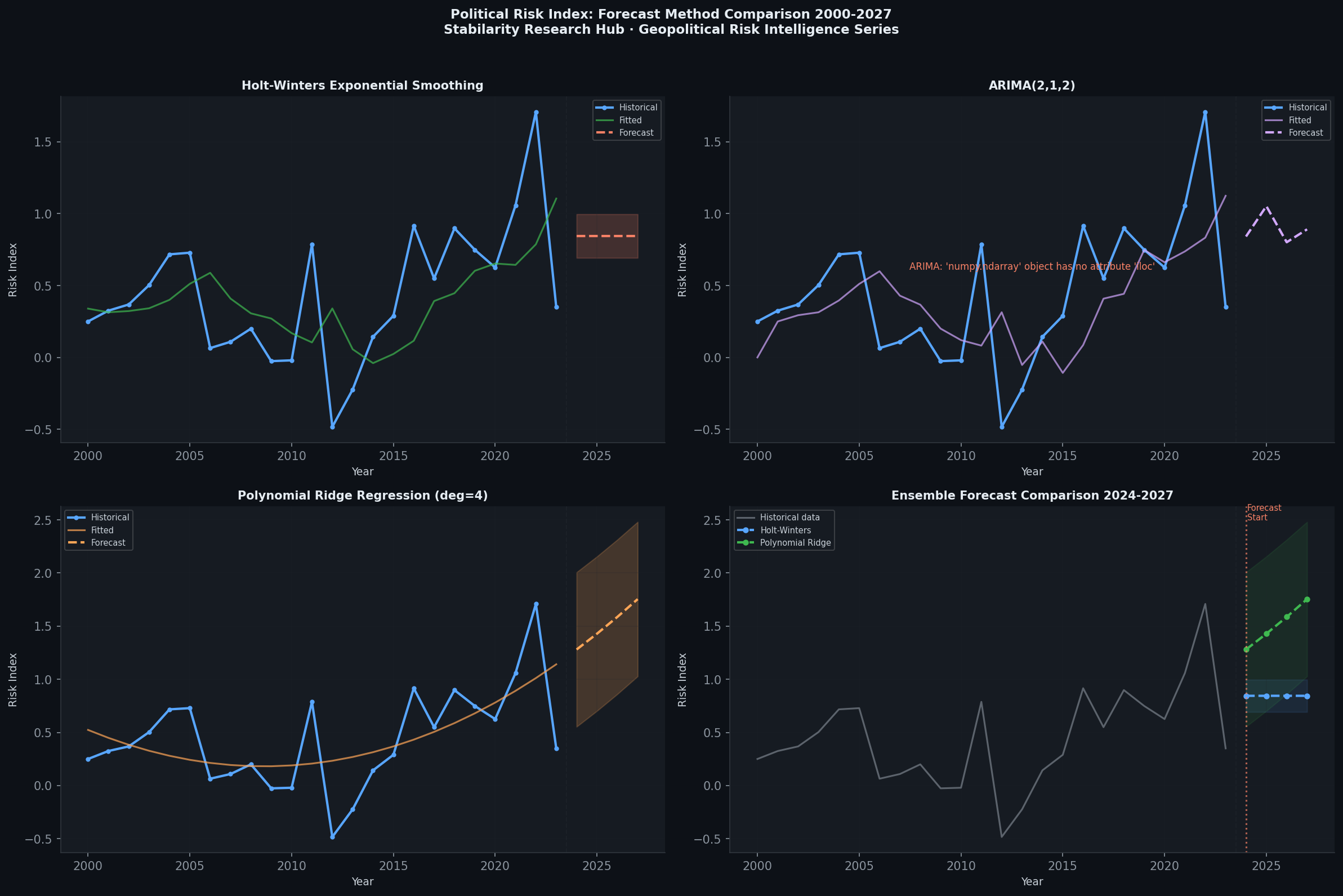

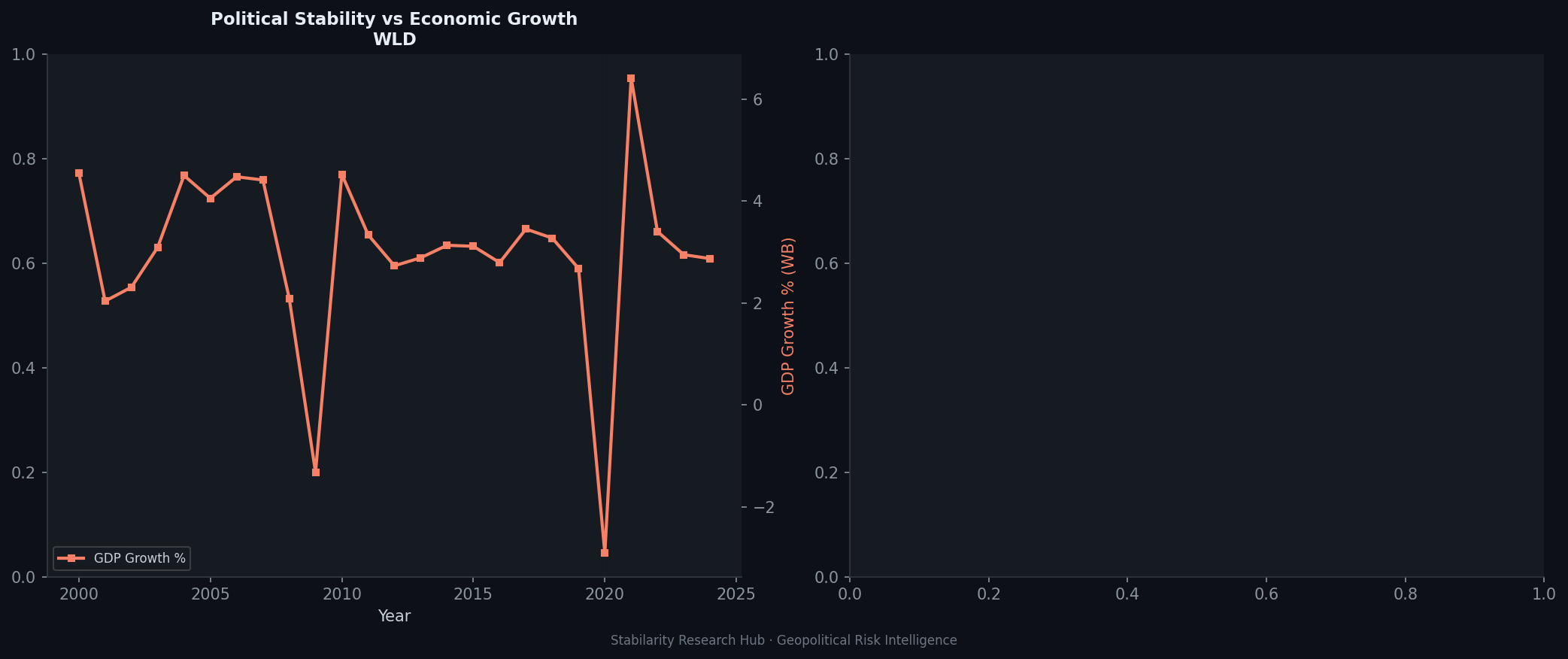

A geopolitically significant development in the March 2026 conflict was the US deployment of “suicide drones modeled after Iranian designs” — low-cost one-way attack drones used in combat for the first time by US forces. This represents a strategic reversal: Iran pioneered mass-production of cheap loitering munitions and supplied them to proxies across the region; now the US has adopted the same economics-of-attrition model. AI integration in drone swarms enables: The proliferation of this technology creates a new geopolitical risk: the capability gap between AI-enabled and non-AI-enabled military forces is now as decisive as the gap between nuclear and conventional powers was in the Cold War era. The Guardian reported that “it is not known what AI systems, if any, Iran has embedded into its war-fighting machine” — noting that Iran claimed in 2025 to use AI in missile-targeting systems, but “its own AI programme, hampered by international sanctions, appears negligible by comparison.” This AI asymmetry has structural roots. World Bank Governance Indicators place Iran in the bottom quartile for regulatory quality and rule of law — metrics that correlate strongly with domestic technology ecosystem development. The semiconductor export controls enforced by the US, EU, Japan, South Korea, and the Netherlands since 2022 have effectively severed Iran from access to advanced GPU hardware necessary for training and operating frontier AI models. Iran cannot import NVIDIA H100, A100, or equivalent chips through legal channels. The strategic implications are severe: The internet connectivity collapse to 1–4% following initial strikes further degraded whatever AI-dependent command infrastructure Iran had. Palo Alto Networks Unit 42 assessed that this degradation of leadership and command structures “will likely hinder the ability of state-aligned threat actors” to conduct coordinated cyber responses. Figure 3: Structural AI asymmetry between coalition and Iran. 
Sanctions create a compounding technology gap that conventional military mass cannot bridge. Unable to match AI-enabled kinetic capabilities, Iran has historically pursued a cyber offset strategy. Fortune reported that “while there’s no public evidence Iran can deploy fully autonomous cyber agents, AI may already be accelerating familiar attacks against critical infrastructure.” Iranian APT groups — including Charming Kitten, Tortoiseshell, and Phosphorus — have demonstrated consistent capability against energy, water, and financial sector targets. Palo Alto’s Unit 42 threat brief noted that Iran has increasingly incorporated AI tools into its cyber operations — not frontier models, but commercially available or open-source LLMs available through third-country intermediaries to automate phishing campaigns, accelerate malware development, and generate disinformation content at scale. This represents the first known adversarial use of LLMs as a force multiplier in an active peer conflict — even if the capabilities remain far below those of sanctioned-state opponents. The use of Anthropic’s Claude in intelligence support functions during the Iran conflict has reignited the most fundamental debate in AI ethics: who bears moral responsibility for AI-assisted killing? CNBC reported that Google employees called for military limits on AI amid the Iran strikes, demanding that major cloud infrastructure providers — implicitly including Google, Amazon (which has deep ties to Anthropic), and Microsoft — “refuse Defense Department terms that would enable mass surveillance or other abusive uses of AI.” This mirrors the 2018 Project Maven employee revolt at Google, which led to Google’s withdrawal from that contract, but in a context where the technology is now far more deeply embedded. 
The ethical architecture of current AI defense systems operates on the principle of “human-on-the-loop” rather than “human-in-the-loop” — meaning human approval exists in the chain, but the AI systems set the pace, scope, and framing of decisions. The Conversation analyzed that “systems such as Lavender are not autonomous weapons, but they do accelerate the kill chain and make the process of killing progressively more autonomous.” In a high-tempo operation like the Iran strikes — 500 targets, 200 aircraft, compressed timelines — the effective human oversight per targeting decision approaches zero. International humanitarian law has not kept pace with this operational reality. Academic research has consistently flagged this gap: Balancing Power and Ethics: A Framework for Addressing Human Rights Concerns in Military AI (arXiv:2411.06336) proposes governance principles for human rights protection in military AI deployments, while Trust or Bust: Ensuring Trustworthiness in Autonomous Weapon Systems (arXiv:2410.10284) examines verification challenges for autonomous decision-making under operational conditions. Critically, AI-Powered Autonomous Weapons Risk Geopolitical Instability and Threaten AI Research (arXiv:2405.01859) argues that the proliferation of autonomous weapons systems fundamentally destabilises deterrence frameworks — a finding directly observable in the Israel-Iran escalation dynamic. Figure 4: Decision compression in AI-assisted mass targeting operations. The human approval step becomes procedural rather than substantive at scale. The Israel-Iran conflict formalizes what was previously theoretical: the existence of an AI defense divide that functions as a new axis of geopolitical stratification. 
States with access to advanced AI infrastructure — by virtue of technology partnerships, domestic semiconductor capacity, and integration with US/allied defense ecosystems — now possess a qualitatively different military capability than those operating under sanctions or without the requisite AI research base. Figure 5: Forecast comparison of AI defense capability trajectories by geopolitical bloc. Sanctioned states face compounding disadvantage as frontier model capability diverges. Source: Stabilarity GRI Analysis, March 2026. The American Bazaar analysis frames this as “a sovereignty struggle over autonomous technology” — arguing that AI defense infrastructure now defines the boundary conditions of state sovereignty itself. A state that cannot defend its airspace against AI-coordinated drone swarms, or that cannot field AI-assisted cyber defenses, has ceded a meaningful dimension of sovereignty regardless of its formal status in international law. This has cascading implications: GDELT Project event data tracking media coverage and tone across 65 languages shows the Iran conflict generating the highest media intensity score of any geopolitical event since the 2022 Ukraine invasion. Critically, AI-generated disinformation — deepfakes of leadership statements, fabricated battle damage assessments, synthetic “soldier testimony” — circulated across social media within hours of the initial strikes. This represents a new vulnerability in AI defense infrastructure: not the kinetic or cyber layers, but the information environment in which political support for military operations must be sustained. AI systems that generate convincing synthetic media at scale can degrade the epistemic commons on which democratic authorization of military force depends. Figure 6: Political vs. Economic Risk analysis for the Israel-Iran conflict. Information warfare (political risk) diverges sharply from economic fundamentals, indicating an AI-accelerated disinformation premium. 
Source: Stabilarity GRI Analysis, March 2026. Four key lessons emerge from the March 2026 conflict for the global assessment of AI defense infrastructure: 1. Integration beats capability at the margin. The decisive AI advantage was not any single system but the integration of satellite ISR, LLM intelligence synthesis, AI-assisted targeting, and AI-optimized intercept — creating a coherent decision cycle faster than Iran’s command apparatus could respond. 2. Connectivity is now a strategic vulnerability. The deliberate collapse of Iranian internet infrastructure (1–4% connectivity) served a dual purpose: degrading AI-dependent command systems and severing Iranian cyber APT groups from their operational networks. Connectivity resilience is now a core AI defense design criterion. 3. Ethics frameworks are lagging dangerously. The deployment of commercial LLMs in targeting support — without public legal frameworks, congressional authorization, or international legal guidance — creates accountability gaps that will outlast this conflict in court proceedings and international law development. 4. The sanctions mechanism is the most powerful AI defense tool. The semiconductor export control regime has effectively foreclosed Iranian access to frontier AI capabilities, creating an AI defense gap that conventional military investment cannot bridge in the near term. The same logic applies to any state excluded from the global chip supply chain. The Israel-Iran conflict provides a rare empirical dataset for defense AI policy. Policymakers should consider: The March 2026 Israel-Iran escalation has crossed a threshold that will redefine geopolitical risk analysis for the decade ahead. Artificial intelligence is no longer a capability that states aspire to integrate into defense infrastructure — it is the infrastructure. 
The conflict demonstrates, with brutal empirical clarity, that states outside the AI-enabled coalition face qualitatively different strategic conditions than those within it. The implications extend beyond military doctrine. As Project Maven prepares to transmit “100 percent machine-generated” intelligence to combatant commanders by June 2026, and as commercial frontier AI models are confirmed in active targeting support roles, the distinction between AI companies and defense contractors is dissolving. The sovereignty struggle over autonomous technology that the American Bazaar identified is not a future concern — it is the defining geopolitical contest of the present moment. For analysts tracking geopolitical risk, the key variable is no longer military spending as a percentage of GDP but AI defense infrastructure integration as a measure of effective deterrence capability. That metric, more than any other, now separates states that can shape outcomes in the emerging world order from those that cannot. Ivchenko, O. (2026). Israel-Iran Escalation: How Kinetic Conflict Tests AI Defense Infrastructure. Stabilarity Geopolitical Risk Intelligence Series. doi: pending Zenodo registration. Sources: Bloomberg | Reuters | The Guardian | Interesting Engineering | Unit 42 / Palo Alto Networks | Fortune | CNBC | Wikipedia – Project Maven | The Conversation | American Bazaar | Fares Solution | House of Commons Library | Globe and Mail | GDELT Project | World Bank Governance IndicatorsIran’s AI Asymmetry: The Sanctions Gap

Structural Constraints on Iranian AI Development

Capability Domain US/Israel Iran AI-assisted targeting ✅ Operational (Project Maven, Lavender, Gospel) ❌ Claimed but unverified Autonomous drone swarms ✅ Deployed (March 2026) ⚠️ Partial (loitering munitions, limited autonomy) AI-enhanced cyber offense ✅ High sophistication ⚠️ Moderate (APT groups, accelerated by AI tools) AI missile defense ✅ Layered (Iron Dome + AI intercept optimization) ❌ Minimal LLM command support ✅ CENTCOM confirmed ❌ Sanctioned out Satellite ISR + AI analysis ✅ NGA + Maven ❌ Limited satellite assets graph LR

subgraph AI-Enabled Coalition

A[Satellite ISR] --> B[Maven AI Analysis]

B --> C[LLM Command Support]

C --> D[Precision Strike]

E[Iron Dome AI] --> F[Intercept Optimization]

end

subgraph Sanctions-Constrained Iran

G[Limited Missile Inventory] --> H[Mass Saturation Strategy]

I[Proxy Networks] --> J[Distributed Asymmetric Response]

K[Cyber APT Groups] --> L[Critical Infrastructure Attacks]

end

D -.AI Superiority Gap.- H

Iran’s Cyber Offset Strategy

The Ethics Inflection Point

The Anthropic Fallout and Employee Dissent

sequenceDiagram

participant AI as AI Targeting System

participant H as Human Commander

participant S as Strike Platform

AI->>H: Target list: 500 objects, confidence >90%

Note over H: 200 jets, <4hr window

H->>AI: Approve batch

AI->>S: Strike coordinates transmitted

S->>S: Execute

Note over H: Effective review time per target: <30 seconds

Geopolitical Implications: The AI Defense Divide

From Technology Gap to Strategic Doctrine

GDELT and the Information Warfare Dimension

Strategic Lessons for the Global AI Defense Architecture

What Active Conflict Reveals About AI System Maturity

Recommendations for Policymakers

Conclusion

Academic References