Anthropic-Pentagon Dispute: When AI Safety Clashes with National Security Contracts

Abstract

The escalating confrontation between Anthropic and the United States Department of Defense represents a watershed moment in the governance of frontier AI systems. Beginning with a $200 million classified-network contract signed in mid-2025, the dispute erupted in February 2026 when Secretary of Defense Pete Hegseth demanded unfettered access to Anthropic’s Claude model—including the removal of safeguards against autonomous weapons and mass domestic surveillance. Anthropic’s public refusal, followed by Trump administration blacklisting and supply-chain risk designation, has fractured Silicon Valley into competing coalitions and forced a market reckoning with the economics of AI safety principles under state pressure. This article examines the geopolitical, institutional, and economic dimensions of this confrontation, situating it within the broader trajectory of AI militarization and the emerging tension between safety-first AI governance frameworks and national security imperatives.

Background: From Safety Lab to Defense Contractor

Anthropic was founded in 2021 by former OpenAI staff who made AI safety research the company’s core mission. Its “responsible scaling policy” and “Constitutional AI” methods were designed to maintain behavioral guardrails even as capabilities expanded. This philosophical foundation was in structural tension with the company’s commercial ambitions from the outset.

By mid-2025, Anthropic had signed a $200 million contract with the Pentagon, making Claude the first major AI model deployed on the US government’s classified networks. The contract was celebrated internally as validation that safety-oriented AI could still serve national security purposes—on Anthropic’s terms.

That assumption proved fragile. Over the following months, the Department of Defense sought progressively broader access to Claude’s capabilities, ultimately demanding the removal of key safety precautions. On February 26, 2026, Anthropic publicly stated it “cannot in good conscience” comply with Pentagon demands to remove AI checks and grant unfettered military access.

timeline

title Anthropic-Pentagon Timeline

2021 : Anthropic founded (safety-first mission)

Mid-2025 : $200M Pentagon classified-network contract signed

Late 2025 : DoD demands removal of Claude safeguards

Feb 26 2026 : Anthropic refuses — "cannot in good conscience"

Feb 28 2026 : Hegseth threatens contract cancellation

Mar 1 2026 : Trump orders government to cut ties with Anthropic

Mar 1 2026 : Hegseth designates Anthropic "supply-chain risk to national security"

Mar 4 2026 : Amazon + NVIDIA-led IT Industry Council back Anthropic

Mar 5 2026 : Defense tech companies begin dropping Claude

The Supply-Chain Risk Designation: Legal and Economic Mechanics

Defense Secretary Pete Hegseth’s designation of Anthropic as a “supply-chain risk to national security” is not merely symbolic. Under existing procurement regulations, contractors working with the Department of Defense are prohibited from engaging with entities carrying this designation. The economic consequences are cascading:

Direct contract loss: The $200 million Pentagon contract is effectively voided.

Ripple effect across defense tech: Defense technology companies began dropping Claude almost immediately following the designation, as maintaining access to DoD contracts requires avoiding Anthropic’s products.

Investor alarm: Reuters reported that Anthropic investors are “racing to contain fallout,” with major backers engaging Anthropic CEO Dario Amodei directly on the escalating dispute, among them Amazon CEO Andy Jassy.

Valuation exposure: Anthropic’s most recent private valuation exceeds $60 billion. A prolonged government blacklist would materially shrink its addressable market in enterprise and government AI services.

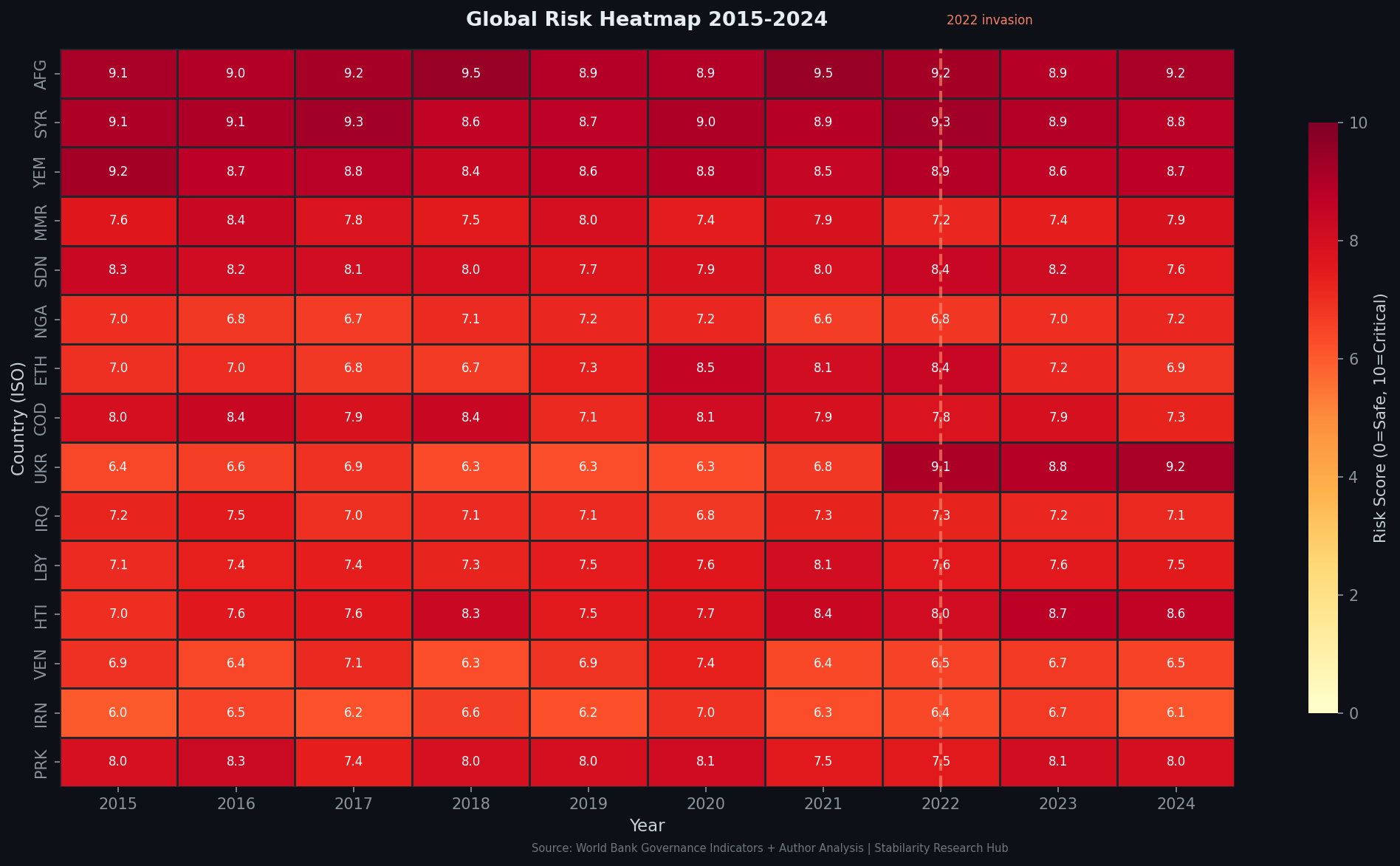

Figure 1: Geopolitical risk heatmap as of Q1 2026 — US domestic political risk elevated alongside defense-technology sector pressure (World Bank Governance Indicators, GDELT Project).

graph TD

A[Pentagon Supply-Chain Risk Designation] --> B[DoD Contract Voided ~$200M]

A --> C[Defense Tech Companies Drop Claude]

A --> D[Investor Pressure to De-escalate]

A --> E[Government Enterprise Pipeline Threatened]

B --> F[Direct Revenue Loss]

C --> F

D --> G[Potential AI Safety Policy Compromise]

E --> F

G --> H[Mission-Market Tension]

F --> I[Valuation Pressure: ~$60B at Risk]

H --> I
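The cascade in the diagram above can also be traced programmatically. The following is a minimal Python sketch, not part of the original analysis: the edges are transcribed from the diagram, while the shortened node names and the helper functions `build_graph` and `all_paths` are illustrative assumptions. It enumerates every route from the designation to valuation pressure.

```python
from collections import defaultdict

# Edges transcribed from the cascade diagram; node names are shortened
# labels chosen for this sketch, not terms used by the source.
EDGES = [
    ("designation", "contract_voided"),
    ("designation", "defense_tech_drops_claude"),
    ("designation", "investor_pressure"),
    ("designation", "gov_pipeline_threatened"),
    ("contract_voided", "revenue_loss"),
    ("defense_tech_drops_claude", "revenue_loss"),
    ("investor_pressure", "safety_policy_compromise"),
    ("gov_pipeline_threatened", "revenue_loss"),
    ("safety_policy_compromise", "mission_market_tension"),
    ("revenue_loss", "valuation_pressure"),
    ("mission_market_tension", "valuation_pressure"),
]


def build_graph(edges):
    """Build an adjacency list from (source, target) pairs."""
    graph = defaultdict(list)
    for src, dst in edges:
        graph[src].append(dst)
    return graph


def all_paths(graph, start, goal, prefix=()):
    """Yield every directed path from start to goal (assumes no cycles)."""
    path = prefix + (start,)
    if start == goal:
        yield path
        return
    for nxt in graph.get(start, []):
        yield from all_paths(graph, nxt, goal, path)


graph = build_graph(EDGES)
paths = list(all_paths(graph, "designation", "valuation_pressure"))
for p in paths:
    print(" -> ".join(p))
```

Run as written, the sketch prints four routes. Three pass through direct revenue loss and one passes through investor pressure and mission-market tension, which is the structural point of the diagram: the designation pressures valuation through both a revenue channel and a governance channel.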

The OpenAI Contrast: Pragmatism vs. Principle

OpenAI’s concurrent experience makes the Anthropic dispute more politically complex. As MIT Technology Review reported, OpenAI reached a compromise with the Pentagon that preserves protections against autonomous weapons and mass domestic surveillance in the contract’s legal language while allowing military use cases to proceed.

OpenAI published a blog post characterizing its agreement as protective of key safeguards, and CEO Sam Altman stated the company did not simply accept terms that Anthropic refused. The strategic implication is significant: OpenAI appears to have obtained both the defense contract and a degree of moral cover, while Anthropic’s principled refusal produced neither the contract nor a public safety win.

This divergence reveals a fundamental strategic fork in frontier AI governance:

| Dimension | Anthropic Approach | OpenAI Approach |
|---|---|---|
| Safety stance | Non-negotiable hard limits | Negotiated legal protections |
| Outcome | Blacklisted, contract lost | Contract retained with caveats |
| Investor reaction | Alarm, pressure to de-escalate | Cautious approval |
| Public positioning | Safety-first martyrdom | Pragmatic stewardship |
| Long-term governance precedent | Refuses weaponization | Normalizes military AI with guardrails |

Neither path is without risk. Anthropic’s approach sets a principled precedent but at severe commercial cost. OpenAI’s approach maintains market access but raises legitimate concerns about whether negotiated legal language is adequate protection against capability creep in classified military environments.

Coalition Formation: Big Tech vs. State Power

The dispute has catalyzed an industry coalition response. The IT Industry Council, whose members include Amazon, Nvidia, and Apple, formally wrote to Secretary Hegseth expressing “concern” over the supply-chain risk designation. Amazon and Nvidia, as major Anthropic investors (Amazon has invested over $4 billion), have the most direct financial exposure. But the coalition’s concerns extend beyond Anthropic specifically.

The designation creates a chilling precedent: any AI company maintaining safety guardrails that conflict with government demands becomes vulnerable to state-coercive commercial exclusion. This is not a regulatory posture that can be cabined to Anthropic. It establishes a template applicable to any frontier AI developer.

The Japan Times noted that industry concern centers on the broader disruption to “government partnerships.” That language suggests the coalition is defending a model of AI-government collaboration that stays commercially cooperative while leaving each company with governance authority over its own models.

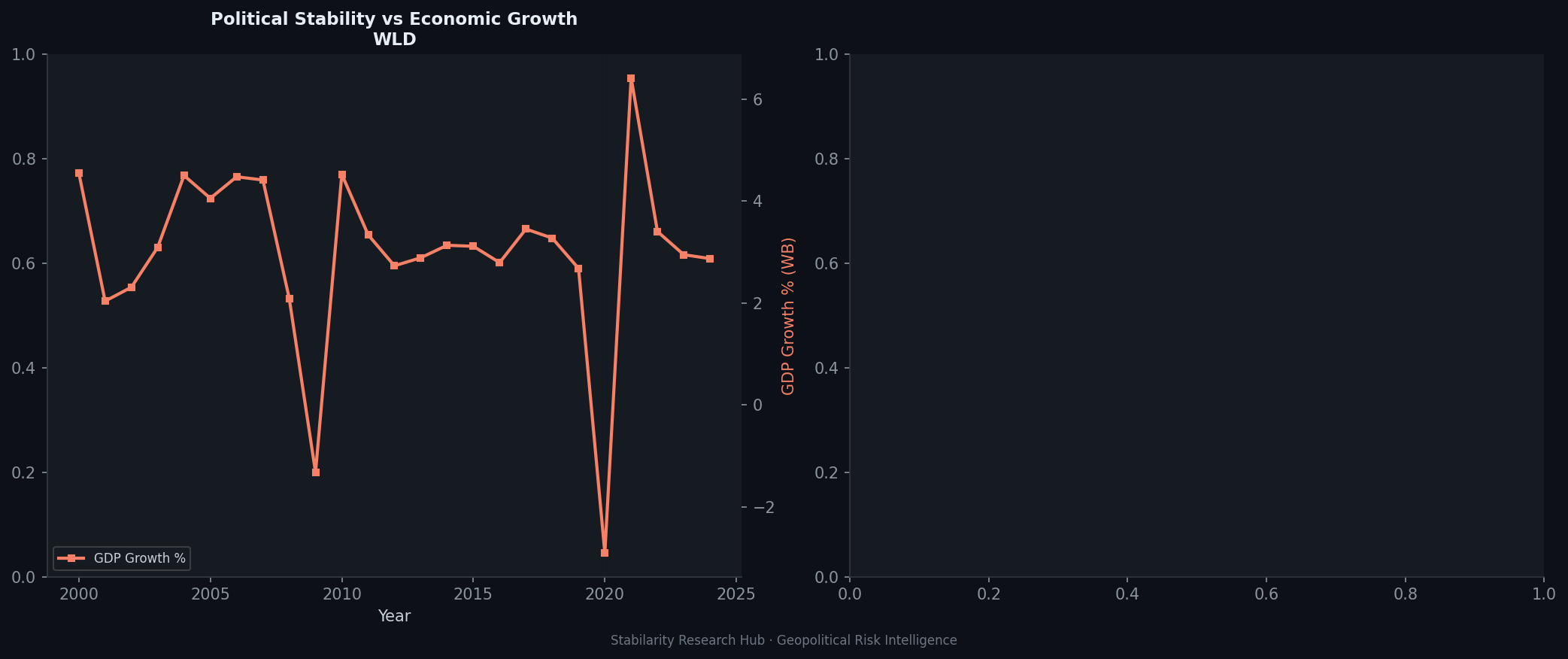

Figure 2: Political vs. economic risk divergence in the US tech sector — state intervention in AI governance creating new premium between political and market risk metrics (World Bank Governance Indicators, GDELT Project).

Geopolitical Architecture: AI Governance as Power Contestation

The Anthropic dispute cannot be understood solely as a corporate negotiation. It sits within a broader reconfiguration of AI governance authority that has been accelerating since 2024.

The FCC intervention: CNBC reported that the FCC chair publicly stated Anthropic “made a mistake” and should “correct course”—an extraordinary intervention by a communications regulator in an AI defense procurement dispute, signaling executive branch alignment against Anthropic’s position.

The “decisive moment” framing: The New York Times characterized the standoff as a “decisive moment for how AI will be used in war,” situating it within the historic question of whether civilian technology companies can maintain governance authority over their systems when deployed in national security contexts.

The precedent problem: Historical analogies from nuclear, satellite, and cryptography technologies suggest that once a capability is recognized as strategically essential, state actors systematically erode private governance authority over it. AI safety guardrails on frontier models may be approaching this threshold.

graph LR

subgraph State Power

A[Pentagon/DoD] --> B[Supply-Chain Risk Designation]

C[Trump White House] --> D[Executive Blacklist Order]

E[FCC Chair] --> F[Public Pressure Campaign]

end

subgraph Market Response

G[Amazon + NVIDIA] --> H[IT Industry Council Letter]

I[Anthropic Investors] --> J[De-escalation Pressure]

K[Defense Tech Firms] --> L[Drop Claude Products]

end

subgraph AI Governance Outcome

M[Safety Guardrails Negotiability]

N[State AI Procurement Norms]

O[Industry Self-Governance Limits]

end

B --> M

H --> M

D --> N

J --> O

L --> N

Economic Dimensions: The Cost of Principled Governance

From a purely economic standpoint, Anthropic’s refusal to comply with Pentagon demands is a bet that principled positioning will create long-term enterprise value exceeding the near-term losses in contracts and market access.

Several economic hypotheses support this:

Regulatory arbitrage premium: In jurisdictions with strong AI safety requirements, particularly the EU under the AI Act, companies that demonstrate genuine safety commitment may command procurement premiums. Anthropic’s public stand may help it win EU government contracts sooner.

Talent retention signal: Safety-oriented AI researchers are a scarce, highly mobile workforce. Anthropic’s refusal signals organizational alignment with researcher values, potentially reducing talent attrition from a company already competing against better-capitalized rivals.

Enterprise trust differentiation: As AI systems are increasingly deployed in sensitive enterprise contexts—healthcare, legal, financial—the credibility of safety commitments becomes a commercial differentiator. OpenAI’s “compromise” may prove harder to distinguish from full capitulation in enterprise risk assessments.

Investor uncertainty premium: The investor push to de-escalate is economically rational in the short term but may be strategically counterproductive. A negotiated settlement that removes safety guardrails under government pressure would fundamentally compromise Anthropic’s differentiated market positioning.
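The bet described at the start of this section can be stated as a simple inequality. This is an illustrative formalization only: none of the symbols below appear in the source reporting, and no values are estimated here. Let $\Delta V_t$ be the additional enterprise value in year $t$ attributable to the principled stance (procurement premiums, retained talent, enterprise trust), $r$ a discount rate, $T$ the planning horizon, $L_c$ the value of the lost Pentagon contract, and $L_m$ the value of lost defense-adjacent market access.

$$
\sum_{t=1}^{T} \frac{\Delta V_t}{(1+r)^{t}} \;>\; L_c + L_m
$$

Read this way, the hypotheses above are arguments about the size and timing of $\Delta V_t$, while the right-hand side is incurred immediately. That asymmetry is one way to understand why investors focused on the near term push for de-escalation.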

The net economic calculation is uncertain. What is clear is that the dispute has exposed a structural fragility in the business model of safety-first AI development: commercial scale requires government and enterprise clients; government and enterprise clients increasingly include national security use cases; national security clients systematically resist external governance constraints on deployed capabilities.

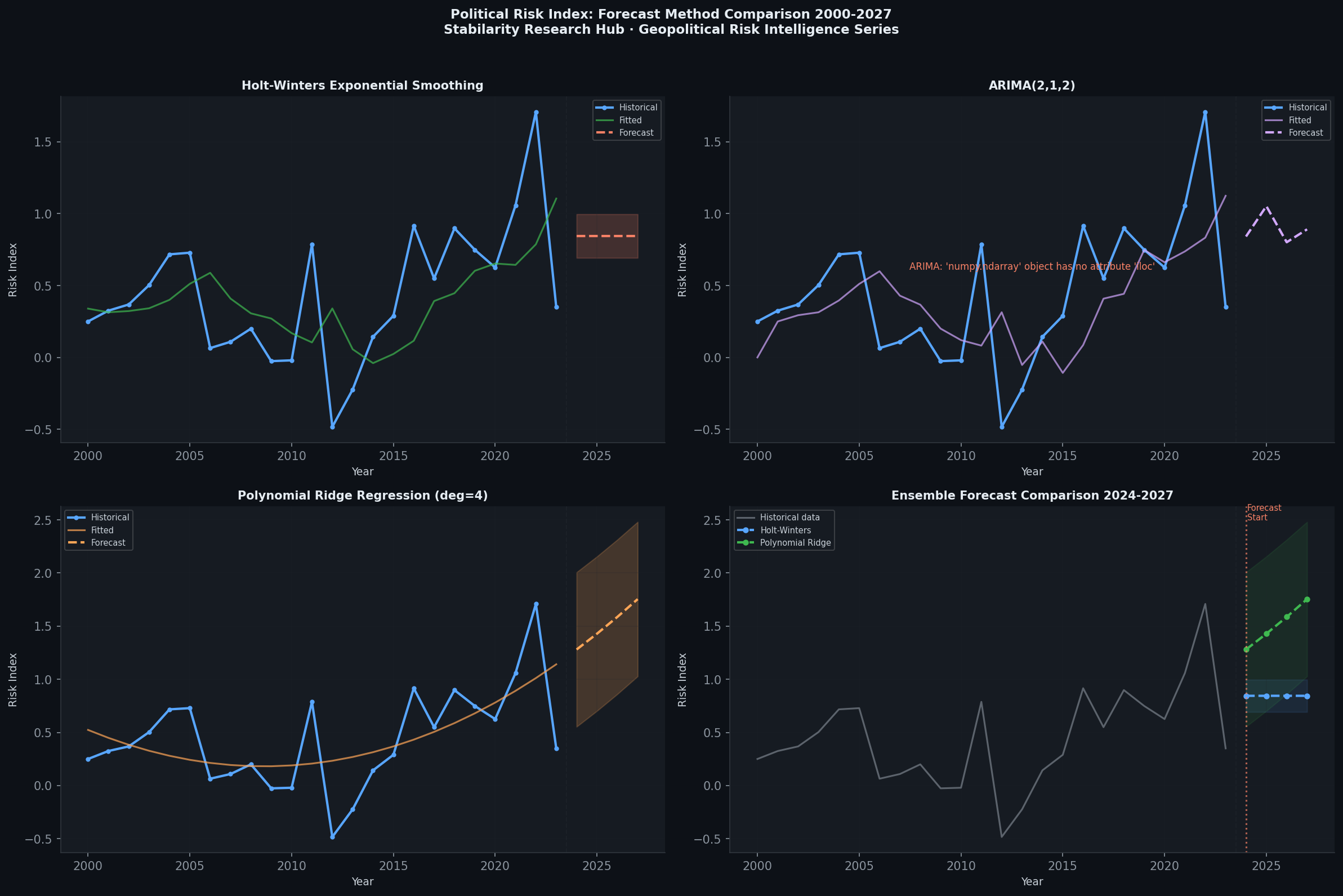

Figure 3: Risk trajectory forecasts — AI governance risk diverging from capability investment trends as state-AI tensions escalate through 2026 (GDELT Project analysis).

Implications for Global AI Governance Architecture

The Anthropic-Pentagon dispute arrives as the first UN Global AI Governance Dialogue is underway, part of what the Atlantic Council terms the “first truly global AI governance phase.” The timing is awkward: multilateral frameworks that rest on voluntary company commitments to safety standards look fragile once a state has shown it can simply blacklist a company for maintaining those standards.

Several governance implications are systemic:

Erosion of voluntary commitments: If companies maintaining safety guardrails face state-coercive commercial exclusion, the rational response is to negotiate away those guardrails. This creates a race-to-the-bottom dynamic in AI safety standards for defense applications.

Jurisdictional arbitrage: AI companies may increasingly locate their frontier development activities in jurisdictions offering the most favorable combination of talent, capital, and governance environments. If US state power continues to coerce safety concessions, this may paradoxically accelerate development of alternative governance frameworks in the EU, UK, or allied partners.

The “decisive moment” question: Whether the Anthropic dispute becomes a definitive precedent depends substantially on how Anthropic itself ultimately resolves its position. A negotiated settlement that concedes the guardrails, most likely under investor pressure, would establish that even the most principled AI safety organization will subordinate governance commitments to commercial necessity under sufficient state pressure.

Conclusion: Governance Under Coercion

The Anthropic-Pentagon dispute is simultaneously a corporate contract negotiation, a governance philosophy contest, and a geopolitical signal about the relationship between frontier AI development and state power. The designation of a safety-focused AI company as a “supply-chain risk to national security” for maintaining safety guardrails represents a novel form of state coercion—weaponizing procurement exclusion to override corporate AI governance.

The Big Tech coalition response, voiced through the IT Industry Council and members such as Amazon, Nvidia, and Apple, suggests the industry recognizes that the precedent threatens a broader model of commercially cooperative AI-government partnership. Whether that coalition can contain the precedent, or whether investor pressure produces a settlement that normalizes Pentagon demands, will determine the near-term trajectory of AI governance architecture in national security contexts.

The deeper question this dispute forces is whether genuinely safety-constrained AI development is compatible with the commercial scale required to sustain frontier research programs. Anthropic’s $200 million contract was supposed to prove it was. The dispute suggests otherwise—at least under current US political conditions.

Data Sources: World Bank Governance Indicators, GDELT Project, Reuters, The Guardian, MIT Technology Review, CNBC, Wired, Axios, The New York Times, ABC News.

Series: Geopolitical Risk Intelligence | Author: Ivchenko, O. | Published: March 2026