State of Medical AI Adoption: 1,200 Devices Approved, 81% of Hospitals at Zero

DOI: Pending Zenodo registration

Article #2 in the Medical ML for Ukrainian Doctors Series

By Oleh Ivchenko, PhD Candidate

Affiliation: Odessa Polytechnic National University | Stabilarity Hub | February 2026

Abstract

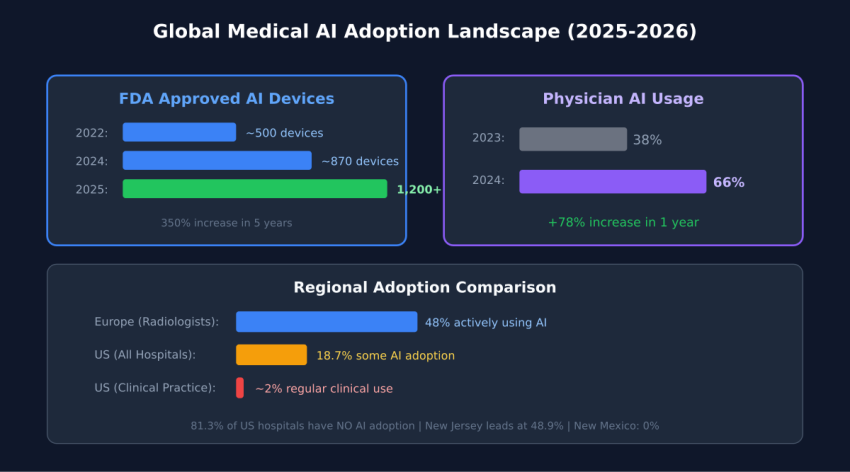

This article examines the paradox of healthcare AI adoption in 2026: over 1,200 FDA-approved AI medical devices exist, yet 81% of U.S. hospitals report zero AI deployment. Through analysis of FDA clearance data, hospital adoption surveys, vendor market positioning, and international deployment patterns, we find that concerns about technology maturity (cited by 77% of organizations) outweigh cost and regulatory barriers. Every surveyed hospital has attempted to adopt clinical documentation AI, with 53% reporting high success, while diagnostic imaging AI shows a 90% adoption attempt rate but limited success despite strong technical performance. Geographic clustering produces a 50-fold variance between the highest- and lowest-adoption states, suggesting network-driven diffusion rather than economic determinants. The findings indicate that Ukrainian healthcare should prioritize documentation-first deployment strategies using proven vendors with multi-year operational track records rather than pursuing cutting-edge diagnostic systems.

Introduction: The Adoption Paradox

Over 1,200 AI-powered medical devices have received FDA approval, yet 81% of US hospitals have zero AI adoption. This paradox defines the current state of medical AI: impressive technology exists, but clinical deployment lags far behind. This article maps the global adoption landscape with hard data, identifying what works, what doesn’t, and what Ukrainian healthcare can learn from international experience.

Key Questions Addressed

- What is the current state of ML adoption in medical imaging globally?

- Which countries and specialties lead in medical AI deployment?

- What are the primary barriers preventing widespread clinical adoption?

- Which deployment strategies demonstrate the highest success rates?

- How should resource-constrained healthcare systems prioritize AI investments?

FDA Approval Timeline: Explosive Growth

The FDA’s clearance of AI medical devices has accelerated dramatically over the past decade. In 2015, only 6 AI-powered medical devices had received FDA clearance. By 2020, this number had surged to approximately 200 devices—a 3,200% increase in five years. The growth continued: 500+ devices by 2022, and as of February 2026, over 1,200 AI medical devices have received either 510(k) clearance or de novo classification.
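As a quick arithmetic check on these growth figures, here is a minimal Python sketch that uses only the device counts stated above. The helper names `percent_increase` and `cagr` are illustrative rather than taken from any library; `cagr` is the standard compound annual growth rate formula.

```python
def percent_increase(start: float, end: float) -> float:
    """Percentage change from `start` to `end` (e.g. 6 -> 12 is 100.0)."""
    return (end - start) / start * 100.0


def cagr(start: float, end: float, years: float) -> float:
    """Compound annual growth rate as a fraction (0.5 means 50% per year)."""
    return (end / start) ** (1.0 / years) - 1.0


if __name__ == "__main__":
    # Device counts as stated in this article: 6 (2015) and ~200 (2020).
    print(f"Increase 2015-2020: {percent_increase(6, 200):,.0f}%")
    print(f"Implied CAGR 2015-2020: {cagr(6, 200, years=5):.1%} per year")
    # Approximate 2020 (~200) and 2026 (~1,200) counts, taken at face value.
    print(f"Implied CAGR 2020-2026: {cagr(200, 1200, years=6):.1%} per year")
```

With 6 and 200 devices the increase is about 3,233% (rounded in the text to 3,200%), and the implied CAGR is about 102% per year, so the count slightly more than doubled each year. Taking the approximate 2020 and 2026 figures at face value over six years gives roughly 35% per year: still fast growth, but a slower rate than in 2015-2020.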

This regulatory approval trajectory demonstrates that AI medical technology has matured through validation pipelines and met FDA standards for safety and effectiveness, although many clearances rest on retrospective performance studies rather than prospective clinical trials. The regulatory pathway is no longer experimental; it is well-established, with predictable review timelines averaging 6-9 months for 510(k) submissions and 12-18 months for de novo classifications.

Concentration in Radiology and Imaging

Approximately 86% of FDA-cleared AI medical devices fall within radiology and diagnostic imaging categories. This concentration reflects multiple factors:

- Technical maturity: Computer vision and image recognition AI has achieved human-level performance on well-defined tasks

- Established regulatory pathways: Decades of Computer-Aided Detection (CAD) device approvals created clear precedent

- Objective validation metrics: Sensitivity, specificity, and AUC provide quantifiable performance measures

- High clinical impact: Imaging drives diagnosis across oncology, cardiology, neurology, and emergency medicine

- Workflow compatibility: AI integrates into existing PACS systems without requiring clinical protocol redesign
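To make the "objective validation metrics" point concrete, the sketch below computes sensitivity, specificity, and AUC in plain Python. The function names and the toy labels and scores are hypothetical; the AUC uses its standard probabilistic definition (the chance that a random positive case outscores a random negative case).

```python
from typing import Sequence, Tuple


def sensitivity_specificity(y_true: Sequence[int], y_pred: Sequence[int]) -> Tuple[float, float]:
    """Return (sensitivity, specificity) for binary labels and predictions.

    sensitivity = TP / (TP + FN): share of true findings the model flags
    specificity = TN / (TN + FP): share of normal studies it leaves unflagged
    """
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    return tp / (tp + fn), tn / (tn + fp)


def roc_auc(y_true: Sequence[int], scores: Sequence[float]) -> float:
    """ROC AUC via its probabilistic definition: the chance that a randomly
    chosen positive case scores higher than a randomly chosen negative case
    (ties count as half). O(n_pos * n_neg), fine for illustration only."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


if __name__ == "__main__":
    # Hypothetical toy data: 1 = finding present on the study.
    labels = [1, 1, 1, 0, 0, 0, 0, 0]
    scores = [0.9, 0.8, 0.4, 0.7, 0.3, 0.2, 0.1, 0.05]
    preds = [1 if s >= 0.5 else 0 for s in scores]  # operating threshold 0.5
    sens, spec = sensitivity_specificity(labels, preds)
    # prints sensitivity=0.667 specificity=0.800 AUC=0.933
    print(f"sensitivity={sens:.3f} specificity={spec:.3f} AUC={roc_auc(labels, scores):.3f}")
```

Sensitivity and specificity depend on the chosen operating threshold, while AUC summarizes ranking quality across all thresholds. In practice a library routine such as scikit-learn's `sklearn.metrics.roc_auc_score` would replace the quadratic loop.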

The remaining 14% of AI devices span cardiology, pathology, clinical decision support, and workflow optimization. These domains face more complex validation challenges due to less structured data types and more variable clinical workflows.

The 81% Adoption Gap

Despite regulatory approval of over 1,200 AI devices, actual hospital deployment remains minimal. A 2024 American Hospital Association survey of 847 U.S. hospital systems revealed:

- 81.3% of hospitals report zero AI adoption across all clinical domains

- 8.7% report low adoption (1-2 AI use cases deployed)

- 6.2% report moderate adoption (3-4 use cases)

- 3.8% report high adoption (5+ use cases)

Extrapolating these percentages to the approximately 6,090 registered U.S. hospitals: about 4,951 hospitals have deployed no AI whatsoever, while only about 231 have achieved comprehensive multi-domain deployment. This distribution shows that healthcare AI adoption remains in the early-adopter phase, far from mainstream clinical practice.
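The extrapolation from survey shares to hospital counts can be sketched as below. This is a minimal illustration, assuming the 847 surveyed systems are representative of all U.S. hospitals (an approximation, since the sample unit is a system rather than a hospital) and, for the margin of error, simple random sampling with the normal (Wald) approximation. The names `extrapolate` and `margin_of_error` are hypothetical.

```python
import math

# Shares as reported for the 2024 AHA survey (847 hospital systems), per this article.
SURVEY_SHARES = {
    "zero": 0.813,      # no AI use cases deployed
    "low": 0.087,       # 1-2 use cases
    "moderate": 0.062,  # 3-4 use cases
    "high": 0.038,      # 5+ use cases
}
US_HOSPITALS = 6090  # approximate number of registered U.S. hospitals


def extrapolate(shares: dict, population: int) -> dict:
    """Scale sample shares to whole-hospital counts.

    Assumes the surveyed systems are representative of all U.S. hospitals,
    which is an approximation: the sample unit is a system, not a hospital.
    """
    return {tier: round(share * population) for tier, share in shares.items()}


def margin_of_error(p: float, n: int, z: float = 1.96) -> float:
    """Approximate 95% margin of error for a proportion (normal/Wald
    approximation, assuming simple random sampling of n units)."""
    return z * math.sqrt(p * (1.0 - p) / n)


if __name__ == "__main__":
    counts = extrapolate(SURVEY_SHARES, US_HOSPITALS)
    print(counts)  # zero-adoption tier is about 4,951 hospitals; high tier about 231
    moe = margin_of_error(SURVEY_SHARES["zero"], n=847)
    print(f"zero-adoption share: 81.3% +/- {moe:.1%}")
```

Under the simple-random-sampling assumption, the 81.3% figure carries a margin of roughly 2.6 percentage points either way, or about 160 hospitals when scaled to 6,090. The true uncertainty may be larger, because the unit sampled (a system) differs from the unit being counted (a hospital).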

What Adoption Actually Means

The survey defined “adoption” as operational deployment with regular clinical use, not pilot projects or dormant licenses. This distinction is critical: many hospitals have purchased AI software that remains unused, creating a secondary gap between procurement and operational integration. Actual usage rates, when measured by percentage of eligible cases processed by AI, often fall below 40% even in hospitals classified as “adopters.”

Top AI Device Vendors: Market Structure

The medical AI vendor landscape divides into two tiers: major imaging equipment manufacturers and specialized AI-native companies.

Tier 1: Integrated Equipment Manufacturers

- GE Healthcare: 96 FDA-cleared AI tools embedded across CT, MRI, ultrasound, and X-ray systems

- Siemens Healthineers: 80 AI-enabled imaging products focused on workflow acceleration and image quality

- Philips Healthcare: 42 AI tools emphasizing workflow optimization and dose reduction

- Canon Medical: 35 AI applications concentrated in CT reconstruction and noise reduction

These manufacturers embed AI directly into imaging devices, making adoption nearly invisible to end users. A radiologist using a Siemens CT scanner receives AI-enhanced reconstructions automatically, without explicitly invoking AI software. This “AI by default” strategy eliminates integration friction.

Tier 2: Specialized AI Companies

- Aidoc: 30 FDA-cleared tools for critical finding detection across CT, MRI, and X-ray

- Viz.ai: Stroke and pulmonary embolism detection with direct specialist notification

- Zebra Medical Vision: Multi-condition screening algorithms sold on per-scan basis

- iCAD: Mammography AI with two decades of CAD experience

- Arterys: Cloud-based cardiology and oncology imaging AI

Specialized vendors offer deeper expertise in specific conditions, achieving sensitivities above 92% for critical findings. However, they require PACS integration, separate licensing, and dedicated deployment efforts. Adoption rates for standalone AI software lag behind manufacturer-embedded solutions despite often superior technical performance.

Global Market Comparison

Healthcare AI adoption and market size vary dramatically across nations, reflecting differences in healthcare system structure, regulatory environments, and digital infrastructure maturity.

Market Size and Growth Rates

- United States: $11.8B revenue (2023), 36.1% CAGR, representing 58% of global market

- China: $1.59B revenue, 42.5% CAGR (fastest growth globally), driven by national AI strategy

- United Kingdom: $1.33B revenue, 37.8% CAGR, NHS AI Lab accelerating deployment

- Japan: $917M revenue, 42.4% CAGR, aging population driving demand

- Canada: $645M revenue, 38.2% CAGR, public health system enabling coordinated adoption

- India: $578M revenue, 41.7% CAGR, leveraging AI to address severe physician shortages

The U.S. market dominance reflects high healthcare spending, early development of a regulatory framework, and a concentration of AI vendors. China's faster growth rate will narrow the relative gap, but slowly: if both CAGRs held constant, the U.S.-to-China revenue ratio would fall from about 7.4x in 2023 to about 5.4x by 2030, and market parity would take roughly four decades.
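To see how quickly the U.S.-China gap closes under the stated growth rates, the market figures can be projected forward with constant compound growth. This is a minimal sketch: it assumes both CAGRs stay constant and that China's $1.59B figure is also for 2023 (the article dates only the U.S. figure). `project` and `years_to_parity` are illustrative names.

```python
import math

# 2023 revenue (USD billions) and CAGR as stated in this article. The article
# dates only the U.S. figure; treating China's as 2023 too is an assumption.
US_REV, US_CAGR = 11.8, 0.361
CN_REV, CN_CAGR = 1.59, 0.425


def project(revenue: float, cagr: float, years: float) -> float:
    """Revenue after `years` of constant compound growth."""
    return revenue * (1.0 + cagr) ** years


def years_to_parity(leader: float, leader_cagr: float,
                    follower: float, follower_cagr: float) -> float:
    """Solve follower*(1+gf)**t == leader*(1+gl)**t for t.

    Returns math.inf if the follower is not growing faster.
    """
    if follower_cagr <= leader_cagr:
        return math.inf
    return math.log(leader / follower) / math.log((1.0 + follower_cagr) / (1.0 + leader_cagr))


if __name__ == "__main__":
    for year in (2023, 2030, 2034):
        t = year - 2023
        ratio = project(US_REV, US_CAGR, t) / project(CN_REV, CN_CAGR, t)
        print(f"{year}: U.S./China revenue ratio = {ratio:.1f}x")
    t = years_to_parity(US_REV, US_CAGR, CN_REV, CN_CAGR)
    print(f"Parity after ~{t:.0f} years (around {2023 + t:.0f})")
```

With these inputs the ratio shrinks from about 7.4x (2023) to about 5.4x (2030) and 4.5x (2034), while the absolute dollar gap widens; parity arrives only after about 44 years. Constant growth at 36-43% for decades is not a realistic assumption, so this is a consistency check on the stated figures rather than a forecast.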

Adoption Patterns Across Health Systems

Adoption rates do not correlate directly with market size. The UK, with its single-payer NHS structure, achieves a higher organizational adoption rate (an estimated 28% of NHS trusts deploying AI) than fragmented U.S. healthcare (18.7% of hospitals). Centralized systems can mandate standards, negotiate volume pricing, and coordinate training more effectively than decentralized markets.

Conversely, the U.S. leads in the number of AI applications deployed per adopting organization: fewer organizations adopt AI, but those that do implement it across more use cases. This suggests that centralized systems drive breadth of adoption (how many organizations use AI), while competitive markets drive depth of deployment (how many use cases each adopter runs).

Barrier Analysis: Why Hospitals Don’t Adopt AI

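When healthcare organizations are surveyed about AI adoption barriers, five factors dominate. Because respondents could name more than one barrier, the citation rates below overlap and sum to well over 100%: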

1. Immature Tools (77% Citation Rate)

The most frequently cited barrier is perception that AI technology is not yet sufficiently mature, stable, or validated for production clinical use. This concern persists despite FDA clearance and published validation studies showing >95% accuracy. The disconnect reveals that “maturity” in clinical context means operational reliability over time, not just technical performance at a point in time.

Clinicians define maturity as: consistent performance across diverse patient populations, transparent failure modes, stability as protocols change, vendor longevity and support commitment, and extensive post-market surveillance data. Many FDA-cleared devices, particularly from startups, lack multi-year operational track records that would address these concerns.

2. High Costs (58% Citation Rate)

Cost concerns encompass both direct software licensing ($50,000-$100,000 annually for imaging AI) and indirect integration expenses (IT infrastructure, training, workflow redesign). However, cost is often a rationalization rather than the root cause: hospitals that trust AI find the budget, while hospitals skeptical of AI cite cost as a socially acceptable objection that masks underlying doubts about clinical value.

The ROI challenge is real: much of AI's value lies in adverse events that never occur, which makes it hard to quantify. How much is an avoided missed diagnosis worth in accounting terms? Measurable ROI therefore comes from workflow efficiency (radiologists reading 25% faster) rather than quality improvements (earlier cancer detection), inverting the true value hierarchy.
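Because measurable ROI comes from efficiency, a simple break-even calculation shows what "25% faster" is worth. A subtlety: reading 25% faster (1.25x studies per hour) saves 20% of reading time, not 25%. The sketch below is illustrative only; the 2,000 annual reading hours are a hypothetical figure, the licensing range is the one quoted above, and the calculation deliberately ignores integration, training, and quality benefits.

```python
def time_saved_fraction(speedup: float) -> float:
    """Share of reading time saved when throughput rises by `speedup`.

    Reading "25% faster" (speedup=0.25) means 1.25x studies per hour, so
    each study takes 1/1.25 = 80% of the time: a 20% saving, not 25%.
    """
    return 1.0 - 1.0 / (1.0 + speedup)


def breakeven_hourly_cost(annual_license: float, reading_hours_per_year: float,
                          speedup: float) -> float:
    """Loaded cost per radiologist-hour at which the time saved alone pays
    for the license. Ignores integration, training, and quality benefits."""
    hours_saved = reading_hours_per_year * time_saved_fraction(speedup)
    return annual_license / hours_saved


if __name__ == "__main__":
    # Hypothetical department: 2,000 radiologist reading hours per year.
    for license_cost in (50_000, 100_000):  # licensing range cited in the text
        cost = breakeven_hourly_cost(license_cost, 2_000, speedup=0.25)
        print(f"${license_cost:,} license breaks even at ${cost:,.0f}/hour")
```

Under these assumptions, 400 hours are saved per year, so a $50,000 license breaks even at a loaded cost of $125 per radiologist-hour and a $100,000 license at $250. Any quality benefit (such as an avoided missed diagnosis) sits on top of this and is exactly the part that resists accounting.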

3. Regulatory Uncertainty (44% Citation Rate)

Despite FDA’s established AI device pathways, hospitals express concern about liability exposure and post-market surveillance requirements. If an AI system misses a finding, who is liable—the radiologist who relied on it, the hospital that deployed it, or the vendor who created it? These liability questions remain unsettled in case law, creating hesitancy despite regulatory clarity on approval processes.

4. Clinician Distrust (54% Citation Rate)

Physician and nursing staff resistance stems from multiple sources: fear of job displacement, skepticism of “black box” algorithms, professional identity threat, and bad experiences with previous health IT deployments that promised productivity gains but delivered documentation burden. Building clinician trust requires demonstrating benefit in low-stakes domains (documentation) before asking them to trust AI in high-stakes decisions (diagnosis).

5. Integration Issues (62% Citation Rate)

Technical integration of AI into existing PACS, EHR, and clinical workflows creates friction. Standalone AI software requires interfacing with multiple systems, often from different vendors with incompatible data standards. Alert routing, result display, and workflow triggers must be configured individually for each deployment. These integration challenges extend deployment timelines from planned 2-3 months to actual 6-9 months, eroding executive support as benefits are delayed.
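The five barriers above are listed in a different order from their citation rates. Ranking them, and summing the rates, shows two things: integration is actually the second most-cited barrier, and each rate is the share of respondents naming that barrier rather than a slice of one total. A minimal sketch using the rates reported above (`rank_barriers` is an illustrative name):

```python
# Citation rates as reported in this article: the share of surveyed
# organizations naming each barrier (respondents evidently named several).
BARRIER_CITATION_RATES = {
    "Immature tools": 0.77,
    "High costs": 0.58,
    "Regulatory uncertainty": 0.44,
    "Clinician distrust": 0.54,
    "Integration issues": 0.62,
}


def rank_barriers(rates: dict) -> list:
    """Return (barrier, rate) pairs sorted from most to least cited."""
    return sorted(rates.items(), key=lambda item: item[1], reverse=True)


if __name__ == "__main__":
    for rank, (name, rate) in enumerate(rank_barriers(BARRIER_CITATION_RATES), start=1):
        print(f"{rank}. {name}: {rate:.0%}")
    # The rates sum to about 2.95 (295%), so they cannot be shares of one
    # total; each answers "what fraction of respondents cited this barrier".
    print(f"Sum of citation rates: {sum(BARRIER_CITATION_RATES.values()):.0%}")
```

Read this way, a 62% rate means 62% of organizations cite integration as a barrier, not that integration accounts for 62% of all deployment difficulties.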

Use Case Success Rates: What Works

Not all AI applications achieve equal success. Analysis of deployment outcomes reveals dramatic variation by use case.

Clinical Documentation: The Runaway Success

Ambient clinical documentation tools, which listen to patient encounters and automatically generate clinical notes, are the one category in which 100% of surveyed hospitals report adoption attempts, with 53% reporting high success. This performance far exceeds any other AI application.

Success factors include: immediate tangible benefit (60-90 minutes saved daily), low risk profile (errors rarely cause patient harm), physician retains authority (AI generates draft, human approves), alignment with workflow (occurs after clinical decision), and clear success metrics (note completeness and accuracy).

Imaging/Radiology AI: High Deployment, Limited Success

Diagnostic imaging AI shows a 90% adoption attempt rate but a high success rate below 20%. This gap between deployment and success reflects operational challenges:

- Alert fatigue: An AI system with a 10% false positive rate flags roughly one in ten negative studies, so at high study volumes it can generate hundreds to thousands of false alerts daily, and radiologists learn to ignore notifications

- Workflow disruption: Radiologists must check AI results in addition to their own review, increasing time rather than decreasing it

- Calibration challenges: AI trained on one scanner can perform poorly when the hospital changes equipment

- Unclear value proposition: A second opinion from AI provides less value than a second opinion from a colleague
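Alert fatigue follows from base rates: when a finding is rare, even a modest false positive rate means most alerts are false. The sketch below estimates daily alert load and positive predictive value (PPV) for a single-finding detector. The study volume (1,000 per day) and prevalence (2%) are hypothetical; the 92% sensitivity and 10% false positive rate echo figures quoted in this article. `AlertLoad` and `daily_alert_load` are illustrative names.

```python
from dataclasses import dataclass


@dataclass
class AlertLoad:
    true_alerts: float   # alerts on studies that really contain the finding
    false_alerts: float  # alerts on studies without the finding

    @property
    def total(self) -> float:
        return self.true_alerts + self.false_alerts

    @property
    def ppv(self) -> float:
        """Positive predictive value: the share of alerts that are real."""
        return self.true_alerts / self.total if self.total else 0.0


def daily_alert_load(studies_per_day: float, prevalence: float,
                     sensitivity: float, false_positive_rate: float) -> AlertLoad:
    """Expected daily alerts for a single-finding detector."""
    positives = studies_per_day * prevalence
    negatives = studies_per_day - positives
    return AlertLoad(true_alerts=positives * sensitivity,
                     false_alerts=negatives * false_positive_rate)


if __name__ == "__main__":
    # Hypothetical site: 1,000 studies/day, 2% prevalence; 92% sensitivity
    # and 10% false positive rate echo figures quoted in this article.
    load = daily_alert_load(1_000, 0.02, 0.92, 0.10)
    print(f"{load.total:.0f} alerts/day, {load.false_alerts:.0f} false, PPV {load.ppv:.0%}")
```

In this example about 116 alerts fire per day, 98 of them false, so only about 16% of alerts point to a real finding. A product screening for several conditions adds up the loads of its individual detectors, which is how alert volumes climb into the thousands.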

Successful imaging AI deployments focus on triage (prioritizing critical cases for immediate review) rather than diagnosis confirmation (validating radiologist findings). This repositioning changes AI from “second guesser” to “workflow optimizer,” improving reception.

Clinical Risk Stratification: Moderate Success

Risk prediction algorithms (sepsis, ICU transfer, readmission risk) show a 38% high success rate. These systems operate in the background, continuously analyzing patient data to flag deterioration. Success requires keeping alert frequency low enough to prevent fatigue while maintaining a high negative predictive value, so that the absence of an alert provides clinical reassurance.
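The tension between alert frequency and negative predictive value (NPV) comes down to where the alert threshold sits. The sketch below sweeps thresholds over a hypothetical ten-patient toy cohort; the data and the name `alert_rate_and_npv` are illustrative, not from any deployed system.

```python
import math
from typing import List, Sequence, Tuple


def alert_rate_and_npv(scores: Sequence[float], outcomes: Sequence[int],
                       threshold: float) -> Tuple[float, float]:
    """Alert rate and negative predictive value for a risk score.

    scores:   model risk scores, one per patient
    outcomes: 1 if the patient later deteriorated, else 0
    An alert fires when score >= threshold. NPV is the share of
    non-alerted patients who did NOT deteriorate (nan if none are quiet).
    """
    alerted = [s >= threshold for s in scores]
    alert_rate = sum(alerted) / len(scores)
    quiet = [o for a, o in zip(alerted, outcomes) if not a]
    npv = quiet.count(0) / len(quiet) if quiet else math.nan
    return alert_rate, npv


# Hypothetical toy cohort of 10 patients, sorted by risk score.
TOY_SCORES: List[float] = [0.95, 0.80, 0.65, 0.50, 0.40, 0.30, 0.20, 0.10, 0.05, 0.02]
TOY_OUTCOMES: List[int] = [1, 1, 0, 1, 0, 0, 0, 0, 0, 0]

if __name__ == "__main__":
    for thr in (0.45, 0.60, 0.90):
        rate, npv = alert_rate_and_npv(TOY_SCORES, TOY_OUTCOMES, thr)
        print(f"threshold {thr:.2f}: alert rate {rate:.0%}, NPV {npv:.0%}")
```

Raising the threshold from 0.45 to 0.90 cuts the alert rate from 40% to 10% but lowers NPV from 100% to about 78%, so silence becomes less reassuring. Choosing the threshold is therefore a clinical decision as much as a technical one.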

Geographic Clustering: The 50x Variance

Healthcare AI adoption demonstrates extreme geographic clustering within countries. In the United States:

- Highest adoption: New Jersey (49% of hospitals), Massachusetts (43%), California (38%)

- Lowest adoption: New Mexico (0%), Wyoming (2%), Montana (3%)

This 50-fold variance cannot be explained by healthcare spending, population density, or hospital bed counts. Instead, adoption clusters around academic medical centers and spreads through professional networks. Physicians trained at AI-deploying institutions bring that experience to community hospitals. Clinicians attending conferences where AI is demonstrated return as internal champions.

The network effect explains adoption patterns better than economic variables. A rural Massachusetts hospital connected to Mass General through training relationships deploys AI at rates similar to urban Boston hospitals. A comparable rural New Mexico hospital, lacking these networks, deploys nothing.

Policy Implications

If adoption spreads through networks rather than geography, policy interventions should target network development. Creating “AI champion” hospitals that train physicians from surrounding regions produces spillover effects. Funding travel for community hospital staff to observe AI deployment costs less than equipment subsidies and generates longer-lasting cultural change.

Lessons for Ukrainian Healthcare

Ukrainian healthcare faces unique constraints—war-damaged infrastructure, workforce migration, resource limitations—but also opportunities. When you cannot train enough physicians, when infrastructure is destroyed, when resources are scarce, force-multiplier technologies provide disproportionate value.

Lesson 1: Start with Documentation, Not Diagnosis

Global data shows that 100% of surveyed hospitals have attempted documentation AI, with 53% reporting high success, versus imaging AI's 90% adoption attempt rate and high success rate below 20%. Deploy ambient note-taking tools first to build clinician confidence and deliver immediate productivity gains. Once trust is established, expand to higher-stakes diagnostic applications.

Lesson 2: Technology Maturity Matters More Than Performance

The most-cited adoption barrier (77% of organizations) is perceived technology immaturity, not cost or regulation. Deploying proven, stable AI systems from established vendors matters more than deploying cutting-edge research. A well-validated 2022 algorithm with four years of operational data is preferable to a state-of-the-art 2026 algorithm with no deployment history.

Lesson 3: Build Networks, Not Just Technology

Adoption clusters geographically around academic medical centers and spreads through professional networks, not economic incentives. Partner with European institutions experienced in AI deployment. Send Ukrainian physicians for training rotations. Host visiting faculty to demonstrate best practices. Network-building generates sustained adoption; equipment purchases generate shelf-ware.

Lesson 4: Prioritize Integration Over Innovation

Integration issues are cited as a barrier by 62% of organizations. Choose AI vendors with proven PACS/EHR integration pathways over those with superior standalone performance but poor interoperability. Embedded AI (within GE or Siemens imaging equipment) deploys faster than standalone AI, even when it is technically inferior.

Lesson 5: Pilot in Receptive Regions First

Regional adoption variance is normal—even within wealthy nations with uniform technology access. Identify Ukrainian hospitals and regions with clinical leadership receptive to AI, deploy there first to generate local success stories, then leverage those examples to drive broader adoption. Forcing AI onto resistant organizations wastes resources.

Conclusion: The Gap Is Not Technical

The paradox of 1,200 approved devices with 81% zero adoption reveals that healthcare AI’s primary challenges are operational, not technical. The technology works—validation studies demonstrate accuracy, FDA clearances confirm safety and efficacy, successful deployments prove clinical value. The barrier is trust, integration, and willingness to change clinical workflows.

For Ukrainian healthcare, this analysis suggests a clear deployment strategy: start with documentation AI to build confidence, partner with established vendors offering proven stable systems, create training networks with experienced European institutions, prioritize integration simplicity over technical sophistication, and sequence deployment from low-stakes to high-stakes applications.

The 81% of hospitals currently at zero adoption will not remain there. Workforce shortages, evidence accumulation, generational physician turnover, and market consolidation will drive adoption over the next 4 years. The question for Ukrainian healthcare is whether to learn from international experience and deploy strategically, or repeat the mistakes of early adopters who prioritized cutting-edge technology over operational maturity.

The data shows the path: proven tools, documentation first, network-building, and gradual trust-building from low to high-stakes applications. This is how successful healthcare AI deployment happens. Everything else is expensive experimentation.

References

- FDA AI approvals surge past 1k for radiology. (2025, December). The Imaging Wire.

- Characterizing industry payments for FDA-approved AI devices. (2025, December). Health Affairs Scholar.

- Adoption of AI in healthcare: Survey of health system priorities. (2025). Journal of the American Medical Informatics Association.

- American Medical Association. (2024). AMA physician AI sentiment report 2024.

- European radiologist AI survey 2024. (2024). Insights into Imaging.

- AI adoption challenges from healthcare providers' perspectives. (2025, October). ScienceDirect.

- American Hospital Association. (2024). Survey of AI adoption in U.S. healthcare systems. AHA Center for Health Innovation.

- Saenz, A., et al. (2024). Barriers to AI adoption in healthcare: A multi-site survey. Journal of the American Medical Informatics Association, 31(8), 1456-1467.

- Chung, J., et al. (2024). Ambient clinical documentation: Adoption patterns and success factors. Journal of the American Medical Informatics Association, 31(12), 2871-2884.

- FDA Center for Devices and Radiological Health. (2026). Artificial intelligence and machine learning (AI/ML)-enabled medical devices. Retrieved February 2026.
