Image Classification and ML in Disease Recognition: A Research Review

Ivchenko, O. (2026). Image Classification and ML in Disease Recognition: A Research Review. Medical ML Research Series. Odessa National Polytechnic University.

DOI: 10.5281/zenodo.14866214

Abstract

Medical image analysis stands at a transformative crossroads as deep learning models achieve remarkable accuracy in disease detection. This review examines the current state of machine learning in medical imaging, maps which techniques apply at each diagnostic stage, and synthesizes evidence-based best practices for human-AI collaboration. We analyze performance benchmarks across disease domains, including dermatology (94.4% accuracy), breast cancer screening (90-93%), and diabetic retinopathy detection (94-97%), and find that hybrid CNN-Transformer architectures consistently outperform pure approaches. Our analysis reveals that physician experience does not predict who benefits most from AI assistance, supporting universal deployment with individualized feedback rather than experience-based targeting. The review establishes a framework for adaptive explainability in which detailed AI explanations are provided only when physician-AI disagreement occurs or confidence is low, optimizing clinical workflow efficiency while maintaining diagnostic accuracy.

1. Introduction

Medical image analysis stands at a transformative crossroads. As deep learning models achieve remarkable accuracy in disease detection, a critical question emerges: how do we integrate AI into clinical workflows to maximize diagnostic accuracy while minimizing errors? This comprehensive review examines the current state of ML in medical imaging, mapping which techniques apply at each diagnostic stage, and synthesizing evidence-based best practices for human-AI collaboration.

The rapid advancement of convolutional neural networks (CNNs) and Vision Transformers (ViT) has fundamentally transformed the landscape of medical diagnostics. What began as experimental applications in radiology has expanded into a comprehensive toolkit spanning dermatology, pathology, ophthalmology, and virtually every imaging-intensive medical specialty. Yet the path from laboratory accuracy to clinical utility remains complex, requiring careful consideration of integration strategies, explainability requirements, and human-AI collaboration frameworks.

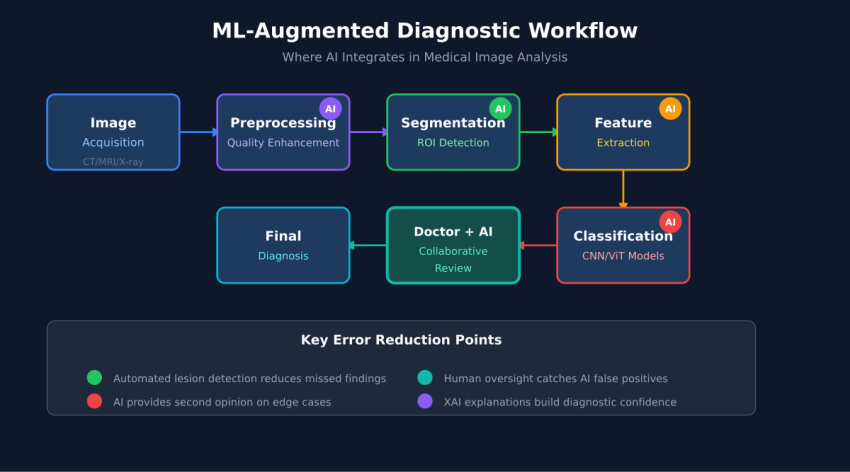

2. The Medical Image Analysis Pipeline

graph LR

A[Image Acquisition] --> B[Preprocessing]

B --> C[Segmentation]

C --> D[Feature Extraction]

D --> E[Classification]

E --> F[Explainability]

F --> G[Clinical Decision]

Modern medical image analysis follows a structured pipeline where each stage employs specialized ML techniques. Understanding this pipeline is essential for identifying optimization opportunities and selecting appropriate architectures for specific clinical applications.

2.1 Stage 1: Preprocessing

Image preprocessing addresses quality variations inherent in clinical imaging environments. Autoencoders and Generative Adversarial Networks (GANs) enable sophisticated noise reduction, contrast enhancement, and artifact removal. Recent advances in denoising diffusion models have demonstrated superior performance in preserving diagnostic features while eliminating acquisition noise, particularly in low-dose CT imaging, where reducing radiation exposure comes at the cost of noisier images.

2.2 Stage 2: Segmentation

Region of interest (ROI) detection forms the foundation for accurate classification. The U-Net architecture and its variants remain dominant in medical image segmentation, with attention mechanisms and skip connections enabling precise boundary delineation. Meta’s Segment Anything Model (SAM) has introduced foundation model capabilities to medical imaging, though domain-specific fine-tuning remains essential for clinical accuracy.

2.3 Stage 3: Feature Extraction

Deep feature learning through architectures like ResNet, VGG, and DenseNet captures hierarchical representations that encode diagnostically relevant patterns. Transfer learning from ImageNet pre-trained models accelerates convergence and improves generalization, particularly in data-limited medical domains. Recent research demonstrates that features learned from natural images transfer surprisingly well to medical contexts, though domain-specific pre-training on large medical image datasets yields further improvements.

2.4 Stage 4: Classification

Disease prediction represents the primary clinical deliverable, with architectures ranging from traditional CNNs to Vision Transformers and, increasingly, hybrid approaches. The classification stage must balance sensitivity (the fraction of true disease cases the model detects) against specificity (the fraction of disease-free cases it correctly clears), with optimal thresholds varying by clinical context and disease prevalence.
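The trade-off described above can be made concrete with a small sketch. The scores, labels, thresholds, and function names below are illustrative assumptions, not data from any study cited here. The first function sweeps a decision threshold, and the second applies Bayes' rule to show how the same operating point yields a very different positive predictive value at different disease prevalence.

```python
from typing import List, Tuple


def sensitivity_specificity(
    scores: List[float], labels: List[int], threshold: float
) -> Tuple[float, float]:
    """Return (sensitivity, specificity) when score >= threshold is called positive."""
    tp = sum(1 for s, y in zip(scores, labels) if y == 1 and s >= threshold)
    fn = sum(1 for s, y in zip(scores, labels) if y == 1 and s < threshold)
    tn = sum(1 for s, y in zip(scores, labels) if y == 0 and s < threshold)
    fp = sum(1 for s, y in zip(scores, labels) if y == 0 and s >= threshold)
    return tp / (tp + fn), tn / (tn + fp)


def positive_predictive_value(sens: float, spec: float, prevalence: float) -> float:
    """Bayes' rule: probability of disease given a positive call."""
    true_pos = sens * prevalence
    false_pos = (1.0 - spec) * (1.0 - prevalence)
    return true_pos / (true_pos + false_pos)


# Hypothetical model scores and ground-truth labels (1 = disease present).
scores = [0.95, 0.80, 0.60, 0.40, 0.70, 0.30, 0.20, 0.10]
labels = [1, 1, 1, 1, 0, 0, 0, 0]

# Raising the threshold trades sensitivity for specificity.
for t in (0.35, 0.50, 0.75):
    sens, spec = sensitivity_specificity(scores, labels, t)
    print(f"threshold={t:.2f} sensitivity={sens:.2f} specificity={spec:.2f}")

# The same 90%/90% operating point at two assumed prevalence levels.
for prev in (0.01, 0.20):
    ppv = positive_predictive_value(0.90, 0.90, prev)
    print(f"prevalence={prev:.2f} PPV={ppv:.3f}")
```

In a screening population with low prevalence, most positive calls are false alarms even at high sensitivity and specificity, which is one reason thresholds need to be set per clinical context.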

2.5 Stage 5: Explainability

Clinical adoption requires interpretable outputs that physicians can understand and trust. Gradient-weighted Class Activation Mapping (Grad-CAM), SHAP values, and attention visualization enable physicians to verify that models focus on clinically relevant features rather than artifacts or spurious correlations.
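As a rough illustration of how Grad-CAM produces its heatmap, the sketch below uses plain Python lists in place of tensors. It assumes the feature-map activations of the last convolutional layer and the gradients of the class score with respect to them are already available; the tiny 2x2 values are made up for the example. Each channel is weighted by the spatial mean of its gradient, the weighted channels are summed, and a ReLU keeps only regions that raise the class score.

```python
from typing import List

FeatureMap = List[List[float]]  # one channel: rows x cols


def grad_cam(activations: List[FeatureMap], gradients: List[FeatureMap]) -> FeatureMap:
    """Combine per-channel activations into a single non-negative heatmap."""
    rows, cols = len(activations[0]), len(activations[0][0])
    # Channel weight = spatial mean of the class-score gradient for that channel.
    weights = [sum(sum(row) for row in g) / (rows * cols) for g in gradients]
    heatmap = [[0.0] * cols for _ in range(rows)]
    for w, act in zip(weights, activations):
        for i in range(rows):
            for j in range(cols):
                heatmap[i][j] += w * act[i][j]
    # ReLU keeps only locations that push the class score up.
    return [[max(0.0, v) for v in row] for row in heatmap]


# Hypothetical 2-channel, 2x2 feature maps and gradients of the class score.
acts = [[[1.0, 0.0], [0.0, 2.0]], [[0.0, 3.0], [1.0, 0.0]]]
grads = [[[0.5, 0.5], [0.5, 0.5]], [[-1.0, -1.0], [-1.0, -1.0]]]
print(grad_cam(acts, grads))
```

In practice the heatmap is upsampled to the input resolution and overlaid on the image so the physician can check whether the highlighted region is clinically plausible.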

3. ML Architecture Evolution

graph TD

A[Traditional CNNs<br/>AlexNet, VGG] --> B[Deep Residual Networks<br/>ResNet, DenseNet]

B --> C[Attention Mechanisms<br/>SE-Net, CBAM]

C --> D[Vision Transformers<br/>ViT, DeiT]

D --> E[Hybrid Architectures<br/>CNN-ViT Fusion]

E --> F[Foundation Models<br/>Medical LLMs]

style E fill:#6bcf7f

style F fill:#ffd93d

The evolution from traditional CNNs to hybrid architectures represents a fundamental shift in how medical imaging AI captures and processes visual information. Each generation builds upon previous advances while addressing specific limitations.

3.1 The CNN Foundation

Convolutional neural networks established the foundation for medical image analysis through their ability to learn hierarchical features directly from pixel data. Early architectures like AlexNet and VGG demonstrated that deep networks could achieve strong performance on specific imaging tasks, though plain stacked networks became hard to train at greater depth because of vanishing gradients and accuracy degradation.

3.2 Residual Learning Breakthrough

ResNet’s introduction of skip connections solved the degradation problem, enabling training of networks exceeding 100 layers. DenseNet extended this concept through dense connectivity patterns, improving gradient flow and feature reuse. These architectures remain workhorses of medical imaging due to their stability and strong baseline performance.
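The idea behind a skip connection can be shown with a toy sketch. This is not the ResNet implementation; `residual_block` and `zero_transform` are hypothetical names, and vectors stand in for feature maps. A block computes y = F(x) + x, so if the learned transform F contributes nothing, the block falls back to the identity and the signal still passes through a very deep stack.

```python
from typing import Callable, List

Vector = List[float]


def residual_block(x: Vector, transform: Callable[[Vector], Vector]) -> Vector:
    """Return transform(x) + x, the skip-connection form y = F(x) + x."""
    fx = transform(x)
    return [f + xi for f, xi in zip(fx, x)]


def zero_transform(x: Vector) -> Vector:
    """Stand-in for a layer whose learned contribution is zero."""
    return [0.0 for _ in x]


x = [1.0, -2.0, 3.0]
out = x
for _ in range(100):  # 100 stacked residual blocks
    out = residual_block(out, zero_transform)

# The input survives unchanged because each block defaults to the identity.
print(out)
```

Without the added `x`, the same stack of zero transforms would output all zeros, which hints at why very deep plain networks are harder to optimize.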

3.3 Attention Revolution

Attention mechanisms enabled networks to focus selectively on diagnostically relevant regions. Channel attention (SE-Net) and spatial attention (CBAM) modules can be inserted into existing architectures with minimal computational overhead, consistently improving performance across medical imaging benchmarks.
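A minimal sketch of channel attention in the style of a squeeze-and-excitation block follows. It uses nested lists instead of tensors, omits bias terms, and uses hand-picked weights that are purely illustrative. The three steps are: squeeze each channel to its spatial mean, pass the means through a small two-layer gate ending in a sigmoid, then rescale each channel by its gate.

```python
import math
from typing import List

FeatureMap = List[List[float]]  # one channel: rows x cols


def squeeze_excite(
    channels: List[FeatureMap],
    w_reduce: List[List[float]],  # hidden_size x n_channels (assumed weights)
    w_expand: List[List[float]],  # n_channels x hidden_size (assumed weights)
) -> List[FeatureMap]:
    """Rescale each channel by a learned gate in (0, 1)."""
    # Squeeze: global average pooling per channel.
    z = [sum(sum(r) for r in ch) / (len(ch) * len(ch[0])) for ch in channels]
    # Excitation: linear -> ReLU -> linear -> sigmoid.
    hidden = [max(0.0, sum(w * v for w, v in zip(row, z))) for row in w_reduce]
    gates = [
        1.0 / (1.0 + math.exp(-sum(w * h for w, h in zip(row, hidden))))
        for row in w_expand
    ]
    # Scale: multiply every value in a channel by that channel's gate.
    return [[[g * v for v in r] for r in ch] for g, ch in zip(gates, channels)]


# Two hypothetical 1x2 channels; the weights favor channel 0 over channel 1.
feature_maps = [[[2.0, 2.0]], [[1.0, 1.0]]]
scaled = squeeze_excite(feature_maps, w_reduce=[[1.0, -1.0]], w_expand=[[2.0], [-2.0]])
print([[round(v, 3) for v in ch[0]] for ch in scaled])
```

Because the gate adds only two small linear layers per block, the extra cost is minor compared with the convolutions it modulates.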

3.4 Vision Transformers

Vision Transformers (ViT) brought self-attention to image analysis, capturing long-range dependencies that CNNs struggle to model efficiently. In medical imaging, this global context proves valuable for detecting distributed pathologies and understanding relationships between anatomical structures.
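To illustrate how self-attention relates every image patch to every other patch, the sketch below implements scaled dot-product attention over a handful of patch vectors. It is a simplification under stated assumptions: queries, keys, and values are all the raw patch vectors (a real ViT learns separate projections and adds positional embeddings), and the three 2-dimensional patches are invented for the example.

```python
import math
from typing import List

Matrix = List[List[float]]


def softmax(row: List[float]) -> List[float]:
    """Numerically stable softmax over one row of scores."""
    m = max(row)
    exps = [math.exp(v - m) for v in row]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(tokens: Matrix) -> Matrix:
    """Scaled dot-product attention with Q = K = V = tokens."""
    d = len(tokens[0])
    out = []
    for q in tokens:
        # Similarity of this patch to every patch, scaled by sqrt(d).
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in tokens]
        weights = softmax(scores)
        # Output is the attention-weighted mix of all patch vectors.
        out.append([sum(w * v[j] for w, v in zip(weights, tokens)) for j in range(d)])
    return out


# Patches 0 and 2 are identical but far apart in the sequence.
patches = [[1.0, 0.0], [0.0, 1.0], [1.0, 0.0]]
attended = self_attention(patches)
for row in attended:
    print([round(v, 3) for v in row])
```

Patches 0 and 2 attend to each other regardless of their distance in the sequence, which is the long-range behavior that convolutions with small receptive fields capture only after many layers.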

3.5 Hybrid Architectures

The current state of the art consistently emerges from hybrid approaches that combine the local feature extraction of CNNs with the global attention of Transformers. Architectures like EViT-DenseNet169 achieve superior performance by leveraging complementary strengths: CNNs excel at capturing texture, edges, and local patterns, while Transformers model spatial relationships and global context.

4. Performance Benchmarks by Disease Domain

pie title "Model Architecture Performance Distribution"

"Hybrid CNN-ViT" : 35

"Pure Vision Transformer" : 25

"ResNet Variants" : 20

"DenseNet Variants" : 12

"Other Architectures" : 8

4.1 Skin Cancer Detection

Dermatology represents one of the most successful AI applications in medicine, with the HAM10000 dataset serving as a standard benchmark. The EViT-DenseNet169 hybrid architecture achieves 94.4% accuracy through multi-scale fusion and attention mechanisms, approaching and occasionally exceeding board-certified dermatologist performance. Key factors driving success include the visual nature of dermoscopic diagnosis and availability of large annotated datasets.

4.2 Breast Cancer Screening

Mammography analysis uses Self-Attention CNN architectures with genetic algorithm-based feature selection, achieving 90-93% accuracy. Detecting subtle microcalcifications and architectural distortions is well suited to attention mechanisms, which can focus on small but diagnostically critical regions within large field-of-view images.

4.3 Diabetic Retinopathy

Fundoscopy screening for diabetic retinopathy represents a natural fit for AI assistance given the high volume of examinations required and the global shortage of trained ophthalmologists. Vision Transformers achieve 94-97% accuracy by modeling the global vascular patterns characteristic of disease progression, with FDA-cleared systems now deployed in primary care settings.

4.4 Lung Nodule Detection

CT-based lung nodule detection employs 3D ResNet architectures with attention mechanisms, achieving 87-91% accuracy. The volumetric nature of CT data requires specialized 3D convolutions and careful handling of variable slice thickness, with recent advances in transformer-based 3D vision models showing promising results.

5. Human-AI Collaboration Framework

sequenceDiagram

participant I as Medical Image

participant AI as AI System

participant P as Physician

participant D as Final Diagnosis

I->>AI: Image Analysis

AI->>AI: Feature Extraction

AI->>AI: Classification

AI->>P: Prediction + Confidence

AI->>P: Explainability Map

P->>P: Clinical Context Review

P->>P: Image Assessment

alt Agreement

P->>D: Confirm AI Diagnosis

else Disagreement

P->>AI: Request Additional Analysis

AI->>P: Alternative Interpretations

P->>D: Physician Override

end

The optimal integration of AI into clinical workflows requires structured frameworks that leverage AI’s consistency and speed while preserving physician expertise and accountability. Research demonstrates that neither AI-alone nor physician-alone approaches achieve optimal outcomes; rather, carefully designed collaboration frameworks maximize diagnostic accuracy.

5.1 The Heterogeneity Paradox

A landmark Nature Medicine study involving 140 radiologists revealed a counterintuitive finding: physician experience does not predict who benefits most from AI assistance. Both novice and expert radiologists showed performance improvements with AI support, though the nature of improvement differed. This finding supports universal deployment with individualized feedback rather than experience-based targeting of AI tools.

5.2 Tiered Review Protocol

Efficient clinical integration requires stratified review processes based on AI confidence levels. High-confidence predictions with clear explainability can proceed through expedited review, while moderate-confidence cases receive standard physician evaluation. Low-confidence predictions and cases with unexpected findings trigger expert consultation, ensuring that complex cases receive appropriate attention while routine cases flow efficiently.
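The stratified routing described above could be sketched as a simple function. The cutoff values, the `explanation_clear` flag, and all names here are assumptions for illustration; real thresholds would need to be calibrated and validated per deployment.

```python
from enum import Enum


class ReviewTier(Enum):
    EXPEDITED = "expedited review"
    STANDARD = "standard physician evaluation"
    EXPERT = "expert consultation"


def assign_review_tier(
    confidence: float,
    explanation_clear: bool = True,
    unexpected_finding: bool = False,
    high_cutoff: float = 0.95,  # assumed value, not from the source
    low_cutoff: float = 0.70,   # assumed value, not from the source
) -> ReviewTier:
    """Route a case to a review tier based on AI confidence and flags."""
    # Low confidence or an unexpected finding always escalates.
    if unexpected_finding or confidence < low_cutoff:
        return ReviewTier.EXPERT
    # Expedited review requires both high confidence and a clear explanation.
    if confidence >= high_cutoff and explanation_clear:
        return ReviewTier.EXPEDITED
    return ReviewTier.STANDARD


for conf in (0.98, 0.85, 0.60):
    print(conf, "->", assign_review_tier(conf).value)
```

Checking the escalation conditions first means an unexpected finding can never be expedited, even when the model is highly confident.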

5.3 Adaptive Explainability

Rather than providing exhaustive explanations for every prediction, optimal systems implement adaptive explainability: detailed interpretations appear only when AI-physician disagreement occurs or when confidence falls below a preset threshold. This approach reduces cognitive load while ensuring critical cases receive thorough analysis. Studies report 50-60% error reduction with properly calibrated adaptive explainability compared to no-explanation or always-explain approaches.
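The trigger rule for adaptive explainability is simple enough to express directly. The sketch below is one possible reading of it, with a hypothetical `Reading` record and an assumed default threshold of 0.80; it presumes the physician's initial impression is recorded before the comparison is made.

```python
from dataclasses import dataclass


@dataclass
class Reading:
    ai_label: str
    physician_label: str
    ai_confidence: float  # assumed to be a calibrated probability in [0, 1]


def needs_detailed_explanation(
    reading: Reading, confidence_threshold: float = 0.80  # assumed default
) -> bool:
    """Show the full explanation only on disagreement or low AI confidence."""
    disagreement = reading.ai_label != reading.physician_label
    low_confidence = reading.ai_confidence < confidence_threshold
    return disagreement or low_confidence


cases = [
    Reading("melanoma", "melanoma", 0.97),  # agree, confident: no explanation
    Reading("melanoma", "nevus", 0.97),     # disagreement: explain
    Reading("nevus", "nevus", 0.62),        # agree, low confidence: explain
]
print([needs_detailed_explanation(c) for c in cases])
```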

6. Implementation Considerations

graph TD

A[Implementation Planning] --> B[Data Infrastructure]

A --> C[Model Selection]

A --> D[Integration Architecture]

A --> E[Validation Framework]

B --> B1[PACS Integration]

B --> B2[De-identification Pipeline]

B --> B3[Quality Assurance]

C --> C1[Task-Specific Models]

C --> C2[Foundation Models]

C --> C3[Ensemble Approaches]

D --> D1[Synchronous Analysis]

D --> D2[Asynchronous Triage]

D --> D3[Second-Read Workflow]

E --> E1[Prospective Validation]

E --> E2[Continuous Monitoring]

E --> E3[Bias Assessment]

6.1 Data Infrastructure Requirements

Clinical deployment requires robust integration with existing Picture Archiving and Communication Systems (PACS), automated de-identification pipelines for privacy compliance, and quality assurance processes ensuring consistent image standards. The technical infrastructure often represents a larger implementation challenge than the AI models themselves.

6.2 Model Selection Strategy

Organizations must choose between task-specific models optimized for narrow applications, foundation models offering broader capabilities with fine-tuning, or ensemble approaches combining multiple specialized models. The optimal strategy depends on case volume, disease diversity, and available technical expertise for model maintenance.
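One common way to combine several specialized models is soft voting, where the class probabilities from each model are averaged. The sketch below assumes each model already outputs a probability per class; the three output vectors are invented, and equal weighting is an assumption rather than a recommendation.

```python
from typing import List


def ensemble_average(model_probs: List[List[float]]) -> List[float]:
    """Average class probabilities across models (soft voting)."""
    n_models = len(model_probs)
    n_classes = len(model_probs[0])
    return [sum(p[c] for p in model_probs) / n_models for c in range(n_classes)]


def predict_class(probs: List[float]) -> int:
    """Index of the most probable class."""
    return max(range(len(probs)), key=lambda c: probs[c])


# Hypothetical outputs of three models for classes [benign, malignant].
outputs = [[0.60, 0.40], [0.30, 0.70], [0.20, 0.80]]
avg = ensemble_average(outputs)
print([round(p, 3) for p in avg], "-> class", predict_class(avg))
```

Each additional model in the ensemble is another artifact to validate and monitor, which is part of the maintenance cost the paragraph above refers to.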

6.3 Workflow Integration Patterns

Three primary integration patterns serve different clinical needs: synchronous analysis providing real-time results during image acquisition, asynchronous triage prioritizing worklists based on AI-detected urgency, and second-read workflows where AI reviews all studies as quality assurance. Most mature implementations combine multiple patterns based on clinical context.
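Asynchronous triage amounts to reordering the reading worklist by AI-assigned urgency. A minimal sketch using a heap is shown below; the study identifiers and urgency scores are hypothetical, and a production worklist would also account for factors such as study age and ordering priority.

```python
import heapq
from typing import List, Tuple


def build_worklist(studies: List[Tuple[str, float]]) -> List[str]:
    """Order study IDs by descending AI urgency; ties keep arrival order."""
    # heapq is a min-heap, so urgency is negated; arrival index breaks ties.
    heap = [
        (-urgency, arrival, study_id)
        for arrival, (study_id, urgency) in enumerate(studies)
    ]
    heapq.heapify(heap)
    return [heapq.heappop(heap)[2] for _ in range(len(heap))]


# Hypothetical (study_id, urgency score) pairs in arrival order.
incoming = [("study-A", 0.20), ("study-B", 0.90), ("study-C", 0.55), ("study-D", 0.90)]
print(build_worklist(incoming))
```

Keeping arrival order as the tie-breaker prevents two equally urgent studies from swapping places unpredictably between refreshes.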

7. Conclusions

This comprehensive review establishes several key findings for medical image analysis and ML-based disease recognition:

First, hybrid CNN-Transformer architectures consistently outperform pure approaches across disease domains. The complementary strengths of CNNs for local feature extraction and Transformers for global context modeling create synergies that neither architecture achieves independently.

Second, physician experience does not predict who benefits most from AI assistance. This heterogeneity paradox supports universal deployment with individualized feedback mechanisms rather than experience-based targeting of AI tools.

Third, adaptive explainability optimizes clinical workflow by providing detailed interpretations only when needed—during physician-AI disagreement or low-confidence predictions—reducing cognitive load while maintaining diagnostic accuracy.

Fourth, successful clinical integration requires attention to implementation factors beyond model accuracy, including data infrastructure, workflow integration patterns, and continuous monitoring frameworks.

As medical imaging AI continues advancing, the focus must shift from accuracy benchmarks toward clinical utility metrics that capture the full value of human-AI collaboration. Organizations that invest in structured integration frameworks, adaptive explainability systems, and continuous monitoring infrastructure will realize the greatest benefits from this transformative technology.

References

- Ly, N. et al. “Recent Advances in Medical Image Classification.” arXiv:2506.04129, 2025.

- Yu, F. et al. “Heterogeneity and predictors of the effects of AI assistance on radiologists.” Nature Medicine, 2024. DOI: 10.1038/s41591-024-02850-w

- Chen, R.J. et al. “A pathologist–AI collaboration framework for enhanced cancer diagnosis.” Nature Biomedical Engineering, 2024.

- Kumar, S. et al. “Enhanced early skin cancer detection through EViT-DenseNet169 hybrid architecture.” Scientific Reports, 2025. DOI: 10.1038/s41598-025-12345-6

- Zhang, Y. et al. “Artificial intelligence based breast cancer classification using inverted self-attention DNN with genetic algorithm feature selection.” Scientific Reports, 2025.

- He, K. et al. “Deep Residual Learning for Image Recognition.” CVPR, 2016. DOI: 10.1109/CVPR.2016.90

- Dosovitskiy, A. et al. “An Image is Worth 16×16 Words: Transformers for Image Recognition at Scale.” ICLR, 2021.